AI: A Tale of Two AI Questions. RTZ #1026

The Bigger Picture, Sunday, March 15, 2026

As I’ve articulated for months now, investors’ biggest uncertainties about the AI Tech Wave right now center on the following two key questions.

-

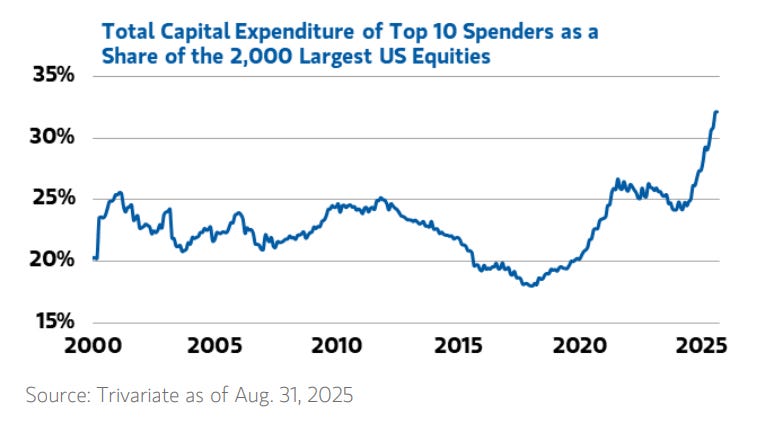

Will the unprecedented investments in AI Data Centers to ramp both AI training and inference compute to generate massive amounts of intelligence tokens be so ahead of mainstream demand that revenues, profits, margins and free cash flow often lag the front-loaded investments in AI capex of all forms, from chatbots to agents and beyond?

-

And if the demand does come through from mainstream businesses and consumers, will the variable cost nature of AI inference compute overwhelm the rapidly growing revenues of AI leaders like OpenAI, Anthropic and other LLM AI companies?

The Bigger Picture I’d like to focus on this Sunday is to outline why the probabilities favor answering both questions with ‘Probably, before they both get better’. Two opposite things can be true at more or less the same time.

The devil is in the delta within that ‘more or less’. Current assessments of the underlying technologies indicate that investors will not know ‘what to make of AI for a while yet’, as the Economist outlines this week.

Let’s unpack the drivers behind these probabilities.

Axios frames both answers around the AI training and inference compute ramp reality in “Don’t get used to cheap AI”:

“AI may never be as cheap to use as it is today.”

“Why it matters: AI companies are hooking users with low prices that won’t last — straight out of the Amazon and Uber playbook.”

Of course, the questions are louder ahead of the unprecedentedly large mega-IPOs being planned this year by the three biggest AI companies today: SpaceX/xAI, OpenAI and Anthropic. With many others in the wings.

“The big picture: The push to show profits before IPOs could end the era of cheap AI.”

“‘These LLM companies are going to go public and they’re going to raise prices because they have to,’ May Habib, CEO of Writer, told Axios.”

Performance and pricing are both trending in a positive direction for now:

“State of play: New models from OpenAI, Google and Anthropic are generally getting faster and cheaper.”

“The industry was fixated on training chips. Now Nvidia and its rivals are focused on inference — the computing that lets models answer your questions.”

“Aggregate token pricing — the cost of generating text — has fallen, partly due to a massive efficiency jump in inference.”

We’ll see more on the last two points above from Nvidia founder/CEO Jensen Huang’s keynote this week at the company’s annual GTC 2026 confab in California, which should go through Nvidia’s long trek ahead.

“Driving the news: Nvidia is expected to unveil a more efficient AI chip at its developer conference next week, according to reports.”

“As prices fall, usage is surging — and total corporate AI spending is rising, according to Ramp, which tracks business expenses.”

But investors are fretting about the math not mathing at the moment:

“Zoom in: Yet margins are still negative for AI labs, according to PitchBook.”

The hyperscaler cloud companies have similar issues, from Amazon AWS to Microsoft to Google and beyond. Microsoft’s margins, for instance, have gone from the 70s to the high 60s, with recent guidance pointing to the mid-60s. And other companies like Meta are joining in the ‘spend it ’til you drop’ race.

Yet investors are still leaning into it all:

“Zoom out: Fierce competition has pushed labs to price aggressively, squeezing profits.”

“In February, 90% of VC funding dollars went to AI startups. OpenAI and Anthropic alone captured 74% of VC dollars, according to Crunchbase.”

“Labs also get discounted compute through strategic partnerships — sometimes described on Wall Street as circular financing. Microsoft reportedly provides OpenAI compute at below-market rates.”

“Even with those discounts, OpenAI and Anthropic are still losing money.”

Again, it’s the variable cost race as described above.

“Between the lines: Every time you send a complex query, the AI lab is effectively losing money on the transaction.”

“Free accounts have limited token use, which is expanded when you sign up for a standard consumer subscription. But those low-cost subscriptions are among the most heavily subsidized.”

“In February, 28% of corporate OpenAI chat spending flowed through personal consumer plans rather than higher-margin enterprise tiers, according to Ramp.”

“Ramp’s data shows Anthropic capturing the majority of tracked business AI spend.”
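The ‘between the lines’ point about subsidized subscriptions comes down to simple per-user arithmetic: a flat monthly price against a variable inference bill. Here is a minimal Python sketch of that arithmetic. Every number in it (the plan prices, the token volumes, the cost per million tokens) is a made-up assumption for illustration only, not a figure from Axios, Ramp, PitchBook or any AI lab, and the `Plan` class is a hypothetical helper.

```python
from dataclasses import dataclass


@dataclass
class Plan:
    """A hypothetical subscription plan; all fields are illustrative assumptions."""
    name: str
    monthly_price_usd: float
    tokens_per_month: float             # tokens one user consumes in a month
    cost_per_million_tokens_usd: float  # the provider's variable inference cost

    def inference_cost(self) -> float:
        """Variable cost of serving this user for one month."""
        return self.tokens_per_month / 1_000_000 * self.cost_per_million_tokens_usd

    def margin(self) -> float:
        """Monthly price minus variable inference cost (negative = subsidized)."""
        return self.monthly_price_usd - self.inference_cost()


# Made-up numbers: the same heavy user on a consumer plan vs an enterprise seat.
consumer = Plan("consumer", 20.0, 10_000_000, 3.0)
enterprise = Plan("enterprise", 60.0, 10_000_000, 3.0)

for plan in (consumer, enterprise):
    print(f"{plan.name}: cost ${plan.inference_cost():.2f}, margin ${plan.margin():.2f}")
```

Under these assumed numbers the consumer plan loses money on the heavy user while the enterprise seat does not, which is why the mix of spend between consumer and enterprise tiers matters so much.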

And this reality has precedents, only with fewer zeroes.

“Flashback: Silicon Valley has seen this movie before.”

“The so-called ‘millennial lifestyle subsidy’ meant VC money helped underwrite cheap Uber rides and DoorDash deliveries.”

“Before that, Amazon built its base with low prices, free shipping and, for years, no sales tax in most states.”

“Eventually, all of these companies had to charge enough to cover costs — and make a profit.”

The question is how long the era of ‘circular financings and deals’ continues before the music pauses and/or stops:

“Follow the money: The current iteration of AI subsidies won’t last forever.”

“Both OpenAI and Anthropic are widely expected to go public. Public investors will demand earnings growth and expanding margins.”

“Even as chips get more efficient, total spending keeps rising. Labs need more capacity, more upgrades and more supply to meet demand.”

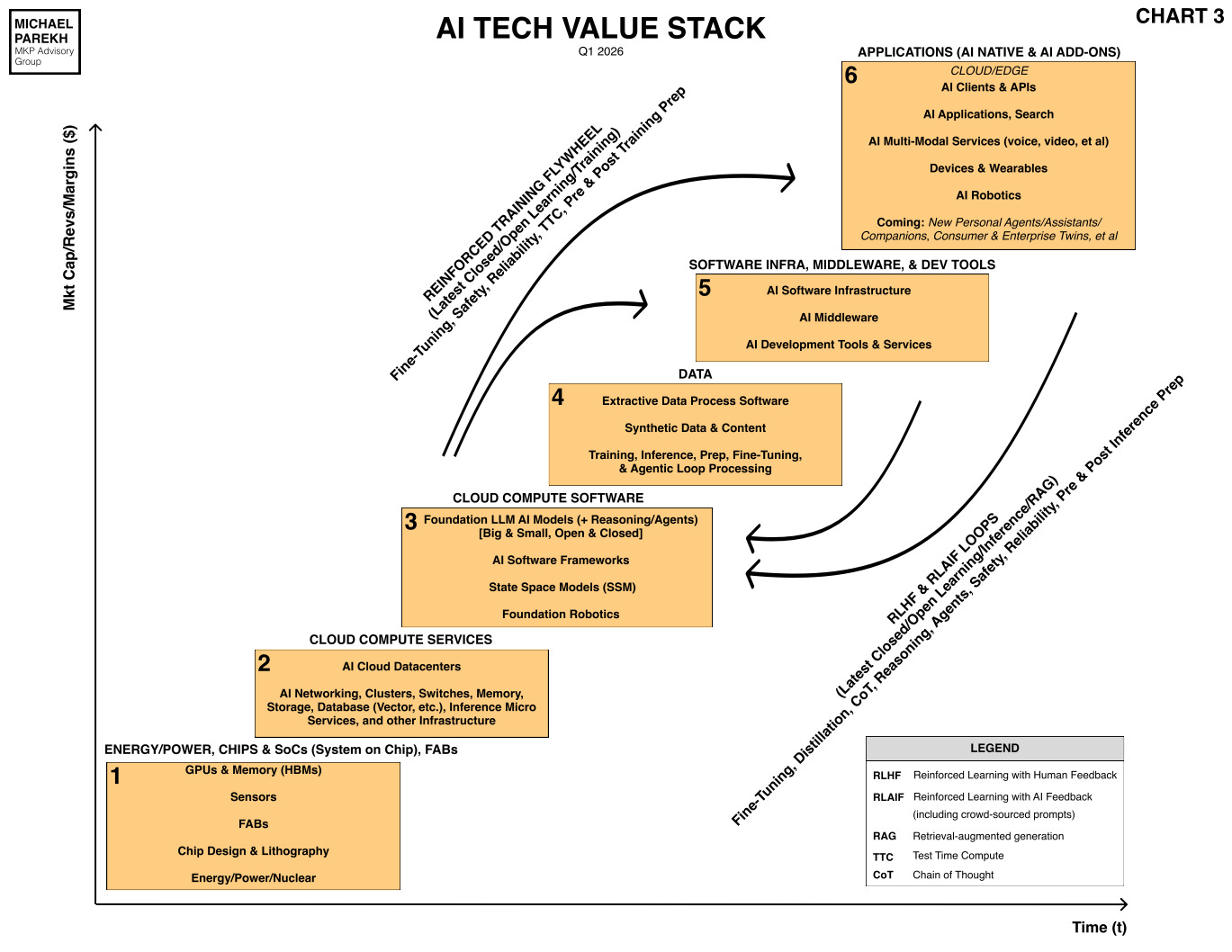

Thus the ‘Probably, before they both get better’ answers above, for now, for most of the companies up and down the AI Tech Stack below:

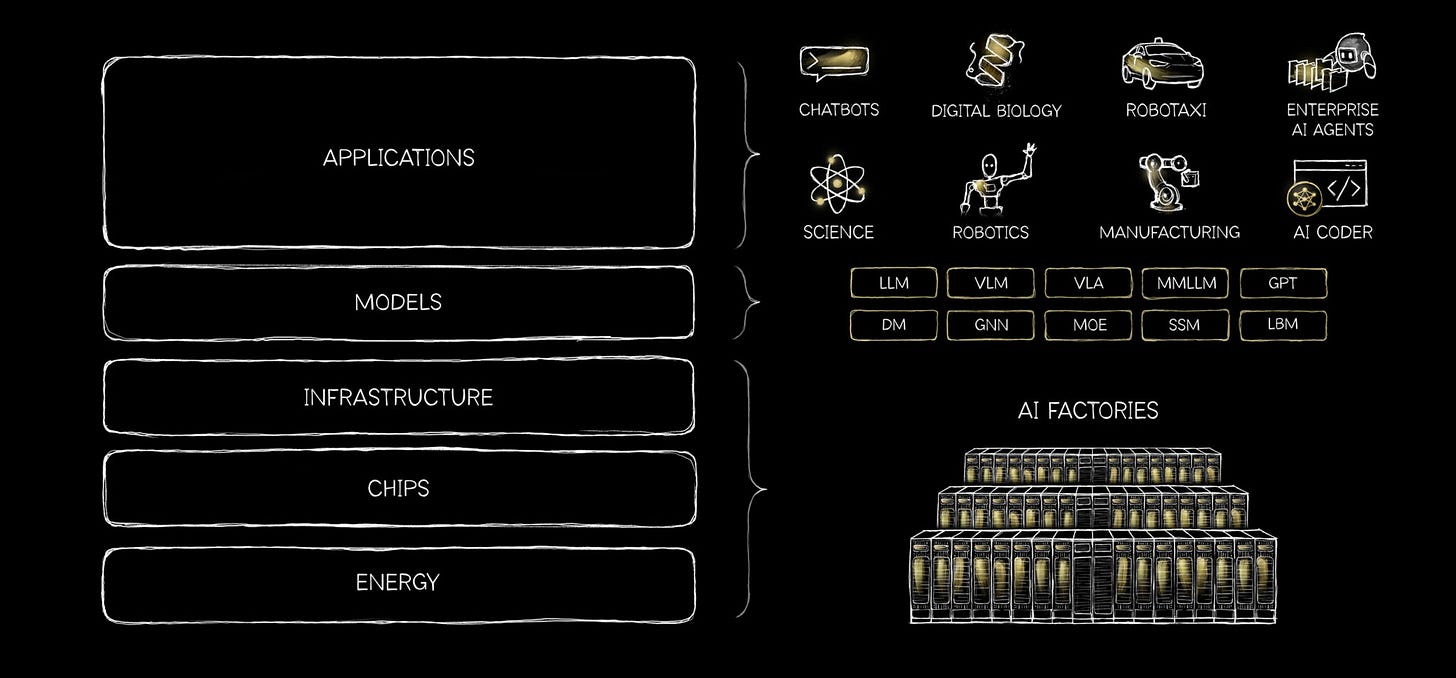

What Nvidia’s Jensen Huang describes as the ‘5-Layer AI Cake’:

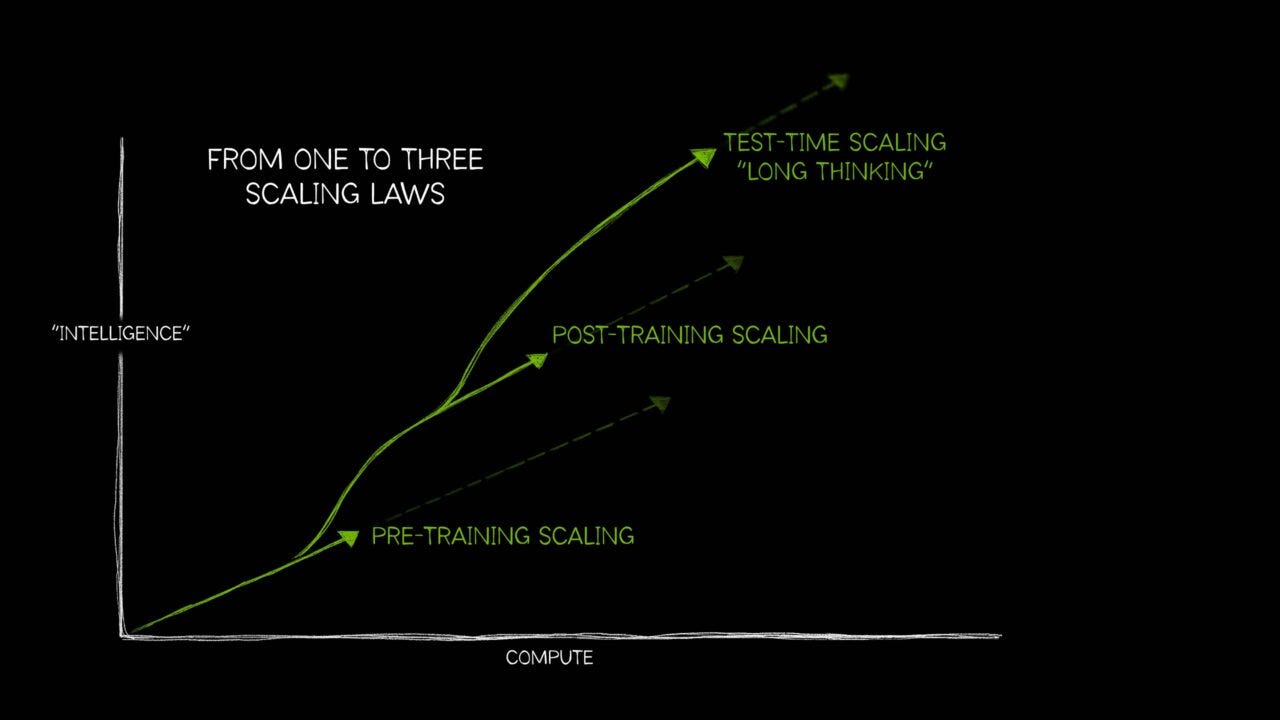

It’s the 6-box AI Tech Stack above without the critical ‘Data’ Box 4 in my yellow chart, and the reinforcement training and inference learning curves that drive the whole AI thing this time vs prior tech waves, to make it all Scale at the levels needed for a long time ahead.

Going back to the Axios piece:

“The bottom line: The costs of AI will keep going down.”

“But total spend from customers will need to keep going up if AI companies are going to become profitable and investors are ever going to get returns on their massive investments.”
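That bottom line is a price-times-volume relationship: if the price per token keeps falling, usage has to grow faster than the price falls for total spend to rise. Here is a rough Python sketch of that relationship. The function name and the example percentages are my own illustrative assumptions, not figures from the Axios piece.

```python
def required_usage_growth(price_decline: float, target_spend_growth: float) -> float:
    """Usage growth needed so that spend = price x usage hits a target.

    price_decline: fractional drop in price per token (0.5 means prices halve).
    target_spend_growth: desired fractional growth in total spend (0.2 means +20%).
    Both inputs are hypothetical; the result is the fractional growth in usage.
    """
    if not 0.0 <= price_decline < 1.0:
        raise ValueError("price_decline must be in [0, 1)")
    # new_spend / old_spend = (1 - price_decline) * (1 + usage_growth)
    return (1.0 + target_spend_growth) / (1.0 - price_decline) - 1.0


# Illustrative only: if prices halve and total spend should still grow 20%.
growth = required_usage_growth(price_decline=0.5, target_spend_growth=0.2)
print(f"Usage must grow by about {growth:.0%}")
```

The takeaway from the sketch is that flat usage with falling prices means shrinking revenue, so the ‘costs keep going down’ story only works for investors if usage compounds faster than prices drop.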

It’s useful to take another look at the Bigger Picture of the two key questions above, at this point in the AI Tech Wave.

Especially with some big public market debuts ahead. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)