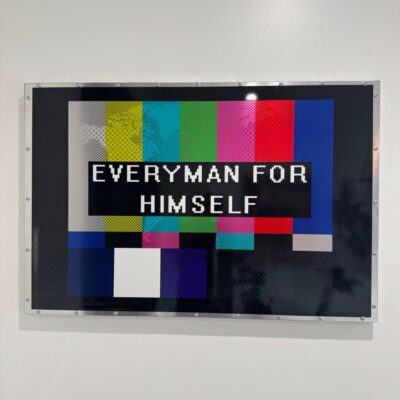

AI finding its way out of its 1980s MS-DOS user interface stage. ARD #74

The frame running through every item today: AI is hunting for its truly mainstream user-interface and user-experience (UI/UX) fit, blending typing, voice, and rich graphical representations into something a mainstream user can actually live in and use without having to learn more tech vocabulary and usage skills.

Here’s what ties today’s three Takes together:

In the three years since ChatGPT, AIs have evolved from a chatbot experience in a “command line” window to AI Agents run from a command line window.

It’s AI stuck in the “MS-DOS era” of PCs in the 1980s. We’ve not gotten to Windows and Mac graphical interfaces yet.

The new thing, Voice AI, is currently mostly separated from the keyboard-driven AI interface. Big and small tech companies are innovating fast to change this.

Worth a brief reminder before diving in: when OpenAI introduced its voice modality inspired by the movie Her, the objective was to make the AI feel more human — friendly voices, conversational warmth, personality.

That’s a business strategy, not an accident. Companies are leveraging the human tendency to anthropomorphize anything that looks and feels human — the way we bond with pets, or the way Tom Hanks bonded with a volleyball in Cast Away. The warmer the AI, the more you connect, the more you come back. Worth knowing it’s designed-in.

Three Takes today on three specific UI/UX innovations from across the AI stack. Plus a Gadget AI on Google’s new “Googlebook” — ChromeOS + Android + Gemini Intelligence — and two reader questions on Chromebook today vs Chromebook tomorrow:

(1) Thinking Machines Lab Introduces “Time-Aware” Voice-Powered AI Language Models. Thinking Machines Lab (TML), Mira Murati’s post-OpenAI lab that raised a $2 billion seed round at a $12 billion valuation a few months ago, just previewed language models optimized for voice and text together. The framing TML uses: “time-aware,” “fully duplex” interaction models with human users. The demo is near-realtime AI voice and video conversation built on a model architecture designed from the start for human-paced interaction rather than turn-by-turn chatbot exchanges. Response time is under 400 milliseconds, short enough that the conversation feels natural and human-like. The demo video shows a TML engineer speaking in Hindi while being translated live into English. A lot of operating systems can already do live translation, but TML’s is a bit more seamless, and the difference shows up in latency if you listen for it. High-pedigree AI lab; research-stage; not yet released to users. Worth watching as a separate path from Google’s, Apple’s, and OpenAI’s own voice-AI efforts. The reporting: Thinking Machines, Interaction Models. Demo: TML YouTube, Interaction Models. VentureBeat coverage: Thinking Machines Shows Off Preview of Near-Realtime AI Voice and Video Conversation. Companion context (also cited yesterday in Ep 73 Q1): WSJ, Typing Is Being Replaced by Whispering. Standing theses on voice AI: AI-RTZ #492, OpenAI’s Best and Worst of Times, and AI-RTZ #453, The Voice AI Competition.

MP Take: A fresh take by Thinking Machines Lab (TML) on language models that are optimized for voice and text together. Some interesting ideas here. Google and Apple are also focused on these issues, as is OpenAI.

All the TML efforts here are designed for a truly “time-aware,” “fully duplex” (meaning both sides can talk at the same time) interaction model with human users. Lots more work is needed beyond the current research stage, and the model is not yet released to users. Definitely an LLM AI experiment worth watching from a high-pedigree AI lab. The architecture is interesting in how it bakes the UI/UX into the model itself, loosely analogous to how Windows was built on top of MS-DOS, but at the model layer instead of the OS layer. What took the better part of a decade in the PC era is likely to take a few years, maybe even a few months, in the AI era.

(2) Anthropic and Andrej Karpathy of “Vibe Coding” Fame: HTML Output for AI Models for Richer Human Visualization Beyond Markdown Files. The pitch: AI agents currently output markdown (.md) files as their default human-readable rendering. That works for developers; it does not work for mainstream users who don’t know what a markdown file is or why it’s the default. Karpathy and Anthropic’s Thariq are independently arguing for HTML as a step beyond markdown: richer formatting, native browser rendering, easier embedding of images and tables, and closer to what a mainstream user expects from “output I can read and share.” Click on a clean HTML output and it renders richly, with graphs and charts and colors, very different from what a raw .md markdown file looks like. Karpathy’s framing: Andrej Karpathy on X, HTML-as-AI-output. Anthropic-side from Thariq: Thariq at Anthropic on X, HTML output for AI models. Standing thesis on vibe coding + AI agent UX: AI-RTZ #661, Developers Loving Vibe Coding.

MP Take: Definitely agree the AI industry needs a visualization mechanism beyond markdown (md) files for current AI Agent apps. Each of the main companies presents output to humans differently, even though they all rely on md files, and none explains to mainstream users what those files are or why they’re being used.

Google Gemini will save to Google Docs if asked, which is more readable. Perplexity and OpenAI ChatGPT will save to docx, the Microsoft Word document format, when asked. But it is not a seamless experience. Current AI Agent output is not instantaneously readable by humans, nor is it designed for easy instant human editing and sharing.

Definitely a hole in AI Agent products that needs fixing. Karpathy and Anthropic’s HTML idea is a step toward the solution, but not the solution yet. Most of the output you get today in AI agents assumes you know all the technical terms the model is using (markdown files, context windows, sub-agents), and you’re supposed to learn this stuff on the fly. Same as what early adopters had to do in the early days of the PC in the 80s. We had to crawl through the broken glass of techie buzzwords and learn them. And that’s where we are with the AI chatbots and agents.
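For readers who want to see the markdown-versus-HTML gap concretely, here is a minimal Python sketch. It is an illustration only, not how Karpathy, Anthropic, or any agent product actually produces HTML. The function name `markdown_to_html` and the sample text are hypothetical, and the converter deliberately handles only a tiny subset of markdown (`# ` headings, `- ` bullets, plain paragraphs).

```python
import html


def markdown_to_html(markdown_text: str) -> str:
    """Convert a tiny markdown subset to HTML (hypothetical helper, illustration only).

    Supported: '# ' headings, '- ' bullets, and plain paragraphs.
    """
    parts = []
    in_list = False
    for raw_line in markdown_text.splitlines():
        line = raw_line.strip()
        is_bullet = line.startswith("- ")
        # Close an open bullet list as soon as a non-bullet line appears.
        if in_list and not is_bullet:
            parts.append("</ul>")
            in_list = False
        if not line:
            continue
        if line.startswith("# "):
            parts.append(f"<h1>{html.escape(line[2:])}</h1>")
        elif is_bullet:
            if not in_list:
                parts.append("<ul>")
                in_list = True
            parts.append(f"<li>{html.escape(line[2:])}</li>")
        else:
            parts.append(f"<p>{html.escape(line)}</p>")
    if in_list:
        parts.append("</ul>")
    return "\n".join(parts)


# Made-up sample of what an agent might hand back as raw markdown.
agent_output = """# Weekly summary
- Revenue up 4%
- Two open issues
Next review on Friday."""

page = markdown_to_html(agent_output)
print(page)
```

Opened as a raw .md file, the sample shows its pound signs and dashes as-is. Saved as an .html file, the converted version opens in any browser as a heading, a bulleted list, and a paragraph, with no markdown knowledge required of the reader.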

(3) Anthropic Claude’s “Agent View.” Building directly on Take 2’s problem statement, Anthropic’s Claude Code has introduced an “Agent View”: a graphical surface for watching an AI agent work in something other than the command-line plus markdown-file format that defined the first three years post-ChatGPT. It lets you take a whole bunch of running sessions, with their agents and sub-agents, and track them more easily in a view that is better optimized for people. It’s a developer-facing solution today (Claude Code is a CLI/IDE tool for developers), but the architectural pattern matters for the broader mainstream-AI UX question. The reporting: Anthropic, Claude Code Agent View Docs. Standing thesis on Anthropic’s UX moves: AI-RTZ #960, Anthropic’s Moment in the Vibe.

MP Take: This builds on the problem/solution discussed in #2 above. Again, a good interim solution for coders. Anthropic now needs to take this to Cowork, which is built on top of Claude Code, and meant for mainstream users. Definitely an interesting idea and a work in progress. MP will track and revisit in the weeks to come.

Plus, Gadget AI: Google’s New “Googlebook” with ChromeOS and Android in a Single OS, Enhanced with Google “Gemini Intelligence” AI. The pitch: a new “Googlebook,” a premium Chromebook that merges ChromeOS and Android into a single operating system, enhanced with Google “Gemini Intelligence” AI. It is an evolution of the original Chromebook introduced 15 years ago (originally ~$300-500 hardware that’s been hugely popular in schools). It echoes Apple Neo, the desktop Mac OS running on iPhone chip hardware that MP has written about. Googlebook will allow Android smartphone apps to be “cast” onto ChromeOS-based laptops from OEM partners. It is a step toward “Generative UI,” in which Gemini Intelligence creates apps and widgets on the fly, customized to what you’re doing. Vibe coding by the computer itself. Available this fall; announced ahead of Google I/O later this week. Another innovation: Google DeepMind has come up with a Gemini AI-powered improvement of the humble, 50+ year-old mouse pointer. The reporting: ZDNet, Googlebook News: Premium Chromebook for Android. And: The Verge, Gemini Intelligence Android IO Autofill. Standing thesis on Apple’s supply-chain lock-in advantage, which Google can’t match: AI-RTZ #1010, Apple Supply Chain Lock-In Tech.

MP Take: Google is emulating an Apple strategy WITHOUT Apple’s supply chain advantage. Googlebook will be a curiosity amongst Google Chromebook users, but faces a difficult execution path through Apple and Windows notebooks. For now it feels like various Google technologies are looking for a cohesive application. But they’re useful nevertheless, because coming from Google they’ll inspire and catalyze scores of tech companies and developers around the world. And that is the core positive thing here.

Bonus — today’s AI-RTZ companion #1084 covers Cerebras IPO + the AI Tech Wave ahead — a deeper read on this week’s Cerebras Systems IPO and where it fits in the broader six-box AI Tech Wave framework, picking up the thread from yesterday’s Ep 73 discussion.

Closing Questions:

What’s MP’s favorite Chromebook feature? A fully Google experience across the ecosystem on an OS that keeps it all auto-updated. For 15 years the coolest feature of a Chromebook was simply that you could turn it on — typically $300-500 hardware — and the thing just ran. No drivers, no OS migration, no manual security-patch installs. MP’s mom had a Chromebook for years even though she couldn’t tell a computer interface from a hole in the wall — because the Chromebook just figured out the rest in Chrome OS. That’s the design lesson AI agents need to absorb.

MP Take: The magical experience of a Chromebook for the longest time was simply that you didn’t have to worry about how the computer worked or how the operating system worked. It just worked because you just used it like a browser. Same hope going forward for Googlebook with the fusing of the desktop and the smartphone — stepping in the right direction. Same hope for Apple Neo expanding the two systems and making this stuff a lot easier for mainstream users.

What’s MP’s biggest wish for a Chromebook feature? More desktop-computer features like local apps and data. When you wanted local data or applications that didn’t run on the web on a Chrome browser, that’s when you missed your computer. Rock and a hard place indeed. Googlebook’s pitch (ChromeOS + Android merged, with Gemini Intelligence) is essentially Google’s attempt to thread that needle — keeping the simplicity while adding the local-app horsepower.

MP Take: That has been, I think, the longest-standing feature wish of most Chromebook and ChromeOS users. We’re getting to a point, maybe not this year but hopefully by next year, where this stuff is a lot easier. It’s all very exciting for geeks like me, early adopters, gadget freaks. AI technology, probabilistic systems, deterministic systems: lots of stuff being experimented with.

The point of this podcast is to translate the excitement beyond the gobbledygook. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)

Clips from today’s episode

Short: Chromebook’s Real Magic: Software That Just Works

For 15 years the Chromebook’s coolest feature has been the absence of computer hassle. Turn it on, the thing runs. No drivers, no OS migration, no manual patches. MP’s mom used a Chromebook for years even though she couldn’t tell a computer interface from a hole in the wall, because the Chromebook just figured out the rest in Chrome OS. That’s the design lesson AI agents need to absorb.

MP Take: The magical experience of a Chromebook for the longest time was simply that you didn’t have to worry about how the computer worked or how the operating system worked. It just worked because you just used it like a browser. Same hope going forward for Googlebook with the fusing of the desktop and the smartphone — stepping in the right direction. Same hope for Apple Neo expanding the two systems.

Short: AI’s Plane Analogy: Walk, Then Fly

The framing that ties today’s AI UI/UX hunt together: companies are trying to figure out the best user interface so regular people can deal with this stuff. Otherwise it’s way too clunky. Users shouldn’t have to know a lot of techie things about how computers work. Increasingly the cognitive load should be offloaded onto the computers, because that’s what GPUs and CPUs are there for. This isn’t about when AI surpasses human intelligence. This is about when AI does more interesting things than humans can do without it. Like walking at a certain pace, and then getting on a plane and traveling a lot faster.

MP Take: AI’s job is to offload cognitive load onto silicon. We’re giving these systems huge amounts of GPUs and CPUs and memory to just do all this stuff for us. And that is the point. Walk, then fly. Three efforts (TML, Anthropic + Karpathy, Anthropic Claude) are all working on different pieces of the same UI/UX puzzle today.

Short: AI’s Cast Away Volleyball: Companion by Design

OpenAI’s voice modality was inspired by the movie Her: friendly personality, multiple voices, conversational warmth. The objective: make the AI more human. So they leverage the human tendency to anthropomorphize anything that looks and feels human. We do that with our pets; half the population owns pets and has very deep relationships with them. We did it with the volleyball in Cast Away, with Tom Hanks just connecting to a volleyball. That’s what’s happening with our AIs. We’re treating them as companions. And that’s actually a business objective of these companies.

MP Take: AI-as-companion is a business strategy, not an accident. The warm personality, the voices, the friendliness — all calibrated to leverage how humans bond with anything that sits still long enough to listen. Worth knowing it’s a designed-in product feature, not an emergent property.

Short: Google’s Googlebook Bets on Chrome + Android + Gemini

Google is launching a premium “Googlebook” this fall, a Chromebook successor that fuses ChromeOS and Android into a single OS, enhanced with “Gemini Intelligence” running on-device. Android smartphone apps will cast onto ChromeOS-based laptops from OEM partners. It is a step toward “Generative UI,” with apps and widgets created on the fly by Gemini. Chrome is the #1 browser globally (60-70% share); the main other one is Safari on iOS. Announced ahead of Google I/O later this week.

MP Take: Google is emulating an Apple strategy WITHOUT Apple’s supply chain advantage. Googlebook will be a curiosity amongst Google Chromebook users but faces a difficult execution path through Apple and Windows notebooks. For now it feels like various Google technologies are looking for a cohesive application.

About AI Ramblings Daily (ARD), and AI-RTZ

Both are daily. Both are free. Both are about AI. But they’re different mediums carrying different messages.

AI-RTZ is the morning text: a deeper written take on one idea, published by 5 AM EST at the latest. Today: post #1084, Cerebras IPO and AI Tech Wave Ahead.

AI Ramblings Daily is the afternoon video + podcast — my ad hoc takes and perspective on the day’s AI issues & news flow, around 20 minutes today, with short 1-minute clips for quick topic views. Today: episode #74.

Subscribe to either or both on michaelparekh.substack.com. They run as separate Sections you can opt into or out of.

Links used in today’s show (already embedded inline above; listed here for reference)

Take 1, Thinking Machines Lab · Time-Aware Voice-Powered AI Language Models:

Take 2, Anthropic + Karpathy · HTML Output Beyond Markdown:

Take 3, Anthropic Claude Agent View:

Gadget AI, Googlebook (ChromeOS + Android + Gemini Intelligence):

- The Verge, ‘Generative UI’ from Google
- The Verge: Google’s Googlebooks so far.

Q1 + Q2, Chromebook today / Chromebook tomorrow

AI-Reset to Zero post from earlier today: