AI: Limiting AI with human intelligence in the mirror. AI-RTZ #1082

The Bigger Picture, Sunday, May 10, 2026

One of my fiercest beliefs for years here at AI-RTZ and on the AI Ramblings podcast (ARD) is the critical need, in these early days of the AI Tech Wave, to minimize anthropomorphizing AIs. It’s understandable that the leading LLM AI companies want to emphasize the ‘warmer’ human aspects of AI to sell their wares. But the Bigger Picture I’d like to discuss today is that this potentially limits the possibilities of machine ‘intelligence’ ahead. And shrouds it in premature fear and dread.

To put it in simpler words I’ve written fervently about, our AI community in particular needs to stop humanizing our current work and progress with LLM AIs. And to stop looking at the future of AI with human intelligence in the mirror.

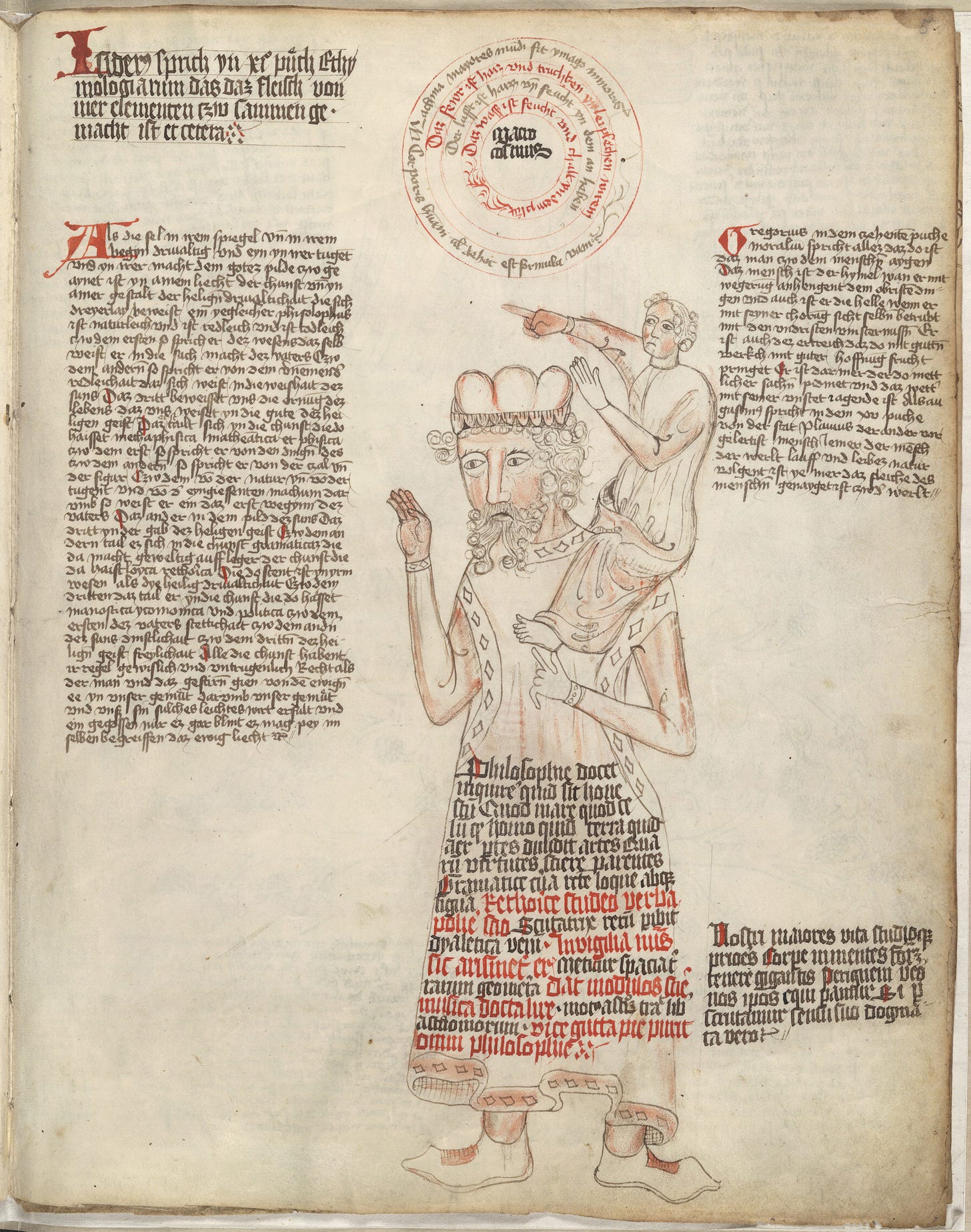

To be sure, I admire the capabilities of Generative AI to consume vast amounts of content and point to possible probabilistic meanings. OTSOG for machines as I’ve written with firm conviction. Also known as ‘On the Shoulders of Giants’, made famous by Isaac Newton.

The founders and chief AI scientists at these AI companies seem to believe in the humanizing aspects of AI at their core. And that’s something to unpack again this Sunday, along with its possible implications.

Wired brings up the issue front and center in “I Am Begging AI Companies to Stop Naming Features After Human Processes”:

“Anthropic announced “dreaming” for AI agents to sort through “memories” at its developer conference. Can we not?”

“Anthropic just announced a new feature called “dreaming” at the company’s developer conference in San Francisco. It’s part of Anthropic’s recently launched AI agent infrastructure designed to help users manage and deploy tools that automate software processes. This “dreaming” aspect sorts through the transcript of what an agent recently completed and attempts to glean insights to improve the agent’s performance.”

“Folks using AI agents often send them on multistep journeys, like visiting a few websites or reading multiple files, to complete online tasks. This new “dreaming” feature allows agents to look for patterns in their activity log and improve their abilities based on those insights.”

To be sure, we’ve been doing this in earnest from the earliest days of AI, pitting machines against humans in games from chess to Go and everything in between. A computer/human gladiator match from the beginning (Move 37 inclusive).

Then the author, Reece Rogers, gets to the crux of the matter: the sci-fi inspiration for all this ‘humanizing’ that’s already gotten us all so tangled up about AI:

“The feature’s name immediately calls to mind Philip K. Dick’s seminal sci-fi novel, Do Androids Dream of Electric Sheep?, which explores the qualities that truly separate humans from powerful machines. While our current generative AI tools come nowhere close to the machines in the book, I’m ready to draw the line right here, right now: No more generative AI features with names that rip off human cognitive processes.”

It’s a pretty clear line from there to the terrific Blade Runner movies and their question of what separates ‘replicants’ from humans. It’s a trope sci-fi writers love scaring us with, all the way to the Terminator and Skynet. And from there to the feverish AGI doomer fears that have been tearing apart AI for over a decade now.

Anthropic of course is trying to add memory to our AI computer systems, a good thing in and of itself.

““Together, memory and dreaming form a robust memory system for self-improving agents,” reads Anthropic’s blog post about the launch of this research preview for developers. “Memory lets each agent capture what it learns as it works. Dreaming refines that memory between sessions, pulling shared learnings across agents and keeping it up-to-date.”

But the core problem is that the process of AI innovation has deeply ingrained this humanizing trend in AI computer scientists themselves.

“Since the spark of the chatbot revolution in 2022, leaders at AI companies have gone full tilt into naming aspects of generative AI tools after what goes on in the human brain. OpenAI released its first “reasoning” model in 2024, where the chatbot needed “thinking” time. The company described this release at the time as “a new series of AI models designed to spend more time thinking before they respond.” Numerous startups also refer to their chatbots as having “memories” about the user. Rather than the fast storage that’s typically referred to as a computer’s “memories,” these are much more humanlike nuggets of information: He lives in San Francisco, enjoys afternoon baseball games, and hates eating cantaloupe.”

And it’s of course evolved into marketing, where AI, via AGI and ‘superintelligence’, is poised to surpass humans in thought. That’s something we never worried about in the same way with cars, planes and rockets surpassing humans in motion.

“It’s a consistent marketing approach used by AI leaders, who have continued to lean into branding that blurs the line between what humans do and what machines can. Even the ways these companies develop chatbots, like Claude, with distinct “personalities,” can make users feel as if they are talking with something that has the potential for a deep inner life, something that would potentially have dreams even when my laptop is closed.”

The company by its own admission revels in this humanizing:

“At Anthropic, this anthropomorphizing runs deeper than just marketing strategies. “We also discuss Claude in terms normally reserved for humans (e.g., ‘virtue,’ ‘wisdom’),” reads a portion of Anthropic’s constitution describing how it wants Claude to behave. “We do this because we expect Claude’s reasoning to draw on human concepts by default, given the role of human text in Claude’s training; and we think encouraging Claude to embrace certain humanlike qualities may be actively desirable.” The company even employs a resident philosopher to try to make sense of the bot’s “values.”

I agree with the author that this goes way beyond wordsmithing.

“And this isn’t just me being nitpicky about wording. How we talk about these machines impacts what we think they can achieve. “As a fallacy, anthropomorphism is shown to distort moral judgments about AI, such as those concerning its moral character and status, as well as judgments of responsibility and trust,” reads a research paper published in the AI & Ethics journal. By not using more distanced language about bots, users run the risk of overly trusting the tools and projecting qualities onto them that aren’t really there.”

And the author urges AI leaders to spend time thinking more deeply about the source material:

“Much like our AI overlords need to spend more time actually watching the sci-fi movies they allude to, I think the powerful people leading these companies should spend more time reading these classic sci-fi novels as well.”

“Near the end of Dick’s book, the protagonist returns to his apartment with a rare toad he is convinced is a living animal, until his wife proves it’s just a machine by flipping open the control panel. “Crestfallen, he gazed mutely at the false animal; he took it back from her, fiddled with the legs as if baffled—he did not seem quite to understand,” reads a passage from the novel. Similarly, tech leaders seem to be unable, or at least unwilling, to accept the limitations of their own inhuman tools.”

I’d actually go the other way too. Just using these AI technologies deeply and daily for years now gives a quick reminder every time of how much closer these systems are to plain computer systems than to anything human.

I’d ask readers to just look at my two recent pieces on using the latest ‘AI Agents’ from Anthropic, OpenAI and others to get a feel for this reality.

Also to be clear, I don’t bring up the need for a sharp line between machine and human intelligence, or object to anthropomorphizing terms, on the assumption that humans are the ultimate examples of intelligence. Just that the two are fundamentally DIFFERENT.

Just like walking is to flying. And by focusing on human locomotion we likely would have missed jet engines and rockets and much more.

We are currently stuck on definitional debates around intelligence, much as physicists once debated whether light is a particle or a wave. It took those rudimentary double-slit experiments with light, and a whole lot of theoretical and empirical work, to open amazing new directions in understanding the universe. Far more than the light that human eyes, with their limitations, could see.

It redefined our understanding of light. Much in the way today’s rudimentary machines are helping us push on what we think of as ‘intelligence’.

There is another metaphor that can be used as well. Without humanizing or anthropomorphizing AI.

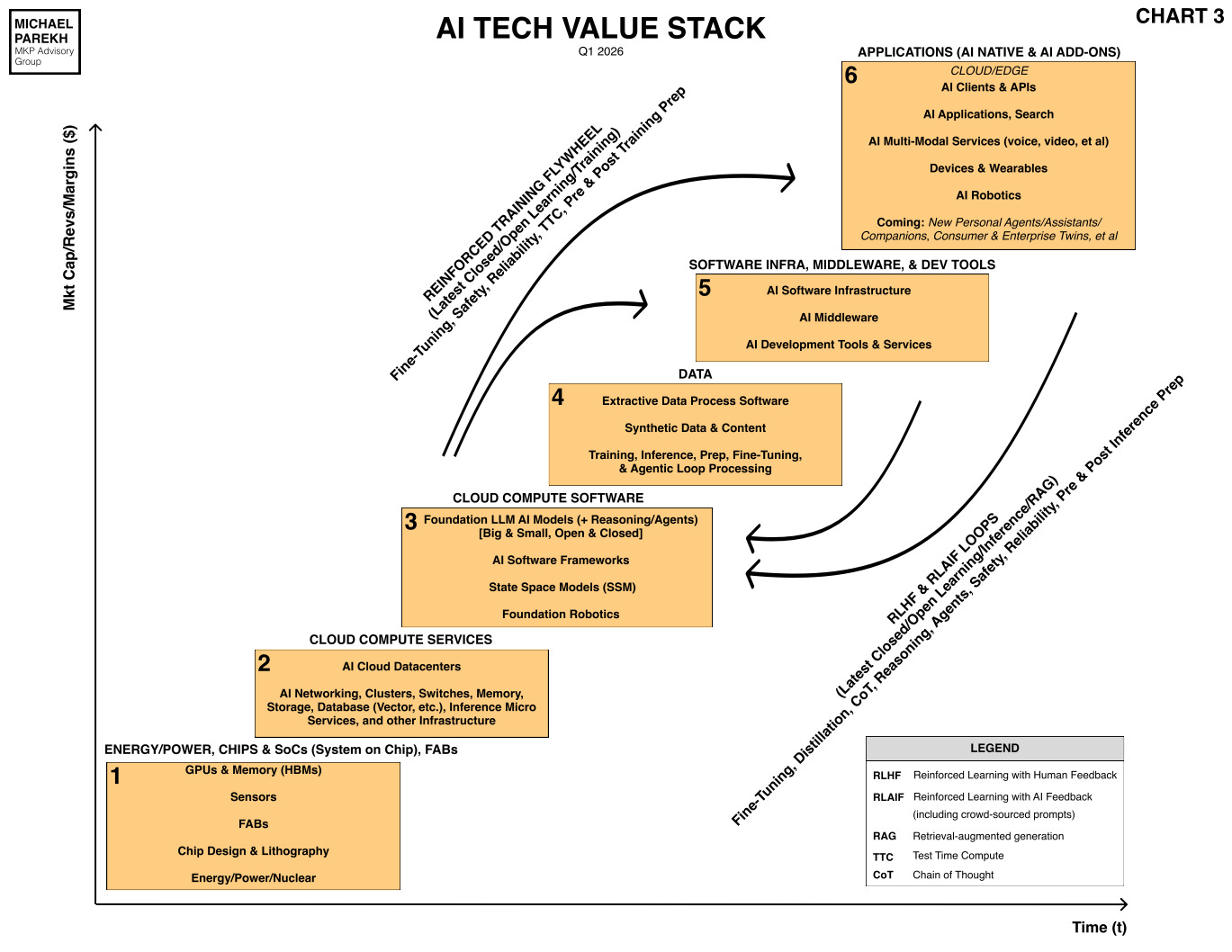

Our LLM AI systems are remarkable in how much further they can take us in processing our data and information (Box no. 4, ‘Data’, in the tech stack below).

In much the same way, the Wright brothers’ initial successes in 1903 were remarkable in redefining human motion. And it’s a long way from there to the Parker Solar Probe (launched in 2018), the fastest human-made object ever built, 114+ years later. Zooming around the Sun at speeds of over 395,000 mph (635,000 km/h). We can revel in that accomplishment without fretting about that machine replacing humans.

In a similar way, we may be limiting our AI research by using words like ‘dreaming’, which applies to humans far more than to machines. Even when they’re operating (note, not ‘thinking’) probabilistically. Much less the hybrid deterministic/probabilistic computing systems to come.

In the meantime, let’s hit the pause button on dreaming about AIs dreaming.

And see if we can see farther on the shoulders of the power-grunting machines. And do more giant work as humans with them vs. against them. Taking OTSOG in a different direction altogether.

Without any anthropomorphizing or humanizing AIs at all. For that, we can always get a dog. Or a cat. As over half of us do for companionship and happiness.

That is the Bigger Picture I’d like to leave you with at this stage of the AI Tech Wave. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)