AI: Meta surfing to AI success. RTZ #527

Meta reported a strong quarter with continued AI momentum, growing its ‘Meta AI’ assistant against OpenAI’s ChatGPT and other competitors. There was momentum both in terms of further AI capex investments (almost $40 billion in 2024), and in terms of users of its AI products like ‘Meta AI’ (over 500 million of Meta’s 4 billion plus users of Instagram, Facebook, Messenger & WhatsApp).

There was also momentum in AI tools for its advertisers across its multi-billion global user base. That in itself is a tale worth understanding, and I highly recommend Stratechery’s telling of that tale in ‘Meta’s AI Abundance’.

Meta continues to be the leading provider of open source LLM AI models, with its Llama 3+ models in various sizes. That itself is a big opportunity for Meta to expand AWS-type opportunities across AI data centers run by Amazon AWS, Microsoft Azure, Google Cloud and many more. It’s a strategy option I’ve discussed at length.

Of course investors continue to wait for evidence of returns from the ongoing AI investments by big tech companies, a topic I’ve covered at length in these pages. And my view continues to be that these returns will lag the investments, but the investments are for now a critical line item for all of these companies to Scale AI to its ultimate possibilities.

There are a lot of Meta items to digest in the paragraphs above, including the links I’ve included.

Collectively they tell a tale of ample unique AI opportunities for Meta ahead, differentiated from most of its peers and competitors. But none of it will be possible without Meta’s laser focus on the AI Compute infrastructure needed, with the AI Table Stakes for all big tech driving everyone to AI superclusters of 100,000 and more AI GPUs. Most of them from Nvidia of course.

These come with massively scaled multi-Gigawatt Power requirements, Nuclear or otherwise. Those AI GPU superclusters are what I’d like to focus on for the rest of this piece.

As expected, Meta founder/CEO Mark Zuckerberg remains laser-focused here. He continues to talk about building out Meta’s AI Infrastructure in terms of GPU superclusters to train, build and scale out its next generation LLM AI models. Far beyond version 3 of Llama today. As Wired explains in “Meta’s Next Llama AI Models Are Training on a GPU Cluster ‘Bigger Than Anything’ Else”:

“Meta CEO Mark Zuckerberg laid down the newest marker in generative AI training on Wednesday, saying that the next major release of the company’s Llama model is being trained on a cluster of GPUs that’s “bigger than anything” else that’s been reported.”

“Llama 4 development is well underway, Zuckerberg told investors and analysts on an earnings call, with an initial launch expected early next year. “We’re training the Llama 4 models on a cluster that is bigger than 100,000 H100s, or bigger than anything that I’ve seen reported for what others are doing,” Zuckerberg said, referring to the Nvidia chips popular for training AI systems. “I expect that the smaller Llama 4 models will be ready first.”

This business of Scaling AI with user Trust is of course the crux of the multi-hundred billion dollar race by multiple multi-trillion dollar market cap companies to ‘Scale AI’ at a pace that is multiples of tech’s venerable ‘Moore’s Law’, which has held for over half a century:

“Increasing the scale of AI training with more computing power and data is widely believed to be key to developing significantly more capable AI models. While Meta appears to have the lead now, most of the big players in the field are likely working toward using compute clusters with more than 100,000 advanced chips. In March, Meta and Nvidia shared details about clusters of about 25,000 H100s that were used to develop Llama 3. In July, Elon Musk touted his xAI venture having worked with X and Nvidia to set up 100,000 H100s. “It’s the most powerful AI training cluster in the world!” Musk wrote on X at the time.”

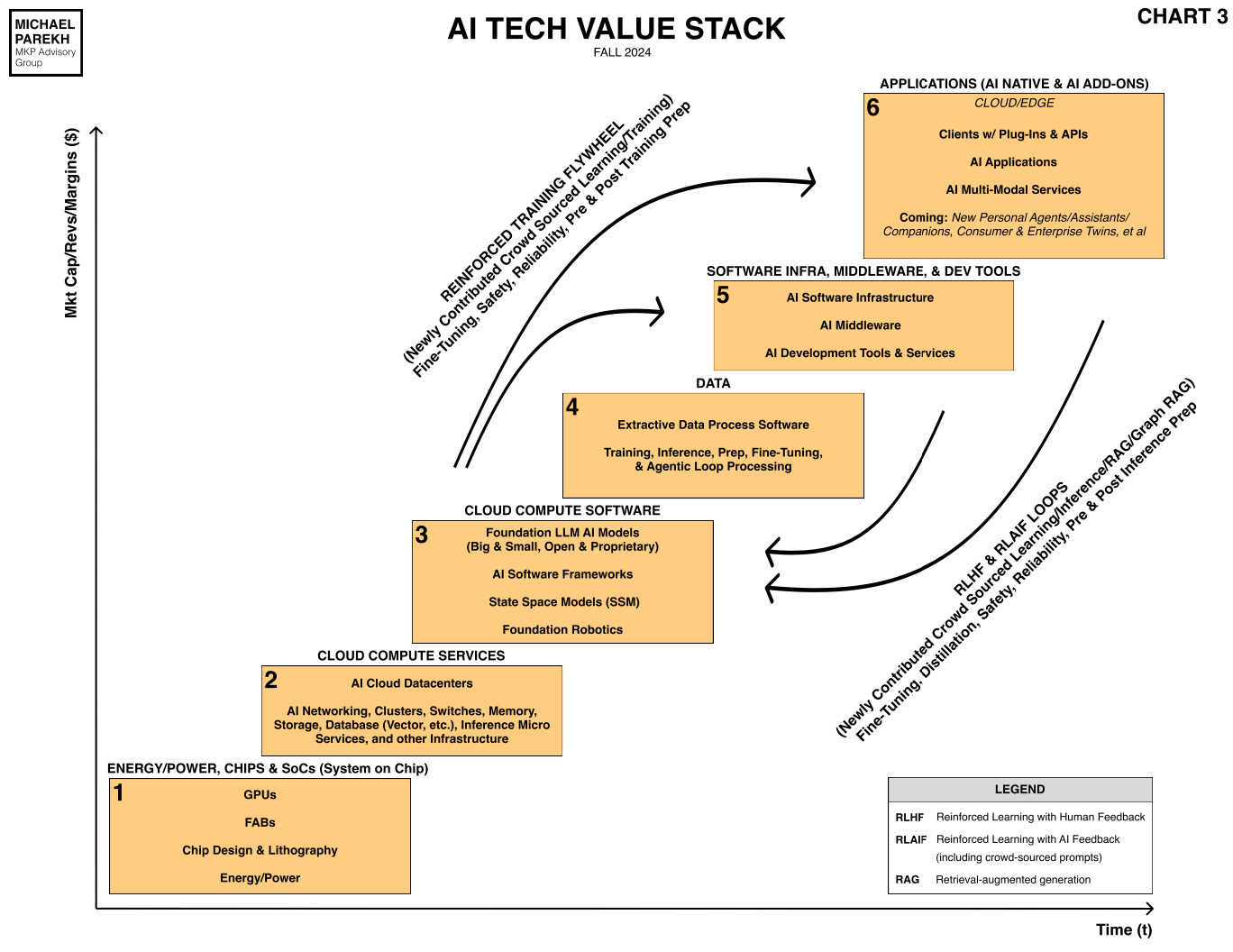

And of course, the use for these AI superclusters goes far beyond the basic training and inference loops that have driven LLM AIs in the AI Tech Stack chart below:

“On Wednesday, Zuckerberg declined to offer details on Llama 4’s potential advanced capabilities but vaguely referred to “new modalities,” “stronger reasoning,” and “much faster.”

And as I’ve outlined before, Meta’s approach is very different from that of its peers:

“Meta’s approach to AI is proving a wild card in the corporate race for dominance. Llama models can be downloaded in their entirety for free in contrast to the models developed by OpenAI, Google, and most other major companies, which can be accessed only through an API. Llama has proven hugely popular with startups and researchers looking to have complete control over their models, data, and compute costs.”

“Although touted as “open source” by Meta, the Llama license does impose some restrictions on the model’s commercial use. Meta also does not disclose details of the models’ training, which limits outsiders’ ability to probe how it works. The company released the first version of Llama in July of 2023 and made the latest version, Llama 3.2, available this September.”

And of course Meta faces the same challenges as any of its competitors in terms of the scope of these data centers and their power requirements:

“Managing such a gargantuan array of chips to develop Llama 4 is likely to present unique engineering challenges and require vast amounts of energy. Meta executives on Wednesday sidestepped an analyst question about energy access constraints in parts of the US that have hampered companies’ efforts to develop more powerful AI.”

“According to one estimate, a cluster of 100,000 H100 chips would require 150 megawatts of power. The largest national lab supercomputer in the United States, El Capitan, by contrast requires 30 megawatts of power. Meta expects to spend as much as $40 billion in capital this year to furnish data centers and other infrastructure, an increase of more than 42 percent from 2023. The company expects even more torrid growth in that spending next year.”
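To put the numbers in that quote in perspective, here is a quick back-of-envelope sketch in Python. It uses only the figures quoted above; the variable names are mine, and it assumes the "more than 42 percent" increase is measured against the $40 billion upper end of the range, so the 2023 figure it derives is a rough upper bound rather than a reported number.

```python
# Back-of-envelope arithmetic using only the figures quoted above.
CLUSTER_GPUS = 100_000   # H100 chips in the hypothetical cluster
CLUSTER_POWER_MW = 150   # estimated power draw for that cluster
EL_CAPITAN_MW = 30       # quoted power draw for El Capitan
CAPEX_2024_BN = 40       # upper end of Meta's expected 2024 capex, in $ billions
CAPEX_GROWTH = 0.42      # "more than 42 percent" increase from 2023

# Average power per GPU implied by the estimate (whatever overhead the estimate includes).
kw_per_gpu = CLUSTER_POWER_MW * 1000 / CLUSTER_GPUS

# How many El Capitans' worth of power one such cluster would draw.
power_ratio = CLUSTER_POWER_MW / EL_CAPITAN_MW

# Assumption: the 42% growth applies to the $40B figure, so this is an upper bound on 2023 capex.
implied_2023_capex_bn = CAPEX_2024_BN / (1 + CAPEX_GROWTH)

print(f"{kw_per_gpu:.1f} kW per GPU")                      # 1.5 kW per GPU
print(f"{power_ratio:.0f}x El Capitan")                    # 5x El Capitan
print(f"~${implied_2023_capex_bn:.1f}B implied for 2023")  # ~$28.2B implied for 2023
```

In other words, the estimate works out to about 1.5 kilowatts per GPU, five times the power of the largest US national lab supercomputer, and an implied 2023 capex base of roughly $28 billion.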

But Meta, like Google as I described yesterday, has ample resources to invest from its core advertising businesses:

“Meta’s total operating costs have grown about 9 percent this year. But overall sales—largely from ads—have surged more than 22 percent, leaving the company with fatter margins and larger profits even as it pours billions of dollars into the Llama efforts.”

And of course all the other hyperscalers are intensely focused on their next generation LLM AI models:

“Meanwhile, OpenAI, considered the current leader in developing cutting-edge AI, is burning through cash despite charging developers for access to its models. What for now remains a nonprofit venture has said that it is training GPT-5, a successor to the model that currently powers ChatGPT. OpenAI has said that GPT-5 will be larger than its predecessor, but it has not said anything about the computer cluster it is using for training. OpenAI has also said that in addition to scale, GPT-5 will incorporate other innovations, including a recently developed approach to reasoning.”

“CEO Sam Altman has said that GPT-5 will be “a significant leap forward” compared to its predecessor. Last week, Altman responded to a news report stating that OpenAI’s next frontier model would be released by December by writing on X, “fake news out of control.””

“On Tuesday, Google CEO Sundar Pichai said the company’s newest version of the Gemini family of generative AI models is in development.”

Of course the open vs closed LLM AI approaches remain debated, a distinction I view as one without much difference as the technologies blur over time:

“Meta’s open approach to AI has at times proven controversial. Some AI experts worry that making significantly more powerful AI models freely available could be dangerous because it could help criminals launch cyberattacks or automate the design of chemical or biological weapons. Although Llama is fine-tuned prior to its release to restrict misbehavior, it is relatively trivial to remove these restrictions.”

“Zuckerberg remains bullish about the open source strategy, even as Google and OpenAI push proprietary systems. “It seems pretty clear to me that open source will be the most cost effective, customizable, trustworthy, performant, and easiest to use option that is available to developers,” he said on Wednesday. “And I am proud that Llama is leading the way on this.”

And Meta has its own approach and plans for AI across its services:

“Zuckerberg added that the new capabilities of Llama 4 should be able to power a wider range of features across Meta services. Today, the signature offering based on Llama models is the ChatGPT-like chatbot known as Meta AI that’s available in Facebook, Instagram, WhatsApp, and other apps.”

“Over 500 million people monthly use Meta AI, Zuckerberg said. Over time, Meta expects to generate revenue through ads in the feature. “There will be a broadening set of queries that people use it for, and the monetization opportunities will exist over time as we get there,” Meta CFO Susan Li said on Wednesday’s call. With the potential for revenue from ads, Meta just might be able to pull off subsidizing Llama for everyone else.”

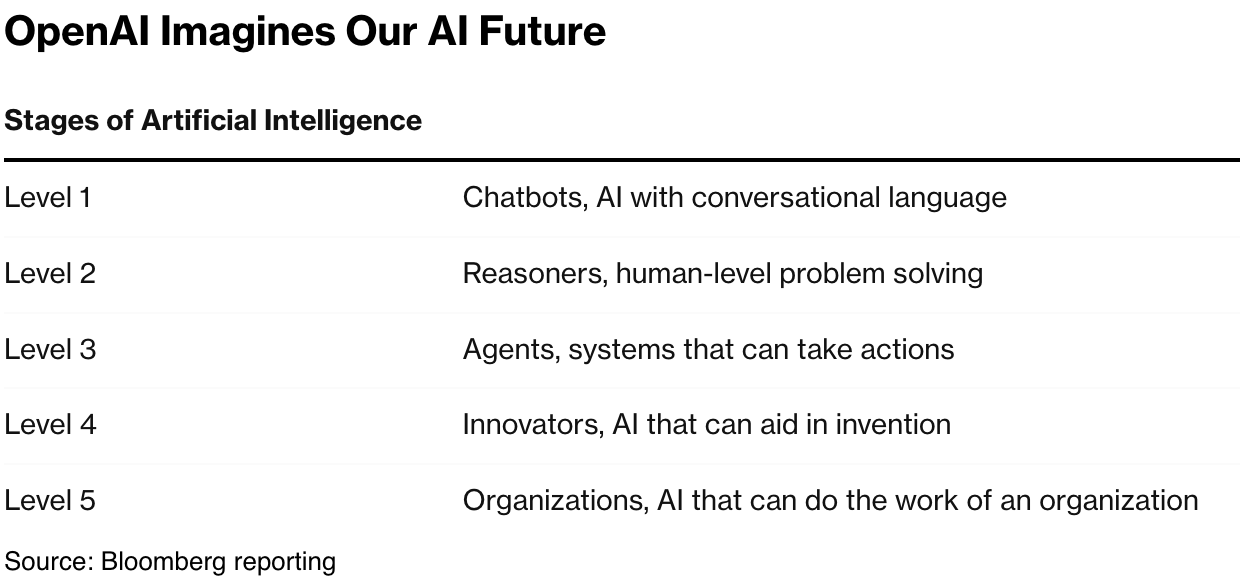

Everyone including Meta is following the OpenAI roadmap to AGI below:

Overall, Meta remains uniquely positioned in this AI Tech Wave relative to its peers. And in these early days, there is room enough for multiple players large and small to bring unique AI products and services to mainstream users at Scale. For now, ‘Zuck’ continues to ‘Surf On’.

It may take more investment and time than preferred, but the road ahead is set in terms of key AI Infrastructure inputs. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)