AI: Microsoft embeds AI deep into Windows OS. RTZ #364

A few days ago, my friend Ram Ahluwalia and I were discussing how operating systems like Microsoft Windows and Apple macOS/iOS/iPadOS could eventually be optimized for Generative/LLM AI, and what that would look like. This week, Microsoft started showing us what could be done to start fusing its AI Copilot with the Windows operating system and the application layers, with ‘AI Copilot PCs’. I wrote about that yesterday in “Microsoft AI ‘Copilot PCs’ now to make Apple dance”.

Today, let’s discuss the AI that Microsoft is deeply embedding into Windows for the year to come, a key part of what it announced at its Build conference today. We’ll also look at how Apple is likely to announce similar moves at its upcoming WWDC developer conference on June 10, and why it all matters for the kinds of AI apps and services that billions of mainstream users are about to try and use soon.

First, let me unpack the broader conversation. A few days ago, Ram Ahluwalia of Lumida Wealth, in an almost two-hour YouTube podcast with me on the subject of “Winners & Losers in the AI Race”, asked at the 55-minute mark:

“RAM: But do we really need an operating system in the way, as we conceive it today. You know, why isn’t, or when will someone re-conceive the operating system, that’s AI first? Is that a risk to Microsoft?”

“ME: I would argue that ‘Windows is still Windows’. But because you have so much AI specialization at the application layer for now. But Microsoft is focusing on it. Apple is focusing on it. Anything they can augment or advance through AI functionality in their operating system layer, kernels, etc., they will definitely do”.

You can watch that discussion at the 55-minute mark, or the whole thing here.

Turns out, Microsoft has already been hard at work on AI deep in its operating systems and chips, as have chip partners like Qualcomm.

Today at Microsoft’s Build conference for developers, a day after the big ‘Copilot+ PCs’ splash I wrote about yesterday, Microsoft walked through a whole series of AI initiatives in which over 40 small local AI ‘LLMs’, or SLMs (‘small language models’), are deeply embedded into an ‘AI-rebuilt Windows 11’.

Additionally, these operating-system-level AI LLMs are, in many cases, deeply enmeshed with specialized chips in the hardware of these new ‘AI PCs’, most specifically the new Qualcomm Snapdragon X Elite chips and the NPUs (Neural Processing Units) in these PCs, running over 40 trillion operations per second, or TOPS. That’s likely the next metric we’ll be tracking when we buy our next PCs and laptops.

One interesting announcement among many was what Microsoft is planning to do with its Phi-3 small language model: tuning a version of it for the silicon in Copilot+ PCs. As VentureBeat outlines in “Microsoft introduces Phi-Silica, a 3.3 billion parameter model made for Copilot+PC NPUs”:

“Microsoft is making more investments in the development of small language models (SLMs). At its Build developer conference, the company announced the general availability of its Phi-3 models and previewed Phi-3-vision. However, on the heels of Microsoft’s Copilot+ PC news, it’s introducing an SLM built specifically for these device’s powerful Neural Processing Units (NPUs).”

“A Microsoft spokesperson tells VentureBeat that what differentiates Phi-Silica is “its distinction as Windows’ inaugural locally deployed language model. It is optimized to run on Copilot + PCs NPU, bringing lightning-fast local inferencing to your device. This milestone marks a pivotal moment in bringing advanced AI directly to 3P developers optimized for Windows to begin building incredible 1P & 3P experiences that will, this fall, come to end users, elevating productivity and accessibility within the Windows ecosystem.”

“Phi-3-Silica will be embedded in all Copilot+ PCs when they go on sale starting in June. It’s the smallest of all the Phi models, with 3.3 billion parameters.”

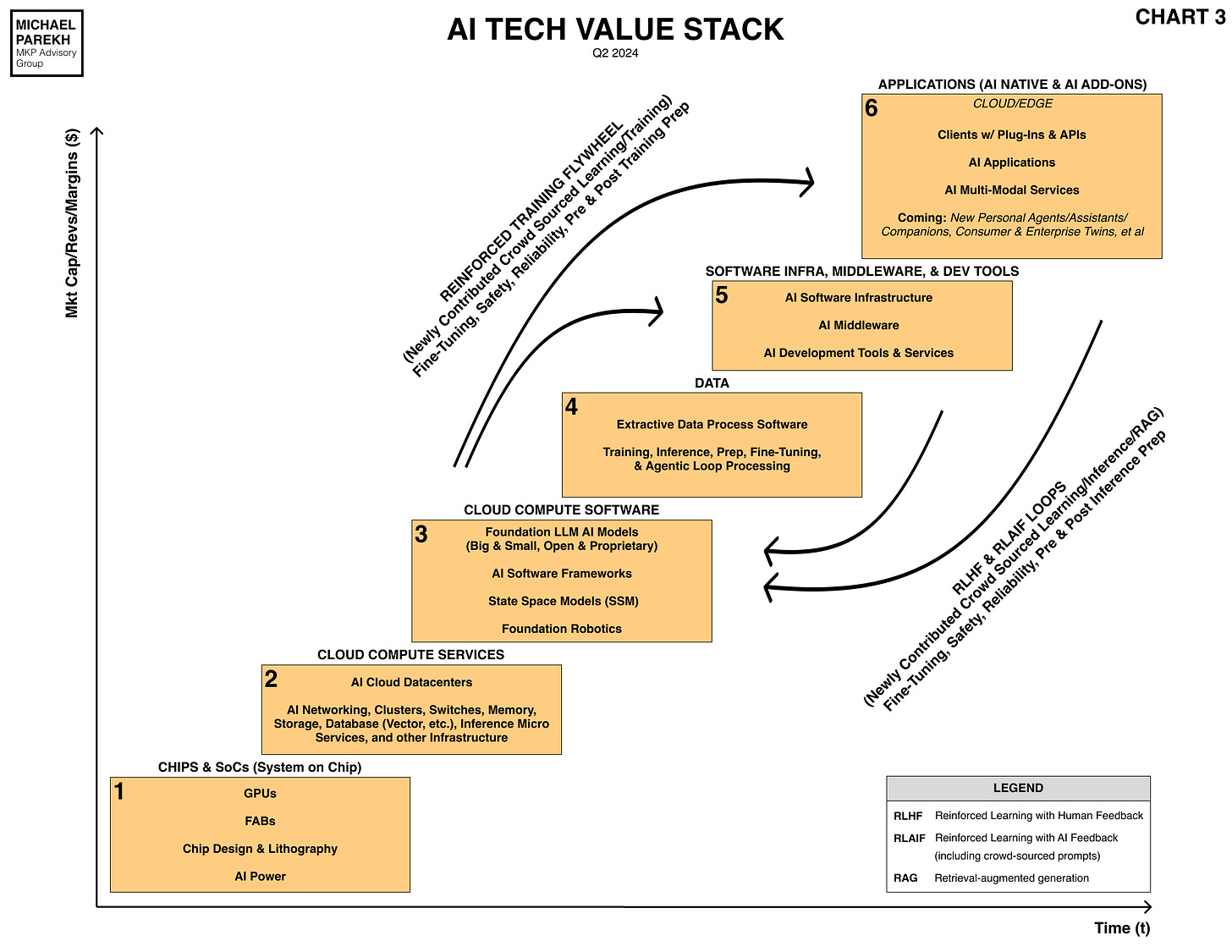

That’s a lot of deep technical detail on the promise and possibilities of AI becoming deeply built into our PCs, their operating systems, and the applications above them. This is the stuff in Box 6 below in the AI Tech Stack.

These 40 LLM AI models and the above Phi-3 ‘Silica’ SLM will be embedded in every one of the millions of new ‘AI PCs’ to be sold over the coming year by Microsoft through its Surface brand, and by its PC OEM partners like Dell, HP, Lenovo, Acer, Asus, Samsung and many others. Indeed, Yusuf Mehdi, Microsoft’s point person on these AI Windows and hardware initiatives, made a point of noting that over 50 million of these ‘AI PCs’ are likely to be sold over the next twelve months.

For regular folks, it means useful AI features that haven’t yet been imagined and built, stuff we’ll get to try soon. That’s especially true once Apple shows us its own AI plans at WWDC on June 10, after having been hard at work on its AI strategy for months now.

It’s going to be an interesting stretch for AI deep in the operating system and application layers, this year and next, with AI running on inference chips designed for local devices at the edge. Developers worldwide will build a lot of cool things on top of these AI operating system embeddings from Microsoft, Apple, and many others in the coming years.

It won’t all happen at once, and likely will take longer than we’d all like. But it’ll be worth the wait in the AI Wave, just like it was in prior tech waves.

Ram of Lumida Wealth was on the right track in our podcast discussion above. Much more to come in these early days of the AI Tech Wave indeed. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here.)