AI: Peak AI Fears over economic and other bombs. RTZ #1012

The Bigger Picture, Sunday, March 1, 2026

This week’s AI news gave me reason to bring up one of the most unexpected charts (from last year), from an unexpected source, that I’ve seen in my career as a tech analyst, across over three decades of tech waves up to and including this AI Tech Wave. It’s on a topic top of mind for us all: the fears over AI. Most recently, fears over AI’s impact on software and other industry verticals. Not to mention on CEOs across the country. And now in US Pentagon politics. All of which makes the cut for this Sunday’s Bigger Picture. Let’s unpack.

The focus here is on the two core fears of AI to date that I’ve discussed extensively: the ‘doomer’ existential implications of AGI/Superintelligence/Singularity for global humanity, and the potentially accelerated and cataclysmic job losses across white- and blue-collar work, as AI moves from office work to robot and self-driving-car work in the physical world.

The broader existential fears of AI, which I’ve discussed here for a long time, continue. Note that these are different from the fears over AI’s impact on jobs and the economy, which I’ve also discussed before. The most recent events brought to mind a memorable chart from the Federal Reserve Bank of Dallas from last year.

The paper itself is almost a year old, and carries the cheery and nondescript title “Advances in AI will boost productivity, living standards over time”:

“Artificial intelligence (AI), like many technologies before it, offers the potential to improve people’s living standards. Such advances can be approximated by changes in gross domestic product (GDP) per capita over time—the rate of change in the amount of output per person.”

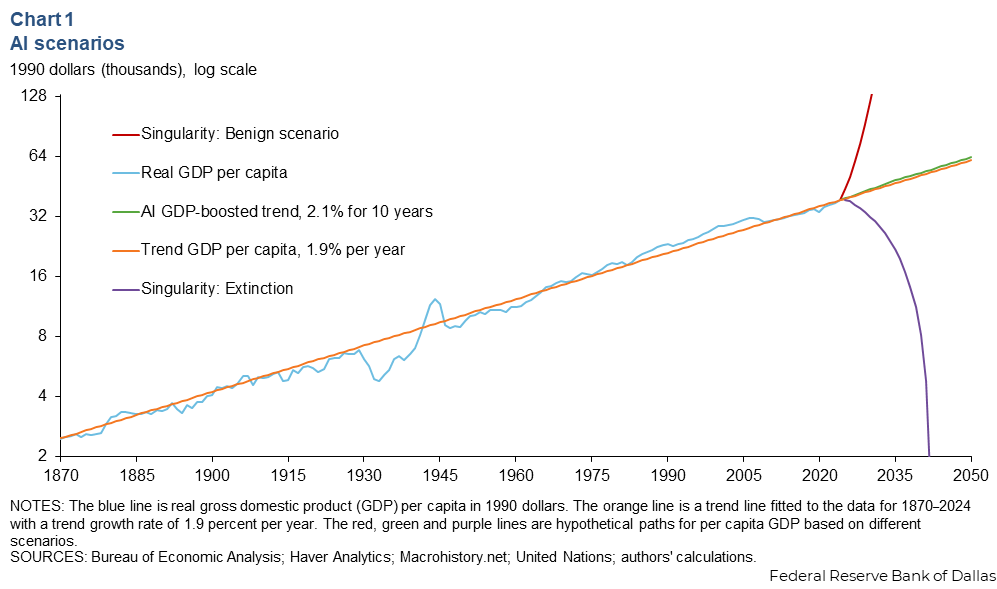

So far so good. But then it introduces this graph, which stops you mid-stride, especially given the heightened global fears over AI TODAY:

“As many other researchers have noted, what is remarkable over this 150-year-plus period is the relatively steady increase in living standards over time. Productivity growth is usually associated with both job destruction and job creation, although as we observed in a previous article, predicting the scale of these changes is challenging.”

Then the broader context needed for much of the previous century:

“U.S. GDP per capita has advanced at an annual rate of approximately 1.9 percent a year, despite two world wars, the Great Depression, the Great Recession and major technological advances (such as electrification, the internal combustion engine and computerization) that were viewed as at least as important in their day as the advent of AI is today. Furthermore, the single most important determinant of this steady improvement has been productivity growth.”

“Under one view of the likely impact of AI, the future will look similar to the past, and AI is just the latest technology to come along that will keep living standards improving at their historical rate. With this expectation, living standards over the next quarter century will follow something close to the orange line in Chart 1, extending past 2024.”

Then the meaty stuff on other possibilities with lower probabilities:

“However, discussions about AI sometimes include more extreme scenarios associated with the concept of the technological singularity. Technological singularity refers to a scenario in which AI eventually surpasses human intelligence, leading to rapid and unpredictable changes to the economy and society. Under a benign version of this scenario, machines get smarter at a rapidly increasing rate, eventually gaining the ability to produce everything, leading to a world in which the fundamental economic problem, scarcity, is solved. Under this scenario, the future could look something like the (hypothetical) red line in Chart 1.”

Then the dramatic, careening “Singularity Extinction” purple line in the chart above.

“Under a less benign version of this scenario, machine intelligence overtakes human intelligence at some finite point in the near future, the machines become malevolent, and this eventually leads to human extinction. This is a recurring theme in science fiction, but scientists working in the field take it seriously enough to call for guidelines for AI development. Under this scenario, the future could look something like the (hypothetical) purple line in Chart 1.”

Then an attempt to tamp down our inclination to jump off a balcony before 2042 or thereabouts (per the chart above), as AI Agents and the like take over:

“Today there is little empirical evidence that would prompt us to put much weight on either of these extreme scenarios (although economists have explored the implications of each). A more reasonable scenario might be one in which AI boosts annual productivity growth by 0.3 percentage points for the next decade. This is at the low end of a range of estimates produced by economists at Goldman Sachs. Under this scenario, we are looking at a difference in GDP per capita in 2050 of only a few thousand dollars, which is not trivial but not earth shattering either. This scenario is illustrated with the green line in Chart 1.”
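To make that “few thousand dollars” arithmetic concrete, here is a minimal Python sketch of the compounding involved. It is an illustration under stated assumptions, not the Dallas Fed’s own model: it takes the paper’s roughly 1.9 percent historical growth rate as the baseline, assumes the 0.3 percentage point productivity boost passes one-for-one into GDP per capita growth for the first ten years only, and starts from a hypothetical 2024 GDP per capita of $70,000 (an illustrative round number, not a figure from the paper).

```python
# Sketch of the compounding behind the "few thousand dollars" estimate.
# ASSUMPTIONS: START_GDP_PER_CAPITA is a HYPOTHETICAL illustrative value,
# not from the paper; the 0.3 pp productivity boost is assumed to add
# one-for-one to GDP per capita growth for the first decade only.

BASE_GROWTH = 0.019               # ~1.9% historical annual growth (from the paper)
AI_BOOST = 0.003                  # +0.3 percentage points (from the paper)
BOOST_YEARS = 10                  # boost lasts "for the next decade"
START_YEAR, END_YEAR = 2024, 2050
START_GDP_PER_CAPITA = 70_000.0   # hypothetical 2024 level, in dollars


def project(start: float, years: int, boost: float = 0.0, boost_years: int = 0) -> float:
    """Compound `start` forward for `years` years.

    The first `boost_years` years grow at BASE_GROWTH + boost;
    the remaining years grow at BASE_GROWTH alone.
    """
    level = start
    for year in range(years):
        rate = BASE_GROWTH + (boost if year < boost_years else 0.0)
        level *= 1.0 + rate
    return level


years = END_YEAR - START_YEAR  # 26 years of compounding
baseline = project(START_GDP_PER_CAPITA, years)
with_ai = project(START_GDP_PER_CAPITA, years, AI_BOOST, BOOST_YEARS)

print(f"Baseline {END_YEAR}: ${baseline:,.0f}")
print(f"With AI boost {END_YEAR}: ${with_ai:,.0f}")
print(f"Difference: ${with_ai - baseline:,.0f} ({with_ai / baseline - 1:.1%})")
```

Because both paths compound from the same starting point, the percentage gap (about 3 percent under these assumptions) does not depend on the hypothetical starting level; only the dollar figure does. At a starting level in this range, that 3 percent works out to a few thousand dollars by 2050, in line with the paper’s “not trivial but not earth shattering” framing.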

I bring up all this because Anthropic, the second most important US AI company, is in the throes of a political dispute with the Trump Administration and the Pentagon/DoD led by Pete Hegseth. The AI issues are nuanced and involve all the leading LLM AI companies.

It’s an existential AI tussle, with big implications for Silicon Valley, over the possible use of Anthropic technologies for mass surveillance and/or automated AI killing weapons systems without humans in the loop. You know, 1983’s WarGames, or 1984’s The Terminator and its Skynet.

All this while recent AI wargames ended up with LLM AIs choosing tactical nuclear weapon options over 95% of the time.

These are a dramatic extension of the existential fears in the Dallas Fed chart above: this time over AI governance between Anthropic, with its industry-leading AI technologies, and the Pentagon, where the DoD wants a freer hand in the use of that technology.

And despite OpenAI’s accommodations with the Pentagon, and CEO Sam Altman’s offer to use its agreement as a template for its peers, this issue is far from resolved.

These are extraordinary topics and debates of our day.

And none of them existed at these levels in the regular tech waves of the last three decades, like the PC or the Internet. Perhaps Social and Smartphones a little bit.

But it speaks to bigger questions that go beyond technology and need our collective attention: questions over technologies that barely exist yet, but could arrive at the scale of impact feared above, be it by the Dallas Fed or the DoD. Along with OpenAI’s efforts to help forge industry agreement, and Anthropic’s choices despite the best intentions.

And that is a Bigger Picture worth pondering a bit at this point in the AI Tech Wave, on a Sunday. This while, of course, the US and its ally Israel are attacking Iran on an unrelated, non-AI geopolitical matter. And apparently with Anthropic AI used in the mix. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)