AI: The supply crunch frustrations of getting more AI for less. RTZ #1045

History does repeat and/or rhyme, as they say, especially as tech waves and booms really get going. The 1990s saw an acute shortage of Internet dial-up modem network capacity for America Online (AOL), Microsoft Network (MSN) and others, as millions of eager mainstream folk tried to get online daily to check their email and messages.

As the Internet Equities Analyst at Goldman Sachs back then, I helped finance the IPOs and secondaries of TCP/IP network capacity providers like UUNET and other ISPs (Internet Service Providers). They needed hundreds of millions in capex investments to build their networks.

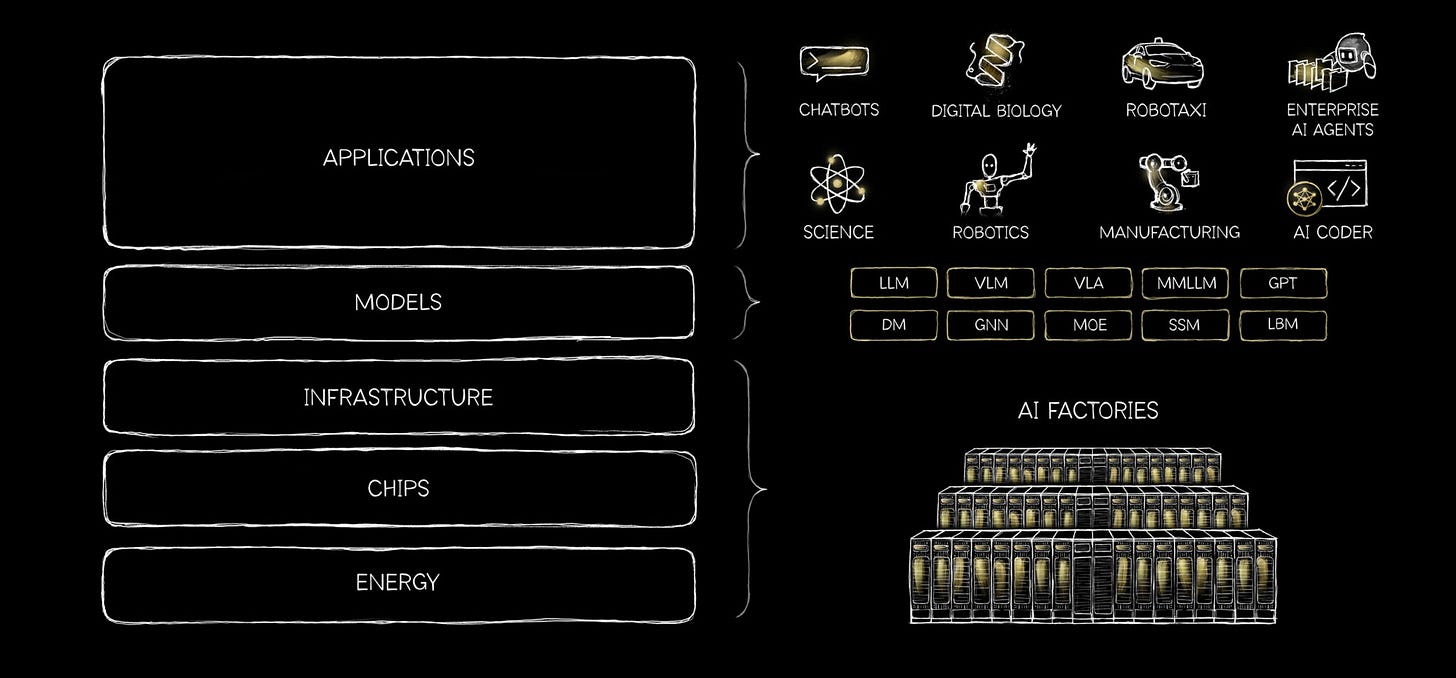

Add a few zeroes, and we have the new AI Compute shortage reality in this AI Tech Wave, a topic I’ve discussed at length. It’s already a global supply chain headache for leading LLM AI companies like OpenAI and Anthropic, as they both race towards mega-IPOs later this year, a few months after Elon Musk’s SpaceX/xAI, with its own Grok LLM AI products.

So what we have is a shortage of AI Compute networks, and of the critical tokens, that’ll last a few years, despite the hundreds of billions being spent on AI Data Centers and their Power.

Axios covers this well in “AI’s Compute Wars”:

“Anthropic’s runaway success is exposing AI’s core problem: compute costs.”

“Why it matters: The closer AI labs get to IPOs, the harder it becomes to hide a structural margin problem: The more customers they win, the more they spend on the compute to serve them.”

It’s the high-class problem of variable costs that rise along with mainstream demand.

“State of play: Anthropic’s server capacity isn’t keeping pace with demand, leaving paying customers stuck on usage limits and outages.”

“Server capacity and compute power are finite resources that AI labs often have to purchase before they know how much demand they’ll have from customers.”

“Buy too much expensive capacity, erode your margins. Buy too little and you can’t meet customer demand, and they’ll run to your competitors as a result.”
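To put rough numbers on that trade-off, here is a minimal sketch in Python. Everything in it is hypothetical and for illustration only: the `outcome` function, the price of 10 per unit of compute sold, the cost of 6 per unit of capacity bought up front, and the demand of 100 units. None of these figures come from Axios or from any AI lab.

```python
def outcome(capacity, demand, price=10, unit_cost=6):
    """Return (profit, margin, unmet_demand) for one period.

    Hypothetical model: capacity is paid for up front at unit_cost per
    unit, whether or not it is used, and revenue is earned only on the
    demand that can actually be served.
    """
    served = min(capacity, demand)        # cannot sell more than was bought
    revenue = price * served
    cost = unit_cost * capacity           # idle capacity still costs money
    profit = revenue - cost
    margin = profit / revenue if revenue else 0.0
    unmet = max(demand - capacity, 0)     # customers left on usage limits
    return profit, margin, unmet


demand = 100  # hypothetical units of customer demand

overbuy = outcome(capacity=160, demand=demand)
underbuy = outcome(capacity=60, demand=demand)

for label, (profit, margin, unmet) in [("overbuy", overbuy), ("underbuy", underbuy)]:
    print(f"{label}: profit={profit}, margin={margin:.0%}, unmet demand={unmet}")
```

In this toy setup, overbuying serves every customer but leaves a thin margin, while underbuying keeps a healthy margin but leaves a chunk of demand unserved and free to go to a competitor.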

It’s a tough dilemma to manage through, especially at the current exponential AI scales:

“What they’re saying: Anthropic CEO Dario Amodei said there’s “no hedge on earth” against overbuying compute. Buying too much would bankrupt the company if demand falls short.”

“Dylan Patel of SemiAnalysis warned Anthropic may be pushed toward lower-quality compute as OpenAI locks up premium supply.”

“When Anthropic capped usage during peak hours, OpenAI said it would double limits.”

“Amodei has signaled he’d rather lose customers in the short term than overbuy compute and torch his margins.”

The issue, of course, is the dual strain on AI compute, which is needed for both internal and external purposes:

“Between the lines: Compute isn’t just fueling customer usage. Labs also need it to train upcoming models.”

“Anthropic schedules training around peak hours to reduce costs, according to a source familiar with the matter.”

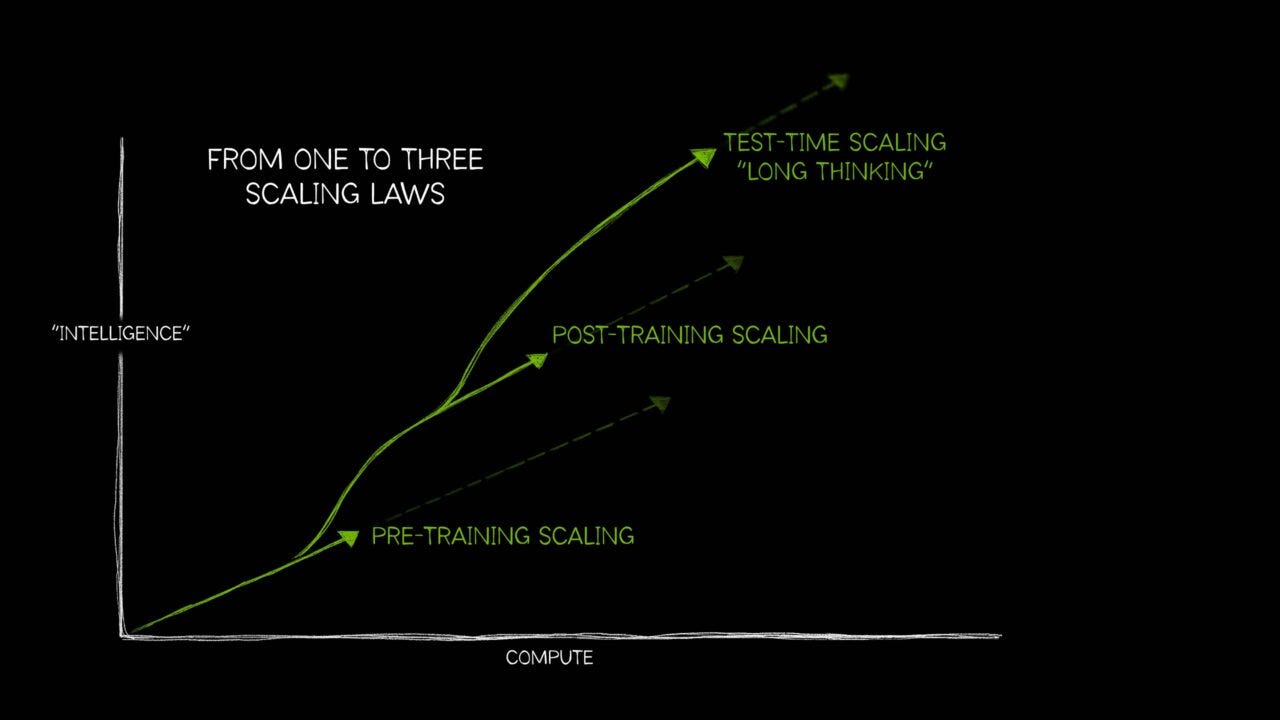

““Always watch the compute, other things matter, but any new capability breakthrough probably came from throwing more compute at it,” Peter Gostev, AI capability lead at Arena AI, wrote on X.”

The good news, of course, is that AI compute costs come down as efficiencies go up at scale:

“Yes, but: Compute costs are plummeting as efficiency in chips and software increases.”

But then there’s more use, as more people want in on a good thing:

“But usage is skyrocketing faster so total spending keeps climbing: Classic Jevons Paradox.”
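The arithmetic behind that Jevons Paradox point can be sketched in a few lines of Python. The rates here are assumptions picked purely for illustration: unit compute cost halving each year while usage triples. The `spend_by_year` helper and the starting values are hypothetical too, not figures from Axios.

```python
def spend_by_year(unit_cost, usage, cost_factor, usage_factor, years):
    """Total spend per year when unit cost and usage each change by a fixed factor.

    Total spend is simply unit_cost * usage, so it grows whenever
    cost_factor * usage_factor is greater than 1.
    """
    spends = []
    for _ in range(years):
        spends.append(unit_cost * usage)
        unit_cost *= cost_factor    # efficiency gains: cheaper per unit
        usage *= usage_factor       # more people, doing more with AI
    return spends


# Hypothetical inputs: cost per unit halves yearly, usage triples yearly.
spends = spend_by_year(unit_cost=10.0, usage=1_000,
                       cost_factor=0.5, usage_factor=3, years=4)

for year, spend in enumerate(spends):
    print(f"year {year}: total spend = {spend:,.0f}")
```

With these made-up rates, each unit gets 50% cheaper every year, yet the total bill still grows 1.5x annually because 0.5 x 3 = 1.5.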

“Zoom out: AI capex from the hyperscalers is expected to hit nearly $700 billion this year as they race to build capacity.”

“Some of that money goes toward future capacity — data center leases, power contracts — and maintaining existing systems, rather than raw compute.”

“Translation: Even at record capex levels, the industry isn’t buying enough compute to meet full demand.”

And that is causing the big debates for investors:

“Follow the money: While that could dissuade developers in the tech world, it has the opposite effect on Wall Street.”

“Anthropic proved its spending discipline while OpenAI spent ferociously on compute, which is now resulting in less demand for shares in Altman’s company, according to Bloomberg.”

“The bottom line: The AI race looks less like a model competition and more like a capital allocation problem — and the winners are still TBD.”

So expect frustrations amongst mainstream users in this AI Tech Wave, as we all try to do more with AI and the bills go up with use.

That means frustration with intermittent access at times, and certainly with the variable-cost bills at the end of sessions, despite at times upgrading to higher subscription tiers, from $20/month to $200/month and beyond.

We’ve all been there before, and will be there again this time. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here.)