AI: Weekly Summary. RTZ #1046

-

Microsoft bundles OpenAI & Anthropic into a new Copilot tier: Microsoft is leveraging its unique equity and business partnerships with both OpenAI and Anthropic. It’s bundling both LLM AIs into a new Copilot ‘Critique’ capability that uses one model to check and improve the results of the other. The offering will be part of the new $99 Copilot offering for 450+ million Microsoft Office 365 users worldwide. Fewer than 3% of those pay for the AI Copilot services. Microsoft hopes to accelerate Copilot AI add-ons with new features like Critique. The current data shows lots of enterprises trying out the various Copilot AI capabilities, with broad and deep deployments with its own AI models still to come. This is also being seen at other software vendors, particularly as customers are also dealing with the increasing AI Compute crunch as the hyperscalers work hard on building multi-gigawatt AI Data Centers and the power supplies to ramp them up (see next). Microsoft continues to prune its cloud and sales groups around these efforts. Separately, Microsoft also moved AI Chief Mustafa Suleyman, a $650 million acquihire two years ago, from Copilot to heading up Microsoft’s ‘Superintelligence’ efforts. Ironically, OpenAI a few days ago moved senior exec Fidji Simo from ‘CEO of Applications’ to ‘AGI Deployment’. Whenever that transpires. More here.

-

AI Compute Crunch worsens: OpenAI, Anthropic and others have the high-class problem of seeing higher demand for AI compute, as customers try more AI capabilities and tiers. The problem of course is ramping up the AI infrastructure, with both data centers and power into the multi-gigawatts. The average gigawatt AI data center costs over $50 billion, and takes at least 2-3 years to bring online, especially given rising supply constraints for everything from memory, to AI talent, to now helium due to the US war with Iran. The leading AI providers are all rushing to provide higher tiers of AI access for $200/month or more, a 10x jump from the basic $20/month most offer to start things off. Despite the pricing and supply ramps, users are still frustrated by intermittent compute services as they pay higher prices. The situation echoes the early internet era, when consumers rushed into dial-up internet services like AOL, MSN and others. This time it’s all got exponentially more zeroes for all stakeholders. As I discussed on Stocktwits TV this week, we have a period of high demand for slowly ramping AI compute infrastructure. Much like AOL in the mid-1990s. More here.

-

US AI Energy ramp tougher vs China: This week saw the energy industry CERAWeek conference in Houston, which brought together big tech/AI company and energy industry executives. The topic of course is the fast ramping power demands for AI Data Centers, and the expanded grid to support it all. All of this has to be done under a dynamic regulatory regime that spans local, state and federal entities and their priorities. The Trump administration is of course taking a keen interest here, trying to align the priorities of the tech/AI and power industry players. It’s a difficult ballet to put together, particularly given the historically different rates of improvement in the two industries over decades. Now the energy crunch is seeing a far more aggressive tech industry explore creative ‘behind the meter’ power ramp-up strategies. The energy sources cut across the spectrum, from natural gas to nuclear to some forms of renewables, despite administration resistance on that front. All while China continues to ramp up its AI-related power and energy needs far more aggressively across the supply spectrum. More here.

-

US AI Token Race sharpens vs China: The power demands above, along with the AI data center ramps, are of course meant to ramp up the AI intelligence tokens that are generated for AI training, inference and everything in between. The tokens are used for both query inputs and outputs. And they’re exploding in volume as AI applications grow from AI chatbots to AI reasoning to AI Agents and beyond. China of course is also focused on this dynamic, and by some measures it is doing better vs the US in ramping up its capability. The industry is seeing more efficiencies in manufacturing these AI tokens, especially as leading infrastructure providers like Nvidia ramp up their CPU/GPU/memory infrastructure from the current Blackwell series to the coming Vera Rubin and beyond. And competitors like AMD, ARM and others are also ramping up their products to produce and process far more intelligence tokens. It’s a race that will continue in earnest through the decade. AI Tokens are to the Internet going forward what Oil has been to the world for over 125 years (CORE long-term book recommendation, The Prize, by Daniel Yergin). More here.

-

SpaceX, OpenAI, Anthropic mega-IPOs to be force-fed into Passive Stock Indices: There are plans afoot to accelerate the entry of the three mega AI IPOs this year, SpaceX/xAI, OpenAI and Anthropic, into the major passive mutual fund and ETF investment index structures. The expected $3 trillion of cumulative valuation of these companies will be accelerated into these indices in days instead of the months and years of seasoning that are typically required. In addition, SpaceX in particular is negotiating for early lockup release for its preferred investors into the vast pools of public passive equity demand. The assets that are collected in these pools are measured in the hundreds of billions and trillions, creating a non-fundamental flood of money that is forced to buy into these offerings regardless of the investment fundamentals. This is a situation a bit reminiscent of the ratings agencies in the 2008 debt crisis, which allowed the CDO investment craze to balloon with misrepresented investment grades. Regardless of the AI fundamental investment opportunities, this is a development that would be better for all if it did not happen. These mega IPOs, as good as they may be for investors long-term, should NOT be force-fed into trillions in passive investment funds. Like Geese with Gavage, for Foie Gras. More here.

Other AI Readings for the weekend:

-

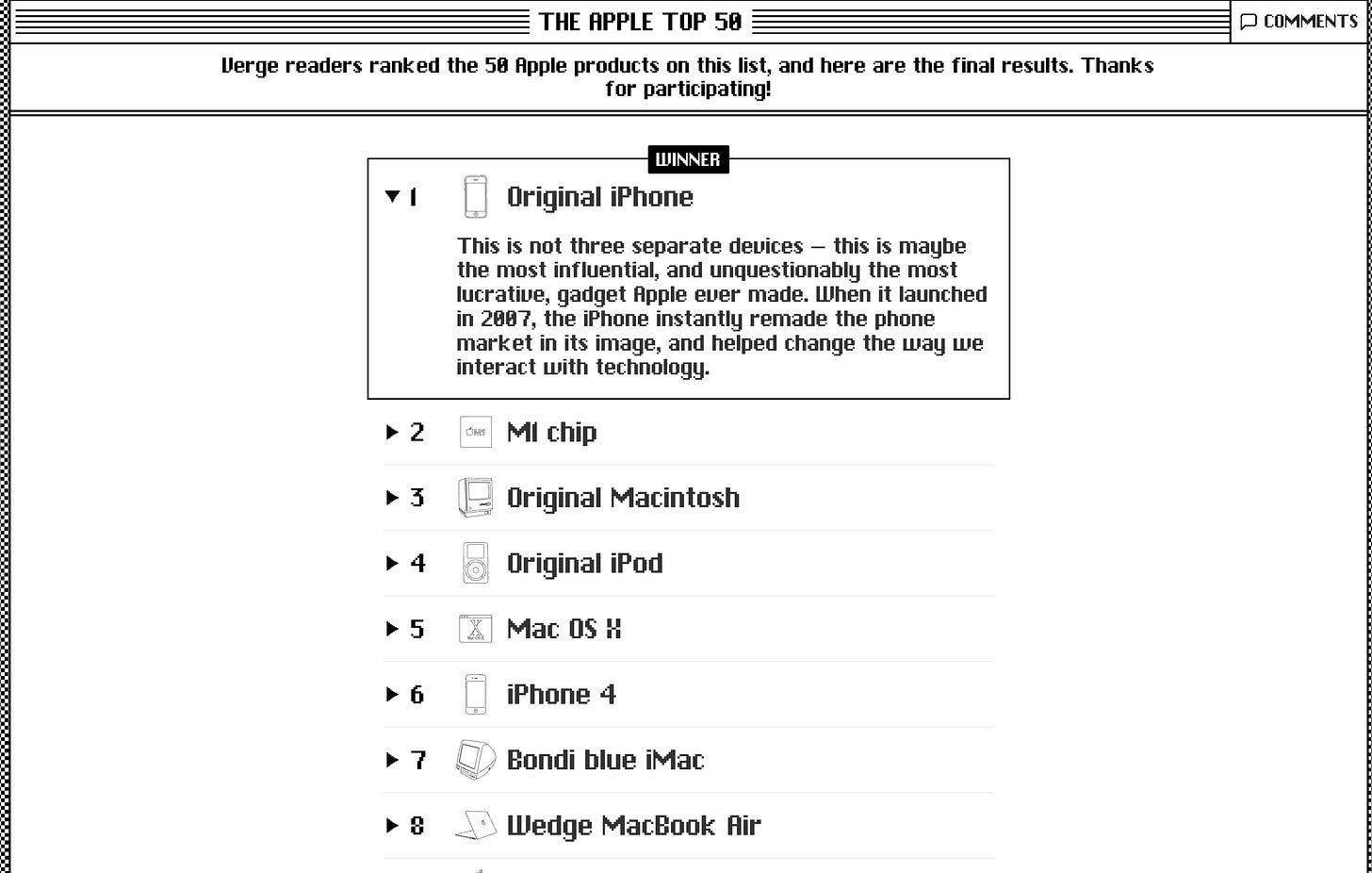

Apple turns 50 through unique design & supply chain innovations. The Verge has a timely ranking of fifty selected iconic contributions by Apple to global life today. Spoiler alert: the iPhone, introduced iconically by Steve Jobs in 2007, of course wins by a mile, across 1.6+ million votes. Worth a weekend peruse. More here.

-

Startling announcement from OpenAI, as it acquires the 2-year-old, $5 million-revenue tech podcast TBPN for ‘low hundreds of millions’, even as it moves away from ‘side quests’. All this a few months from its mega-IPO. More here.

(Additional Note: For more weekend AI listening, here’s the latest AI Ramblings Episode 48 on topical items. This week, a deep dive on Nvidia’s accelerating AI Kingmaker Role, & More):

Up next, the Sunday ‘The Bigger Picture’ tomorrow. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)