AI: Anthropic & OpenAI's 'Velvet Rope AI' Upgrade Drift. RTZ #1064

The next LLM AI upgrades from Anthropic and OpenAI, Mythos and ‘Spud’, may be heading for a very different marketing strategy than most tech waves, at this Power-short, AI Compute-driven stage.

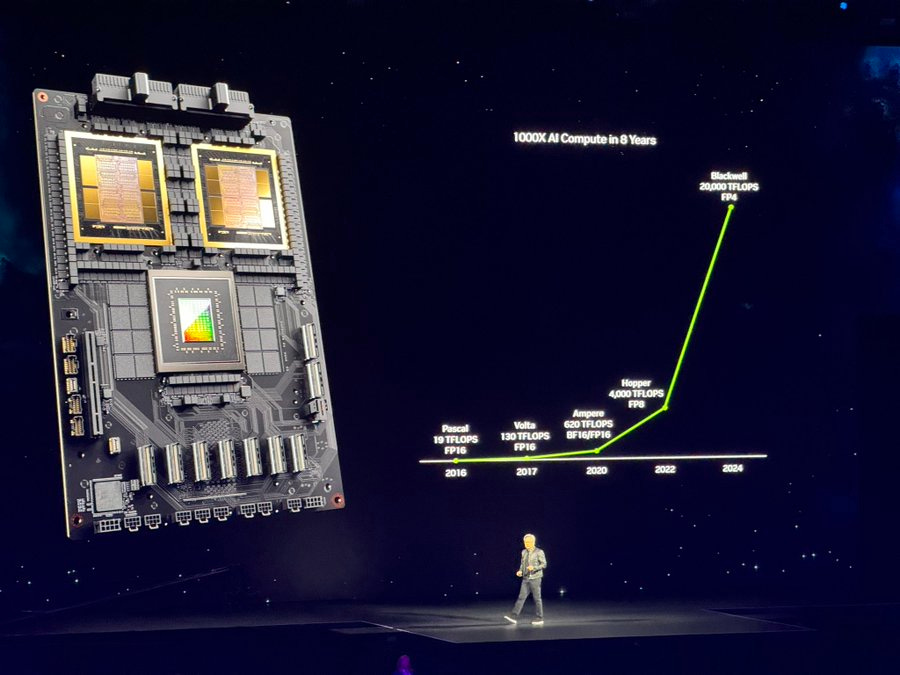

Due to security evaluation issues with the latest models AND higher variable Compute costs, the companies seem to be drifting into what I’d call a ‘velvet rope’ distribution strategy, reserved for their top customers who can pay higher variable pricing without flinching.

Axios explains this emerging landscape in “Anthropic’s AI downgrade stings power users”:

“Anthropic users across online forums are raising the same complaint: Claude suddenly feels … bad.”

“Why it matters: The backlash lands just as Anthropic is testing a more powerful model, Mythos — raising questions about whether cutting-edge AI is becoming less accessible even as it gets more capable.”

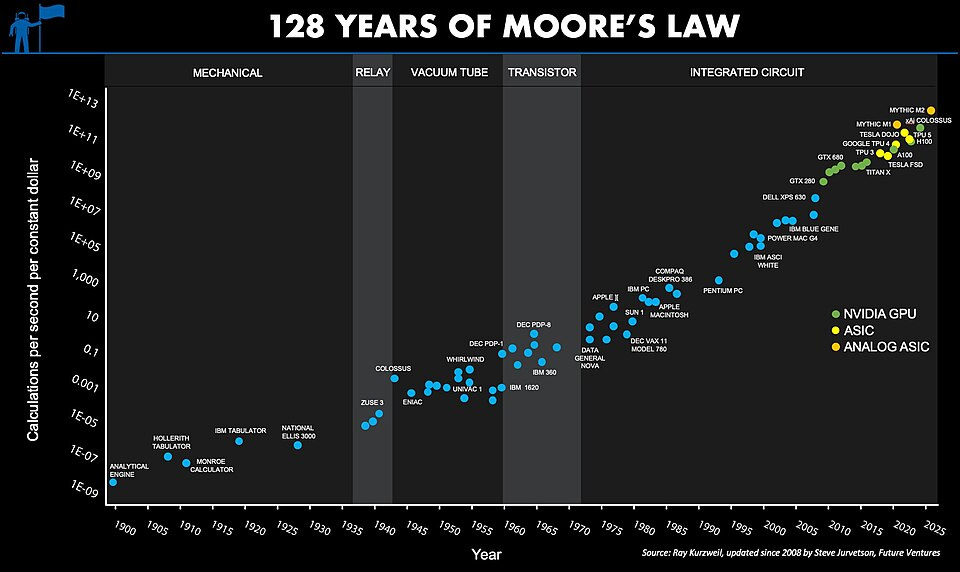

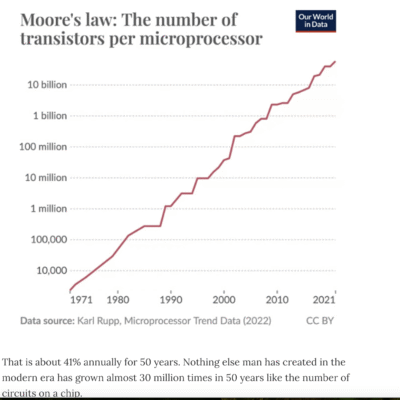

This is very counter-intuitive in the traditional world of ‘Moore’s Law’-driven tech, with its baked-in cost and benefit expectations that took hold long before the term was even coined. There, the very best is supposed to get more affordable AND accessible. Not go the other direction.

“Driving the news: Over the past few weeks, users on X, GitHub and Reddit have been swapping anecdotes, benchmarks and prompts in an effort to pinpoint what changed and why.”

“‘Claude has regressed to the point it cannot be trusted to perform complex engineering,’ an AMD senior director wrote in a widely shared post on GitHub.”

“Others have posted side-by-side outputs and benchmarks they say show Claude generating answers that are less accurate or nuanced.”

“Much of the speculation centers on whether Claude has been deliberately scaled back (what users are calling ‘nerfed’), either to control costs or to redirect scarce compute toward Mythos and other frontier efforts.”

I can personally attest, anecdotally, to feeling a bit less enthused about Anthropic’s latest top model, Opus 4.7, vs Opus 4.6, using Claude Cowork, which itself is built on top of Anthropic’s industry-busting Claude Code. And I’m far from a top-tier, advanced user of these wares.

“The other side: Anthropic says it adjusted the default level of reasoning in Claude Code, but denies the changes were tied to compute constraints or Mythos.”

“When asked about the online complaints, Anthropic pointed Axios to a post on X from Boris Cherny, head of Claude Code, from March 6.”

“‘You can change it anytime in the /model selector if you prefer low effort (faster) or high effort (more intelligence). The setting is sticky and will persist for your next session,’ Cherny said.”

“Between the lines: Analyst Patrick Moorhead decided to ask Claude to weigh in.”

“‘Anthropic made real configuration changes that objectively reduced default thinking depth across all surfaces including claude.ai, but the most extreme secret nerfing narrative overstates what happened,’ Claude said as part of its lengthy response.”

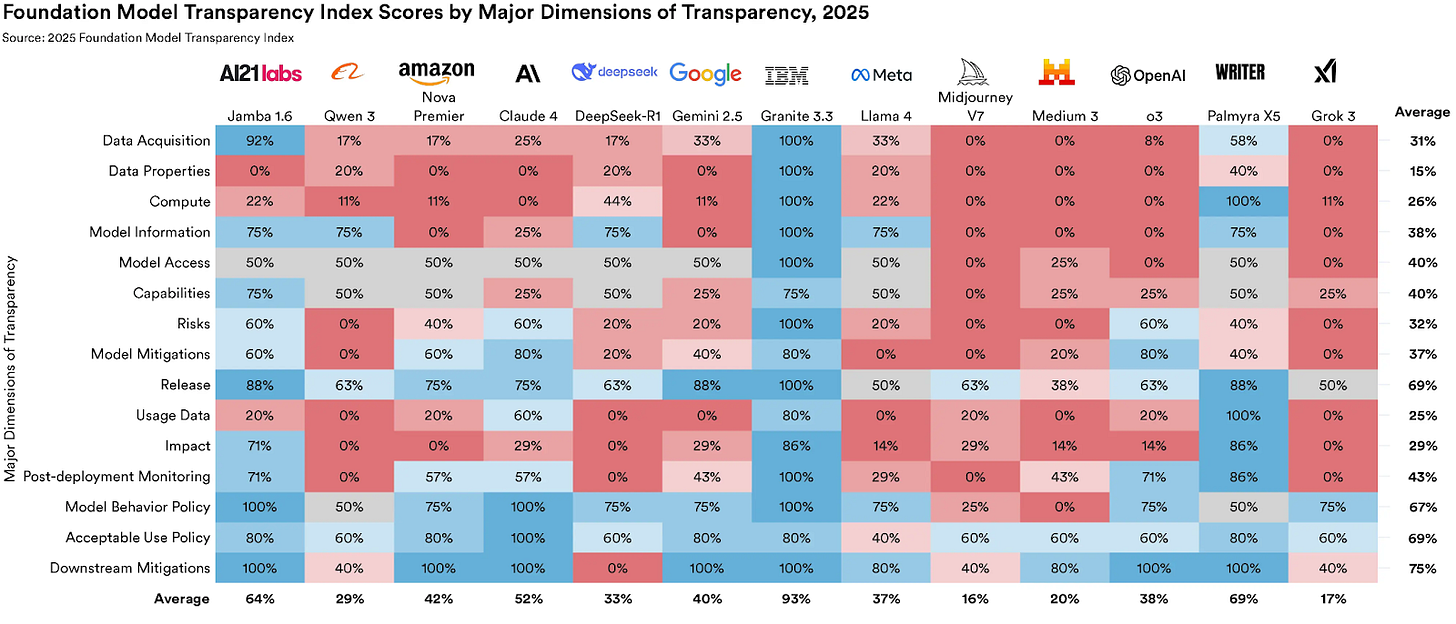

Part of the difficulty is what I’ve described before as the ‘opacity’ of LLM AI models today, with transparency declining by the day.

Hence the fundamental difficulty of understanding what’s ‘latest and greatest’ in the most recent upgrade from just the numerical bump in the version number.

“Another theory is that users aren’t seeing decline so much as acclimating to what previously felt magical.”

“Over time, expectations rise and flaws become more noticeable — a phenomenon known as habituation.”

“Yes, but: Even if the change is explainable, the perception problem is real — especially for power users relying on consistent performance for coding and research workflows.”

The reality is probably somewhere in the middle. The models can do more for the most advanced users, and they can see it over time. But they’ve also got to be paying high, variable ‘tokenmaxxing’ prices for the underlying AI Compute to see those benefits.

“The big picture: The fight over Claude’s “intelligence” points to a broader shift: access to top-tier AI is fragmenting.”

“Advanced capabilities are increasingly gated behind higher-cost tiers, API usage or experimental programs.”

“Anthropic is also reportedly close to upgrading its high-end Opus model to version 4.7.”

Thus the current marketing challenge for the top model companies, ahead of the mega AI IPOs expected later this year.

“The increasing stratification could lead to a division between those who can afford to pay top dollar for the best models and those who can’t.”

“Anthropic recently moved large enterprise customers to a fully usage-based (token) pricing model, tying intelligence more directly to spend.”

“It’s also reinforcing a widening divide between power users and dabblers around AI’s capabilities.”

“What we’re watching: Whether “default” AI experiences continue to get worse even as frontier systems get dramatically stronger.”

For now, the revenue ramps are up and to the right for both Anthropic and OpenAI. So there’s time for product design and marketing adjustments.

But the customers behind the ‘velvet ropes’ in this AI Tech Wave are fidgeting and starting to grumble. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here.)