AI: Anthropic's AI Compute Constraints throttling growth. AI-RTZ #1080

Regular readers and listeners here at AI-RTZ have seen my posts on how Anthropic has been racing past OpenAI of late, led by the global developer and enterprise popularity of its Claude Code and, more recently, Claude Cowork AI applications.

It’s driving growth seldom seen in any tech wave to date, AI or otherwise. We got some more color on it from founder/CEO Dario Amodei at Anthropic’s Code with Claude developer conference this week.

The New York Times lays it out in “Anthropic’s C.E.O. Says It Could Grow by 80 Times This Year”:

“The chief executive, Dario Amodei, said the rapid growth had exponentially increased the start-up’s need for more computing power.”

That’s something we’ve known for a while, and discussed at length, for both Anthropic and its rival OpenAI. The NYTimes continues:

“Dario Amodei, the chief executive of Anthropic, said on Wednesday that his artificial intelligence company had planned for growing about 10 times as big this year, only to reach a growth rate that could make it 80 times as big this year instead.”

“Mr. Amodei, 43, made his remarks at Anthropic’s annual developer conference in San Francisco, where he and other executives gave a glimpse into the company’s plans. Anthropic is one of the world’s leading A.I. start-ups with its Claude chatbot and its popular A.I. coding tool, Claude Code, which people can pay to subscribe to. Last month, Anthropic said its annual revenue run rate had surpassed $30 billion, up from $9 billion at the end of 2025.”

“At the conference, Mr. Amodei said Anthropic had been overwhelmed by the rate of growth, which has increased the company’s need for computing power to deliver its A.I. products to customers.”

And then came something seemingly heartfelt from a tech CEO:

“I hope that 80-times growth doesn’t continue because that’s just crazy and it’s too hard to handle,” Mr. Amodei said. “I’m hoping for some more normal numbers.”

In the meantime, Anthropic is scrambling for AI Compute wherever it can find it. Yesterday, one source was rival SpaceX/xAI, yes, the one led by Elon Musk. It came in true Elon style: a deal of sheer convenience for both sides.

“To obtain more computing power, Anthropic has signed a series of deals with industry giants. At the conference, Anthropic said it had sealed an agreement with Elon Musk’s SpaceX to use all of the computing capacity from the rocket company’s Colossus 1 data center in Memphis. The move gives Anthropic access to the computing power of more than 220,000 Nvidia A.I. chips, the company said, and opens the door to working with SpaceX to create A.I. data centers in space.”

Note that while training its own Grok models, Colossus 1 was reportedly running at 11% ‘Model FLOPs utilization’ (aka MFU), versus 40%+ MFU for peers training LLMs. So Elon did have spare capacity to rent out to defray costs. It was also put to use a few days ago in the $60 billion deal of mutual convenience with AI coding company Cursor.
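As a rough back-of-the-envelope check on those utilization figures, here is a minimal Python sketch. It assumes the reported 11% and 40% numbers are directly comparable MFU values (40% is treated as a floor, since the figure cited is "40%+"), and the function name is purely illustrative.

```python
def utilization_gap(peer_mfu: float, own_mfu: float) -> float:
    """Return how many times more useful work a peer gets per chip."""
    if own_mfu <= 0:
        raise ValueError("own_mfu must be positive")
    return peer_mfu / own_mfu


# Reported figures from the paragraph above, treated here as assumptions.
COLOSSUS_MFU = 0.11  # reported utilization while training Grok
PEER_MFU = 0.40      # lower bound of the "40%+" cited for peers

gap = utilization_gap(PEER_MFU, COLOSSUS_MFU)
print(f"Peers get at least {gap:.1f}x the useful work per chip")
```

On these assumed inputs the gap works out to a bit over three and a half times, which is the sense in which there was spare capacity to rent out.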

And Anthropic needed the extra SpaceX/xAI capacity in spades.

“As you saw today with the SpaceX compute deal, we’re working as quickly as possible to provide more compute than we have in the past,” Mr. Amodei said, using an industry term for computing power. He added that his company was working every day “to obtain even more compute” for users.

“With the SpaceX deal, Anthropic said, it can expand the amount of coding that some Claude Code subscribers can do before they hit a usage limit with the tool. Anthropic offers people different pricing depending on the amount of coding they want to do.”

“Last month, Google, which has been a longtime investor in the start-up, committed to invest as much as another $40 billion in Anthropic. Amazon, another investor in Anthropic, agreed to invest as much as $25 billion.”

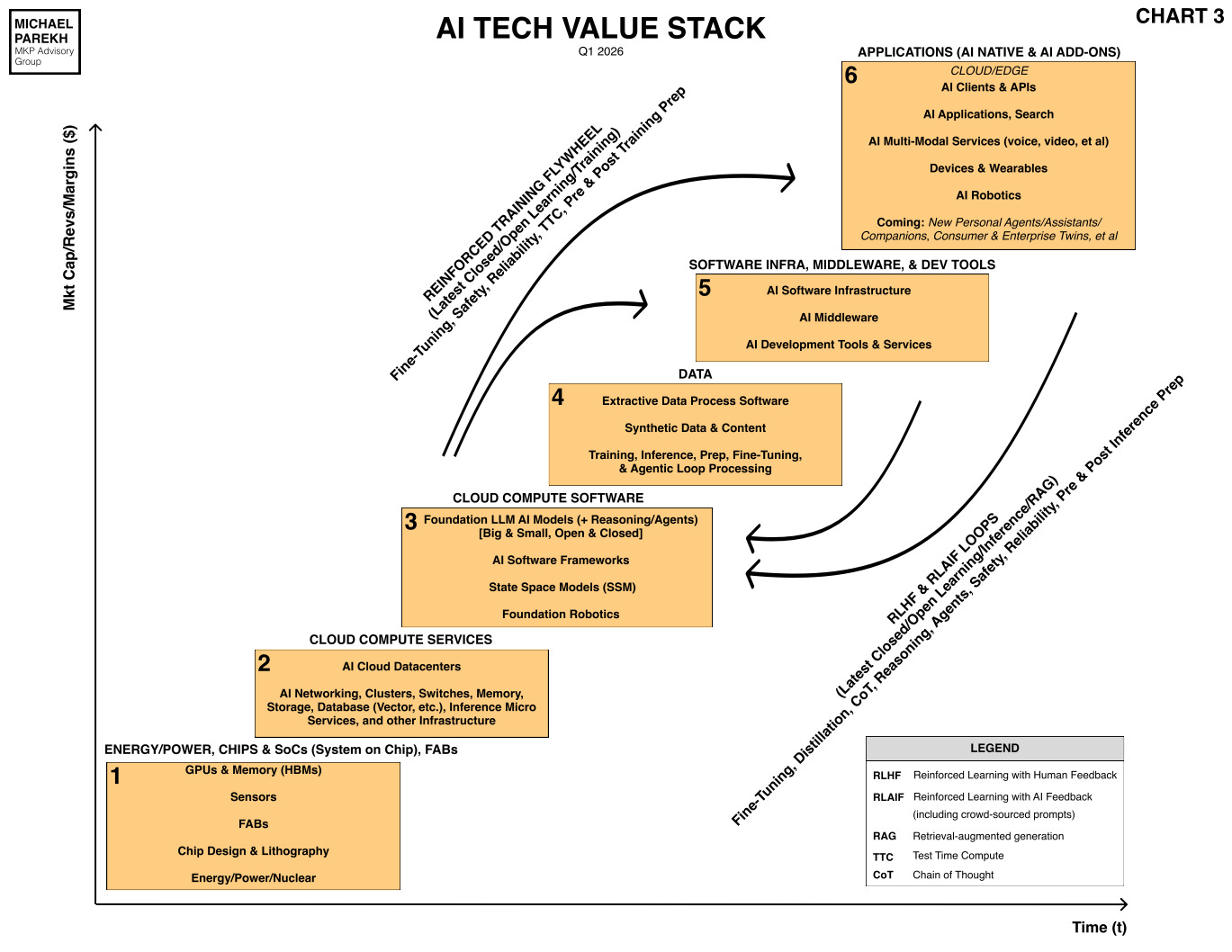

The larger point remains that Anthropic and its competitor OpenAI are both facing high-class problems as this AI Tech Wave moves from AI chatbots to AI Agents on the AGI roadmap.

It is all due to the multiples of demand that AI Agents drive, especially among developers and enterprises.

Consumer demand for AI Agents may take a little longer, as I discussed yesterday, but it remains an additional gear to shift into down the road.

For now, all this demand implies pricing power up and down the AI tech stack. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here.)