AI: Anthropic's peek-a-boo of Claude Mythos, its next frontier model. AI-RTZ #1051

I’ve maintained for months now that Anthropic is aggressively executing on its AI opportunities ahead of OpenAI, especially in the enterprise, as both race towards optimistic IPOs this year. The sibling companies are currently neck and neck, even though OpenAI has long been the Coke to Anthropic’s Pepsi.

But things seem to be turning around a bit as I’ve noted.

Particularly in Anthropic’s revenue ramp vs. OpenAI this year. And this despite the government-backed headwinds on defense issues for Anthropic vs. OpenAI.

It’s been known for a while that all the top LLM AI companies are readying their next biggest and best models. Anthropic’s brand, as meticulously crafted by founder/CEO Dario Amodei, has long been burnished by its veneer of safety and AI responsibility.

So it’s no surprise how Anthropic is choosing to introduce its next-generation AI model, dubbed ‘Mythos’, the next iteration of its Claude family of products (Code, Cowork, etc.).

Concurrently, Anthropic also rolled out Project Glasswing, aimed at ‘Securing critical software for the AI era’. Anthropic also discussed Claude Mythos Preview’s cybersecurity capabilities.

Both are notable for the AI Tech Wave going forward. They will likely prompt similar efforts from peers like OpenAI and others, and they are already unleashing a lot of discussion on the internet.

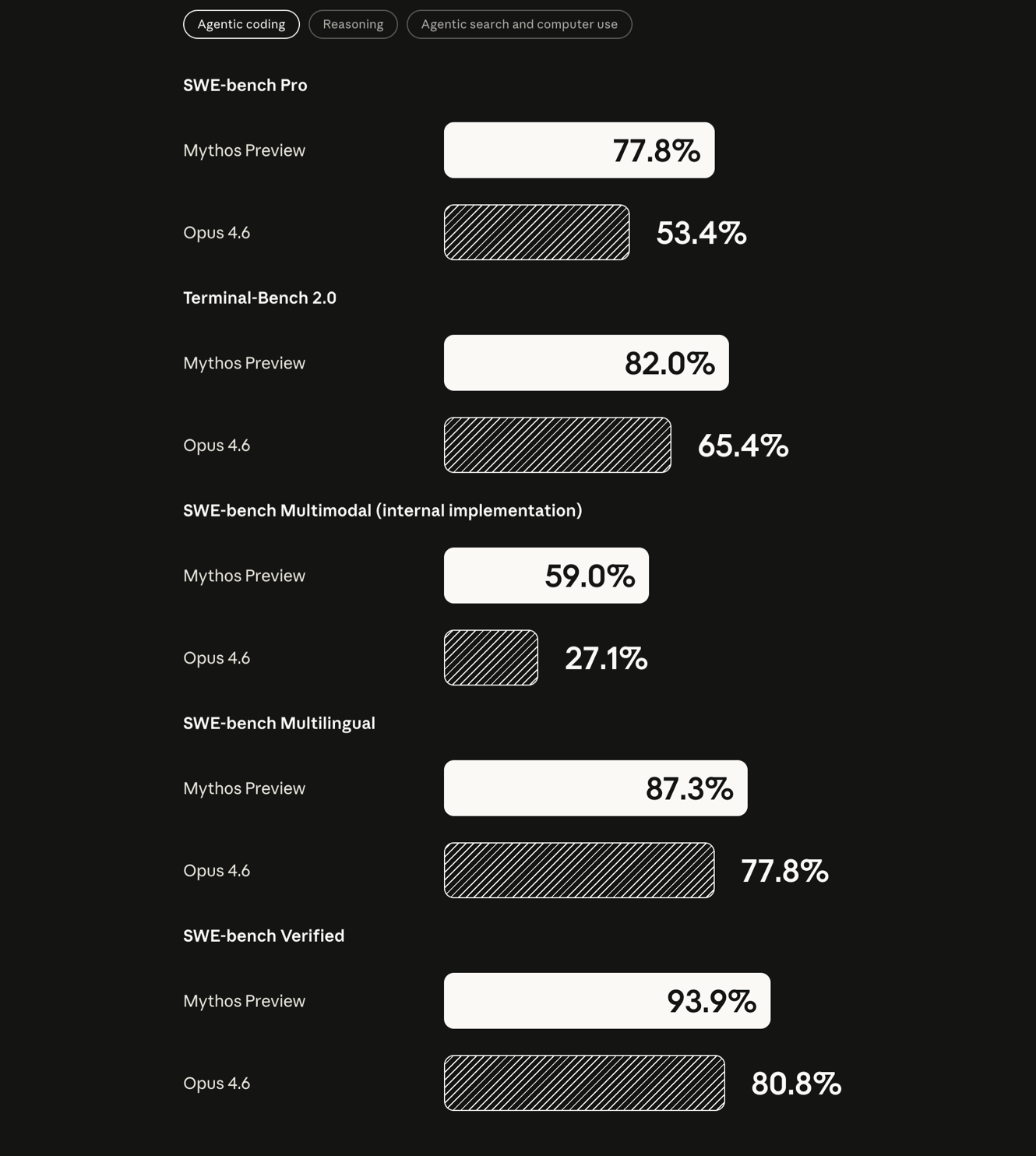

Right up front, I want to address one element: how much better Mythos is than Anthropic’s current top-of-the-line Opus 4.6.

As Ben Thompson of Stratechery summarizes the performance differences:

“The big gain here is a massive improvement to the base model; while Anthropic hasn’t released details of Mythos’ training, this is likely the first frontier lab base model that was trained on Nvidia’s Grace Blackwell NVL72 architecture. It will be very interesting to compare these numbers — particularly the relative improvement — to OpenAI’s next “Spud” model. The first new model of this latest generation was actually Gemini 3, but Google’s post-training and harness simply isn’t competitive. Mythos is the first real proof point that there are still returns to pre-training scale for state-of-the-art models.”

And the company is charging for that difference.

“It’s also extremely expensive: Mythos Preview will cost $25/$125 per million input/output tokens, 5x Opus 4.6’s $5/$25, which is already substantially more expensive than GPT-5.4 ($2.5/$15). This raises an obvious question: how much of Anthropic’s reluctance to make Mythos widely available is due to security concerns, as opposed to the more prosaic reality that Anthropic simply doesn’t have enough compute?”
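To make the quoted pricing gap concrete, here is a back-of-the-envelope sketch in Python. The per-million-token prices come from the quote above. The 10M-input/2M-output workload and the `job_cost` helper are hypothetical and only for illustration. They are not figures from Anthropic or OpenAI.

```python
# Back-of-the-envelope cost comparison using the list prices quoted above.
# The workload size below is a hypothetical assumption for illustration only.

PRICES_PER_MILLION = {
    # model: (input $ per 1M tokens, output $ per 1M tokens)
    "Mythos Preview": (25.0, 125.0),
    "Opus 4.6": (5.0, 25.0),
    "GPT-5.4": (2.5, 15.0),
}


def job_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Return the dollar cost of one job at the quoted list prices (hypothetical helper)."""
    in_price, out_price = PRICES_PER_MILLION[model]
    return (input_tokens / 1_000_000) * in_price + (output_tokens / 1_000_000) * out_price


if __name__ == "__main__":
    # Hypothetical job: 10M input tokens and 2M output tokens.
    for name in PRICES_PER_MILLION:
        print(f"{name}: ${job_cost(name, 10_000_000, 2_000_000):,.2f}")
```

For this assumed job, the sketch prints $500.00 for Mythos Preview, $100.00 for Opus 4.6, and $55.00 for GPT-5.4. Mythos’ input and output prices are each exactly 5x Opus 4.6’s, so the 5x gap holds for any mix of input and output tokens.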

He also highlights Anthropic and Dario Amodei’s long-time habit of using the security and safety threats of its new models to burnish its reputation for the same:

“I also believe that Anthropic has a multi-year habit of disaster-porn-as-marketing-tool. To that end, I suspect we’ll get a distilled version of Mythos that is viable to serve whenever OpenAI shows up with Spud; Amodei has shown that, despite it all, he is a capitalist, particularly when the competition is OpenAI.”

Distillation matters here, especially as Chinese AI companies are suspected of distilling (i.e., training their models off the outputs of bigger models) for their own next-generation AI models, which are mostly open-sourced.

Ben continues on the Anthropic security burnishing issue:

“At the same time, the conflict I highlighted in that Article is looming, and the extent to which Anthropic seems to be inviting its apotheosis through its habit of casting itself as the world’s bearer of doom suggests the company still isn’t taking this particular alignment danger seriously.”

All that said, on to the cybersecurity issues around Mythos, and thus the Glasswing alliance.

The NY Times’ Kevin Roose covers it in “Anthropic Claims Its New A.I. Model, Mythos, Is a Cybersecurity ‘Reckoning’”:

“The company said on Tuesday that it was holding back on releasing the new technology but was working with 40 companies to explore how it could prevent cyberattacks.”

“Anthropic, the artificial intelligence company that recently fought the Pentagon over the use of its technology, has built a new A.I. model that it claims is too powerful to be released to the public.”

“Instead, Anthropic said on Tuesday, it will make the new model — known as Claude Mythos Preview — available to a consortium of more than 40 technology companies, including Apple, Amazon and Microsoft, which will use the model to find and patch security vulnerabilities in critical software programs.”

“Anthropic said it had no plans to release its new technology more widely, but was announcing the new model’s capabilities in one area in particular — identifying security vulnerabilities in software — in an effort to sound the alarm over what the company believes will be a new, scarier era of A.I. threats.”

“The goal is both to raise awareness and to give good actors a head start on the process of securing open-source and private infrastructure and code,” Jared Kaplan, Anthropic’s chief science officer, said in an interview.”

And discussed Glasswing:

“The coalition, known as Project Glasswing, will include some of Anthropic’s competitors in A.I., such as Google, as well as hardware providers like Cisco and Broadcom, and organizations that maintain critical open-source software, such as the Linux Foundation. Anthropic is committing up to $100 million in Claude usage credits to the effort.”

“Logan Graham, the head of an Anthropic team that tests new models for dangerous capabilities, called the new model “the starting point for what we think will be an industry change point, or reckoning, with what needs to happen now.”

The crux of these developments is the acceleration of cybersecurity ‘whack-a-mole’ responses across the industry, as the NY Times goes on to describe:

“Elia Zaitsev, the chief technology officer of CrowdStrike, a cybersecurity firm with access to the new model through Project Glasswing, said in a statement accompanying Anthropic’s announcement that the model “demonstrates what is now possible for defenders at scale, and adversaries will inevitably look to exploit the same capabilities.”

“What once took months now happens in minutes with A.I.,” Mr. Zaitsev said.”

“Project Glasswing takes its name from the glasswing butterfly, Mr. Kaplan said, which uses transparent wings to hide in plain sight. Similarly, he said, many of today’s most critical software programs contain bugs and vulnerabilities that have existed in the open for years, but were buried in such complex technical systems that no human ever found them.”

And these model evolutions are just getting started, forcing both good guys and bad guys to respond:

“According to Mr. Kaplan, the cybersecurity capabilities of Claude Mythos Preview are not a result of special training. Rather, they are just one of many areas in which the model is better than previous ones. He predicted that similar cybersecurity capabilities would exist in other models soon. As that happens, he said, the arms race between hackers and the companies racing to defend their systems will only escalate.”

“As the slogan goes, this is the least capable model we’ll have access to in the future,” he said.”

All ominous and attention-grabbing stuff. It arrives as Anthropic sees a record ARR of over $30 billion, lapping sibling rival OpenAI, and as both race towards mega-IPOs later this year.

Prepping their finances accordingly.

So worth unpacking further.

TechCrunch lays it out in “Anthropic debuts preview of powerful new AI model Mythos in new cybersecurity initiative”:

“Anthropic on Tuesday released a preview of its new frontier model, Mythos, which it says will be used by a small coterie of partner organizations for cybersecurity work. In a previously leaked memo, the AI startup called the model one of its “most powerful” yet.”

“The model’s limited debut is part of a new security initiative, dubbed Project Glasswing, in which 12 partner organizations will deploy the model for the purposes of “defensive security work” and to secure critical software, Anthropic said. While it was not specifically trained for cybersecurity work, the model will be used to scan both first-party and open source software systems for code vulnerabilities, the company said.”

The company is basing its caution on early signals from the new version:

“Anthropic claims that, over the past few weeks, Mythos identified “thousands of zero-day vulnerabilities, many of them critical.” Many of the vulnerabilities are one to two decades old, the company added.”

“Mythos is a general-purpose model for Anthropic’s Claude AI systems that the company claims has strong agentic coding and reasoning skills. Anthropic’s frontier models are considered its most sophisticated and high-performance models, designed for more complex tasks, including agent-building and coding.”

So who’s involved in the next steps with Mythos?

“The partner organizations previewing Mythos as part of Project Glasswing include Amazon, Apple, Broadcom, Cisco, CrowdStrike, the Linux Foundation, Microsoft, and Palo Alto Networks. As part of the initiative, these partners will ultimately share what they’ve learned from using the model so that the rest of the tech industry can benefit from it. The preview is not going to be made generally available, Anthropic said, though 40 organizations will gain access to the Mythos preview aside from the partnership.”

And it seems Anthropic is being proactive with the government, despite recent kerfuffles over military applications.

“Anthropic also claims that it has engaged in “ongoing discussions” with federal officials about the use of Mythos, although one would have to imagine that those discussions are complicated by the fact that Anthropic and the Trump administration are currently locked in a legal battle after the Pentagon labeled the AI lab a supply-chain risk over Anthropic’s refusal to allow autonomous targeting or surveillance of U.S. citizens.”

These developments follow a series of leaks of Anthropic IP, the scope of which is slowly coming into focus:

“News of Mythos was originally leaked in a data security incident reported last month by Fortune. A draft blog about the model (then called “Capybara”) was left in an unsecured cache of documents available on a publicly inspectable data lake. The leak, which Anthropic subsequently attributed to “human error,” was originally spotted by security researchers. “‘Capybara’ is a new name for a new tier of model: larger and more intelligent than our Opus models — which were, until now, our most powerful,” the leaked document said, adding later that it was “by far the most powerful AI model we’ve ever developed,” according to the report.”

“In the leak, Anthropic claimed that its new model far exceeded performance areas (like “software coding, academic reasoning, and cybersecurity”) met by its currently public models and that it could potentially pose a cybersecurity threat if weaponized by bad actors to find bugs and exploit them (rather than fix them, which is how Mythos will be deployed).”

“Last month, the company accidentally exposed nearly 2,000 source code files and over half a million lines of code via a mistake it made in the launch of version 2.1.88 of its Claude Code software package. The company then accidentally caused thousands of code repositories on GitHub to be taken down as it attempted to clean up the mess.”

Overall, this puts the ball into OpenAI’s court, along with the other LLM AI peers like Meta, Elon’s xAI, and others.

As mentioned above, they now have to articulate the release strategy for their upcoming model, reportedly with ‘extreme reasoning’. That is especially pressing as OpenAI approaches a critical point in this AI Tech Wave: its anticipated IPO.

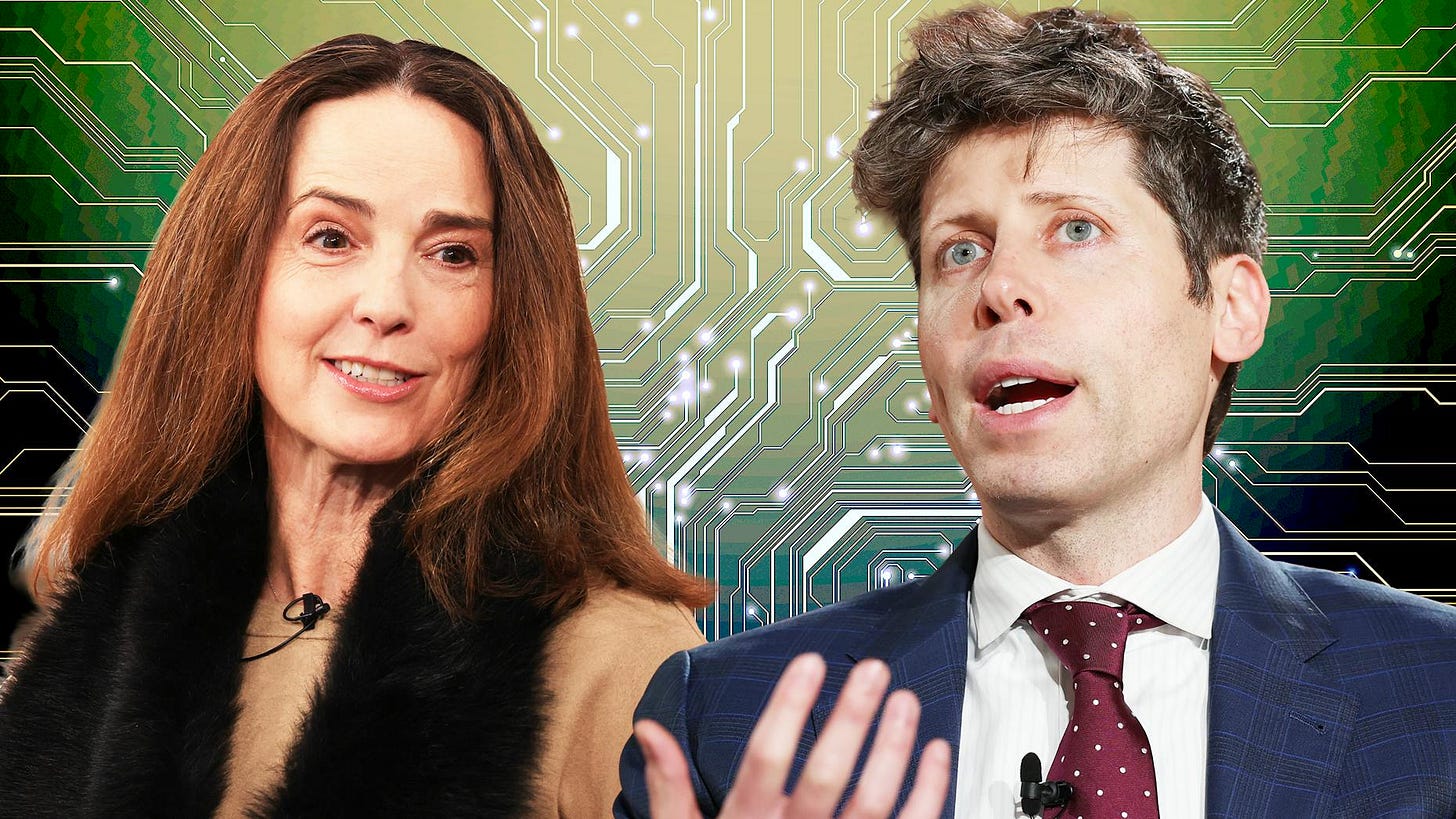

On the IPO front, reports indicate some daylight between founder/CEO Sam Altman and CFO Sarah Friar.

Anthropic has begun making its moves in this AI Tech Wave. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)