AI: Microsoft doubles up on LLM AI bundling. RTZ #1043

Two don’t always come for the price of one.

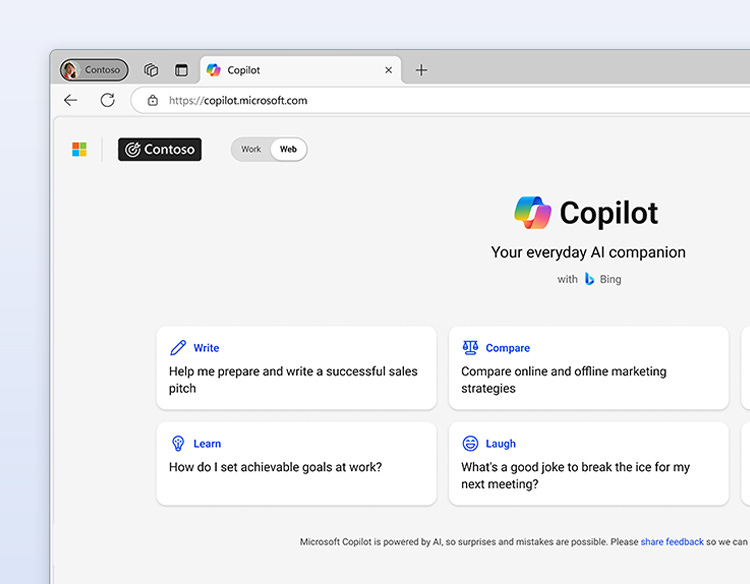

Microsoft is leveraging its close investments and partnerships with not just one, but TWO of the leading LLM AI companies. It’s now bundling both OpenAI’s and Anthropic’s LLM AI models and products into the Copilot Office 365 $99 “Superbundle” I wrote about a few days ago.

This time there’s a new wrinkle called ‘Critique’. It’s a clever way to have the two leading AI models check each other’s work, and presumably deliver better results to whatever share of Microsoft Office 365’s 450+ million subscribers worldwide sign up for the bundle. It’s likely a taste of things to come in this AI Tech Wave, as more core LLM AI products are bundled to work together.

Axios lays this out in “One AI isn’t enough anymore”:

“Microsoft has revamped one of its AI research tools to use models from both OpenAI and Anthropic, the clearest sign yet that the future of AI may be multi-model.”

“Why it matters: AI companies are increasingly pairing models together — having them cross-check and evaluate — in a bid to boost accuracy and reduce errors that any one model might miss.”

“Driving the news: The software giant is taking advantage of multiple models within its Microsoft 365 Copilot Researcher.”

“A new ‘Critique’ layer uses Anthropic’s Claude to review answers generated by OpenAI’s model to improve accuracy before a user sees the response.”

“The company says that approach enabled the research agent to score 13.8% higher on the DRACO benchmark, an industry standard for deep research quality.”

“Another new option, called Model Council, allows users to see a side-by-side comparison of responses from different models.”

Microsoft expects to push the trend further, with more models to come:

“What they’re saying: ‘It’s becoming very clear to us that there will be many models,’ Microsoft executive VP Charles Lamanna told Axios. ‘Come summertime there will be many more models than just these two inside of Copilot.’”

“The big picture: AI companies are experimenting with several different ways to use multi-models to complete tasks.”

“When you prompt ChatGPT, Copilot or other models, they will often use a smaller classifier model to route you to the model most appropriate for the task.”

“Perplexity has long allowed its users to choose from multiple models and see responses side-by-side.”

“Anthropic uses a self-critique step mid-generation to catch errors before surfacing a final response from Claude.”
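The classifier-routing idea in the first of these quotes can be sketched in a few lines of Python. This is a toy illustration, not how OpenAI or Microsoft actually route requests: a keyword scorer stands in for the small learned classifier, and the model names (`small-general-model` and so on) are hypothetical placeholders.

```python
from dataclasses import dataclass

# Hypothetical model tiers; the names are placeholders, not real model identifiers.
MODELS = {
    "fast": "small-general-model",
    "reasoning": "large-reasoning-model",
    "code": "code-specialist-model",
}

# Toy stand-in for a learned classifier: keyword cues for each task type.
CUES = {
    "code": ("python", "function", "bug", "compile", "stack trace"),
    "reasoning": ("prove", "analyze", "compare", "research", "step by step"),
}


@dataclass
class Route:
    task: str
    model: str


def classify(prompt: str) -> str:
    """Return the task whose cues appear most often, or 'fast' if none match."""
    text = prompt.lower()
    scores = {task: sum(cue in text for cue in cues) for task, cues in CUES.items()}
    best = max(scores, key=scores.get)
    return best if scores[best] > 0 else "fast"


def route(prompt: str) -> Route:
    """Pick the model for a prompt based on the classifier's task label."""
    task = classify(prompt)
    return Route(task=task, model=MODELS[task])


if __name__ == "__main__":
    for prompt in [
        "What's the capital of France?",
        "Fix this Python function, it throws a stack trace",
        "Analyze and compare these two vendor contracts",
    ]:
        r = route(prompt)
        print(f"{r.task:>9} -> {r.model}")
```

The design point is that the router itself is cheap: a small model (here, a trivial scorer) decides once, and only the chosen model does the expensive work.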

For Microsoft, the move has the additional benefit of better post-OpenAI optics, a topic I’ve discussed at length.

“Between the lines: The multi-model system has an added benefit for Microsoft, which is looking to show it isn’t overly reliant on OpenAI.”

“With the frontier labs frequently leapfrogging one another, Lamanna said businesses are interested in AI tools that can easily change which models are running under the hood.”

This does mean higher costs, making it two for the price of MORE:

“Yes, but: Using multiple models on a single query can lead to increased costs and slower response times.”

“Microsoft’s Model Council, for example, costs roughly 2.5 times as much as using a single model, while the Critique approach costs about 20% more.”

“That cost isn’t directly passed on given Copilot is a subscription service, but it does inform where Microsoft decides to use multiple models versus relying on a single algorithm.”
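To make that trade-off concrete, here is a rough Python sketch that applies the multipliers Axios cites (about 2.5x for Model Council, about 1.2x for Critique) to a mix of queries. The per-query base cost and the query mix are hypothetical inputs for illustration, not Microsoft figures.

```python
# Approximate cost multipliers relative to a single-model answer, as reported by Axios.
MULTIPLIER = {"single": 1.0, "critique": 1.2, "council": 2.5}


def blended_cost(query_mix: dict[str, int], base_cost: float) -> float:
    """Total cost of a query mix, given a hypothetical cost for one single-model answer."""
    return sum(count * MULTIPLIER[mode] * base_cost for mode, count in query_mix.items())


def overhead(query_mix: dict[str, int]) -> float:
    """Extra cost versus answering every query with one model (0.1 means +10%)."""
    total_queries = sum(query_mix.values())
    return blended_cost(query_mix, 1.0) / total_queries - 1.0


if __name__ == "__main__":
    # Hypothetical mix: mostly single-model, a slice with Critique, a few with Council.
    mix = {"single": 800, "critique": 150, "council": 50}
    print(f"cost units: {blended_cost(mix, base_cost=1.0):.1f}")
    print(f"overhead vs. single model: {overhead(mix):.1%}")
```

Even a small share of Council queries moves the blended cost noticeably, which is presumably the kind of arithmetic behind deciding where multi-model modes are worth it inside a flat-fee subscription.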

Microsoft, of course, is also building out its own models to complement these new AI product bundles:

“What we’re watching: Microsoft is also building more homegrown models, and Lamanna said those models might show up first working in conjunction with outside models rather than as a full replacement.”

“‘It’ll be in one of these ensemble experiences,’ he said.”

As growth analyst Aakash Gupta explains further:

“Microsoft just made Anthropic the quality inspector on OpenAI’s assembly line. And the reason has nothing to do with research quality.”

“M365 Copilot has 450 million commercial subscribers. 15 million pay for it. That’s a 3.3% conversion rate after two years and $150 billion in cumulative AI infrastructure spending.”

“The core problem is trust. When employees get access to both Copilot and ChatGPT, 76% choose ChatGPT. The hallucination risk on business documents is the single biggest objection CFOs raise at renewal. Microsoft needed a way to say ‘this output has been verified’ without building the verification model themselves.”

“So they built Critique. GPT writes the research report. Claude reviews it for accuracy, completeness, and citation integrity before the user sees it. One model generates, another audits. Microsoft says it beats every standalone deep research tool on the DRACO benchmark by 13.8%.”
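The generate-then-audit loop Gupta describes can be sketched generically in Python. This is an assumption-laden illustration, not Microsoft’s implementation: the `generate` and `critique` callables are hypothetical stand-ins for calls to two different model providers, and the revise-until-approved loop with a `max_revisions` cap is a common pattern rather than something Microsoft has described.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple


@dataclass
class Review:
    approved: bool
    issues: List[str] = field(default_factory=list)


# Hypothetical signatures: in practice each would wrap a call to a different model provider.
Generator = Callable[[str, List[str]], str]  # (question, reviewer feedback) -> draft
Critic = Callable[[str, str], Review]        # (question, draft) -> review


def generate_with_critique(
    question: str, generate: Generator, critique: Critic, max_revisions: int = 2
) -> Tuple[str, List[Review]]:
    """Draft with one model, audit with another, and revise until approved.

    Every draft returned has been reviewed; history holds one Review per audit.
    """
    draft = generate(question, [])
    history: List[Review] = []
    for attempt in range(max_revisions + 1):
        review = critique(question, draft)
        history.append(review)
        if review.approved or attempt == max_revisions:
            break
        draft = generate(question, review.issues)  # revise using the critic's notes
    return draft, history


# Toy stubs (hypothetical) so the sketch runs without any API keys.
def toy_generator(question: str, feedback: List[str]) -> str:
    draft = f"Answer to: {question}"
    if any("citation" in issue for issue in feedback):
        draft += " [source: annual report]"
    return draft


def toy_critic(question: str, draft: str) -> Review:
    if "[source" in draft:
        return Review(approved=True)
    return Review(approved=False, issues=["missing citation for key claim"])


if __name__ == "__main__":
    answer, reviews = generate_with_critique("What was Q3 revenue?", toy_generator, toy_critic)
    print(answer)
    print([r.approved for r in reviews])
```

Because the two models sit behind plain callables, the bidirectional workflow (Claude drafting, GPT reviewing) would amount to passing the two wrappers in the opposite roles.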

And of course they get to charge more for all the additional benefits of Copilot.

The question is whether customers will bite:

“The wildest part: Microsoft is paying Anthropic to make OpenAI’s output trustworthy enough to charge $30/month for. And Anthropic is taking the money because being the default auditor inside 450 million enterprise seats is worth more than any benchmark win.”

“Microsoft said the workflow will eventually be bidirectional. Claude drafting, GPT reviewing. That tells you the endgame. The model becomes a commodity. The orchestration layer is the product. And Microsoft owns the orchestration layer.”

This is a trend we’re going to see a lot more of in this AI Tech Wave going forward.

Expect more leading LLM AI models, both closed and open, bundled together in ‘orchestration layer’ applications that help end users do complex things with AI models and agents.

In a secure and trusted way. And charge extra for the privilege. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)