AI: Nvidia GTC 2026 Jensen Keynote roadmaps $1 trillion in AI chip sales by 2027. RTZ #1028

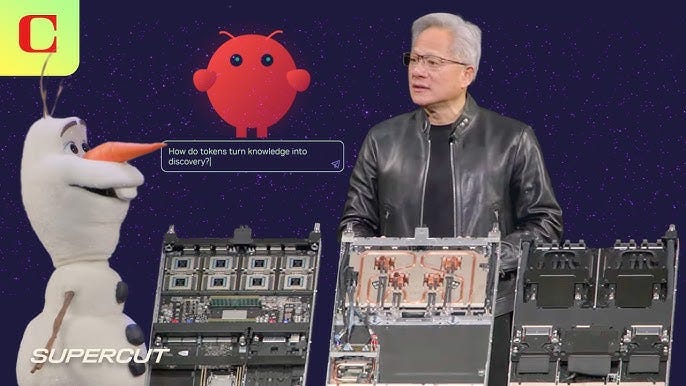

Nvidia’s GTC (GPU Technology Conference) 2026 kicked off today with the usual chock-full keynote by founder/CEO Jensen Huang.

Just as in prior years, the event was attended and watched with almost as much anticipation and fervor as a Steve Jobs-delivered WWDC (Worldwide Developers Conference) keynote in his day.

It’s as big a deal in this AI Tech Wave as Apple’s WWDC keynotes have been for much of the company’s 50-year history. And like the Super Bowl, it even has its own ‘pregame’ show leading into the keynote, which introduces the rest of the week’s events for thousands of attendees in San Jose, CA. Let’s unpack this year’s keynote highlights, and calibrate the AI tech roadmap ahead led by Nvidia.

WSJ underlines the flagship takeaway by Jensen in “Nvidia’s CEO Projects $1 Trillion in AI Chip Sales as New Computing Era Begins”.

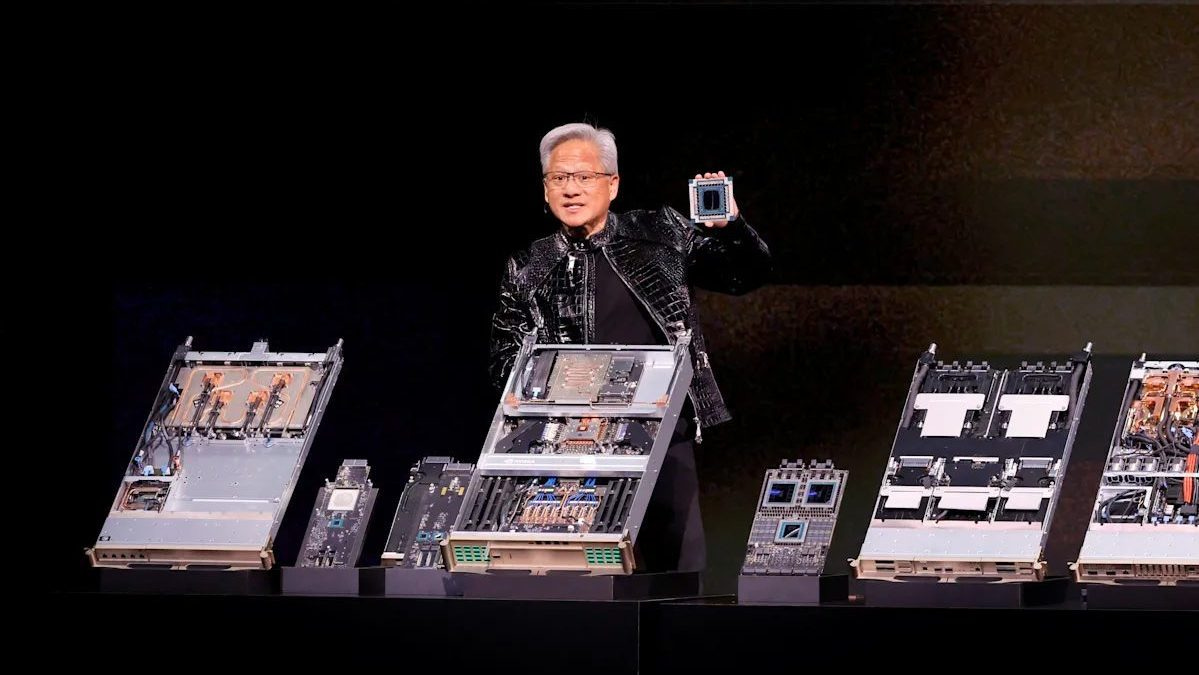

“Nvidia Chief Executive Jensen Huang unveiled the Nvidia Groq 3 LPX rack, a new server designed for AI inference, at the GTC conference.”

“Huang updated Nvidia’s sales guidance, expecting to sell $1 trillion worth of Blackwell and Rubin chips by the end of 2027.”

“Nvidia expanded its autonomous driving business, adding four new partners for its robotaxi computing system.”

Beyond the quick takeaways, some additional highlights:

“Chief Executive Jensen Huang ushered in the Age of Inference at the company’s annual GTC conference Monday, outlining a huge array of new products, both in hardware and software, geared toward running AI models more quickly and efficiently.”

There’s been a big debate over Nvidia’s ability to adapt its AI GPU and AI Factory strategy beyond AI Training to AI Inference. I’ve long stated that this has been an artificial media straw man.

In reality, Nvidia’s product strategy has addressed this head-on, helped by its $20 billion acquihire of inference chip company Groq a few months ago. That deal has already expanded Nvidia’s product roadmap to span AI training, inference, and everything in between.

“In front of a crowd of more than 30,000 at the SAP Center, home of the San Jose Sharks hockey team, Huang unveiled Nvidia’s new flagship product, which he said would revolutionize inference, the form of AI computing that allows models to respond to user queries.”

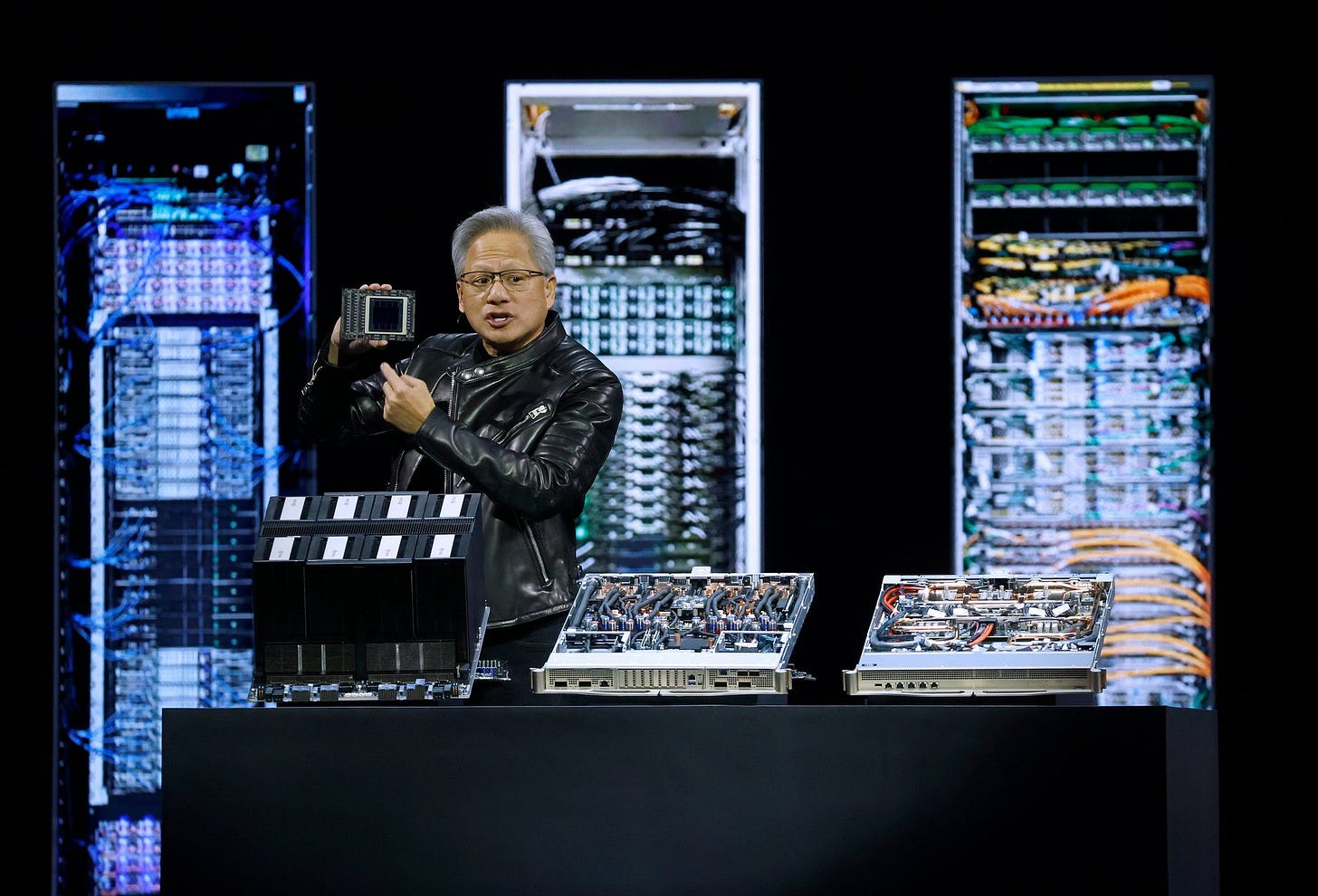

“For years, Nvidia has dominated the business of selling graphics processing units, or GPUs, the powerful chips used to train most large AI models. But over the last year, as AI companies have moved quickly to try to monetize their models and the AI tools built on top of them, customers have asked for better chips that are customized for inference computing, rather than training.”

“Known as the Nvidia Groq 3 LPX rack, Nvidia’s new servers will combine 72 of Nvidia’s next-generation Vera Rubin servers with 256 of a new chip called an LPU, or language processing unit, developed by Groq, a startup whose top leadership team Nvidia acquired in a $20 billion technology licensing deal in December.”

“This is the AI future. This is where AI wants to go,” Huang said. “It’s designed for inference, this one workload. And this workload is what drives AI factories.”

The name of the game going forward in AI is generating intelligence tokens for inference at ever higher efficiency, in terms of tokens per watt and cost per token.

“Nvidia said that this new system can generate 700 million tokens—the basic unit of computing measurements—per second, a rate of computing that’s 350 times as fast as Nvidia’s second-to-last generation of GPUs, known as Hopper.”

“Huang has been signaling for most of the last year that Nvidia would increasingly focus on inference computing going forward. The company’s traditional GPUs have typically not been regarded as ideal for inference, because they consume a huge amount of energy and don’t come with enough attached memory to allow models to access the troves of data on which they were trained.”

A key driver of this efficiency is more memory in the AI systems:

“The new Vera Rubin and Groq combined servers will have 500 times as much high bandwidth memory as the Hopper generation, helping solve the memory bottleneck.”

“The inference inflection has arrived,” Huang said in his keynote speech. “This is the secret sauce.”

And then something to hold the financial markets’ attention:

“Huang said Nvidia expected to sell $1 trillion worth of Blackwell and Rubin chips by the end of 2027, updating earlier guidance that had the company selling $500 billion worth by the end of 2026.”

Also notable was Nvidia’s ‘NemoClaw’ partnership for a more secure version of OpenAI’s OpenClaw open-source AI agent platform.

Not to mention a ‘Space-1 Vera Rubin’ AI compute module to go with Elon Musk’s ambitions for AI Space Data Centers.

Nvidia also showcased an ever-expanding set of horizontal and vertical partnerships across industries, spanning a dizzying array of AI applications.

“Huang used the speech to announce a host of partnerships aimed at bolstering Nvidia’s business in designing “digital twins” and other types of simulations. The company also announced a coalition of software companies, including Cursor, Mistral, Perplexity, Reflection and Thinking Machines, aimed at making it easier to develop frontier open-sourced AI models.”

“The coalition’s work would put the development of enterprise software tools into hyperdrive, Huang said, helping speed the transformation of the world’s software-as-a-service industry into an agentic-AI-as-a-service industry.”

Relatively under-appreciated in my view is Nvidia’s growing footprint in the global ‘self-driving’ vehicle business, which now includes most of the world’s major automakers, BYD in China among them, along with expanded partnerships with ride-share leaders like Uber.

“Nvidia also announced an expansion of its autonomous driving business, including the addition of four new partners for Nvidia’s robotaxi computing system—China’s BYD and Geely Auto, Hyundai and Nissan. Using Nvidia’s chips and simulation models, the auto manufacturers are expected to significantly increase the number of autonomous ride-share vehicles on the road, Huang said.”

And then of course a stage full of over a hundred robots, both humanoid and not, leveraging Nvidia’s hardware and software AI platforms.

“Toward the end of the presentation, a robotic version of Olaf, the snowman from Disney’s “Frozen” animated franchises, designed by a partnership between Nvidia, DeepMind and Disney, ambled onstage and had a stilted conversation with Huang about the company’s Omniverse division, which develops physical AI products for things like robots.”

The whole keynote is worth a watch. Especially the closing animated recap video, featuring a cartoon Jensen around a campfire with robots singing a summary of the keynote, complete with guitar and harmonica.

I’ll have more on Nvidia’s AI product roadmap in coming posts. For now, it’s safe to say Nvidia continues to have an impressive AI roadmap for this AI Tech Wave ahead. AI Inference and all. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)