AI: Nvidia's dense announcements at GTC 2026 worth a trillion+ by 2027. (part 2) RTZ #1031

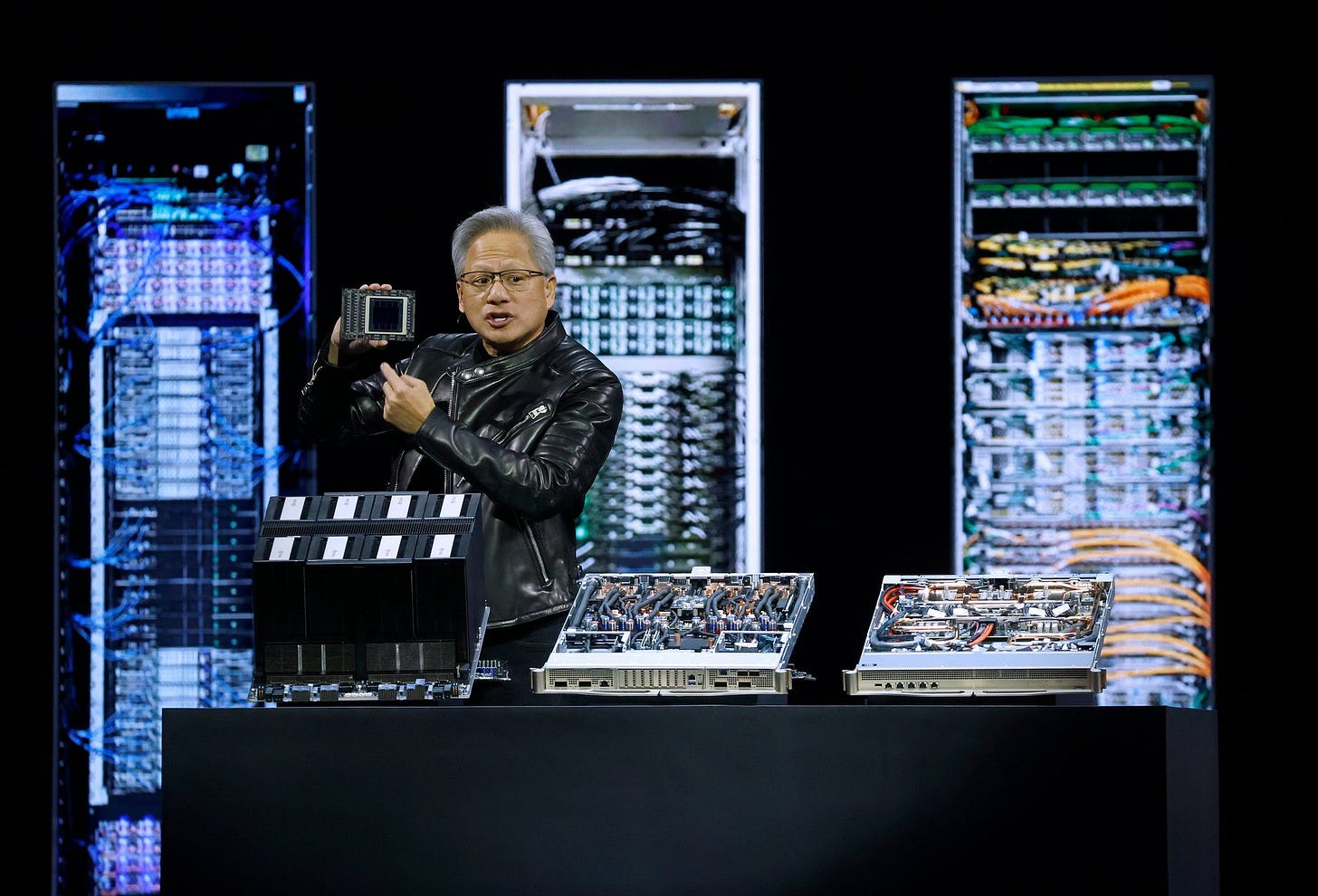

Talking with folks after Nvidia’s keynote kickoff at GTC 2026, it’s clear that many are having some difficulty seeing through the dense foliage of very technical announcements by founder/CEO Jensen Huang.

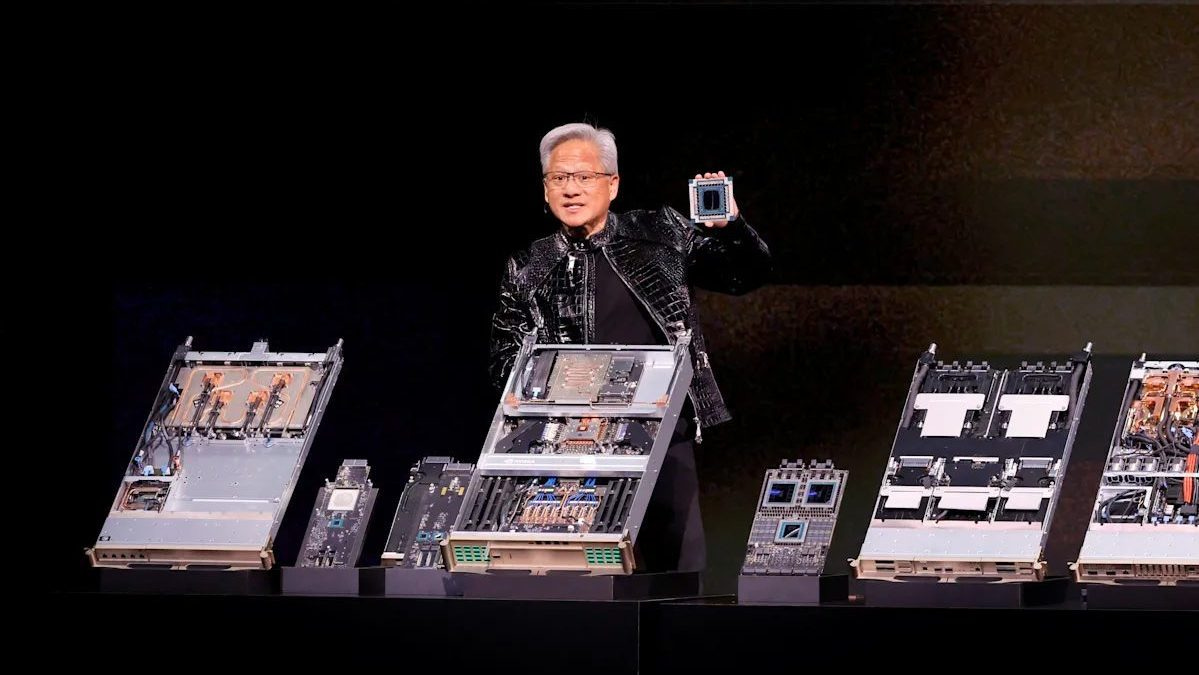

In my discussion of Nvidia founder/CEO Jensen Huang’s GTC 2026 keynote on its product roadmap, I highlighted the breadth and depth of improvements in the company’s hardware/software platforms, all of which are being upgraded at an unprecedented pace in terms of both token performance and economics.

Especially impressive was the productization of its recent $20 billion Groq AI inference technologies into products shipping this year. That is an unprecedented expansion of its industry-leading AI platforms in just the fourth year of this AI Tech Wave since OpenAI’s ChatGPT launched in late 2022.

Today, in part 2, I’m going through the implications of Nvidia’s GTC 2026 to help see through the dense foliage of the announcements. I’d like to flesh out how Nvidia’s product sets across training and inference, and into AI Agents both in the cloud and on local devices via its NemoClaw offerings, as well as its ever-improving ‘Physical AI’ platforms for self-driving cars and robots, are all leveling up faster than the markets may yet have digested.

Implicator AI lays out the Nvidia announcements well in “Nvidia Unveils Seven-Chip Vera Rubin Platform and $1 Trillion Order Book at GTC”:

“Jensen Huang held up a chip on Monday. The seven-chip platform behind it matters more than any single processor.”

“Nvidia’s GTC 2026 keynote revealed Vera Rubin, a platform of seven chips working as a single system: the Vera CPU, Rubin GPU, NVLink 6 Switch, ConnectX-9 SuperNIC, BlueField-4 DPU, Spectrum-6 switch, and the Groq 3 LPU. All seven are in production. Nvidia paid $20 billion for Groq barely two months ago. The chip already ships.”

Then the company announced additional product initiatives atop the new chip-driven foundations of its AI data center compute systems, the systems that drive the token factory:

“The real play sits above the silicon. NemoClaw, Nvidia’s enterprise distribution of the OpenClaw agent framework, installs with a single command and bundles Nemotron models, the Dynamo inference engine, and OpenShell security runtime. Cisco, CrowdStrike, Google, and Microsoft signed on. The dominant open-source agent platform now defaults to Nvidia hardware at every layer.”

All of that, along with Nvidia’s products on the self-driving car, robot and space fronts, drives Jensen’s conviction in doubling the company’s forecast of customer orders through year-end 2027, an announcement that the market is still digesting.

“Huang projects $1 trillion in purchase orders through 2027, double last year’s forecast. Roche deployed 3,500+ Blackwell GPUs for drug discovery. Uber plans Nvidia-powered robotaxis in 28 cities by 2028. The Space-1 Module delivers 25x more AI compute for orbital data centers.”

“Why This Matters:”

“Nvidia now controls every layer from silicon to agent platform, making the software lock-in harder to escape than the hardware dependency.”

“The Nemotron Coalition with Mistral, Cursor, LangChain, and Perplexity co-developing open models on DGX Cloud extends the CUDA ecosystem into model development itself.”

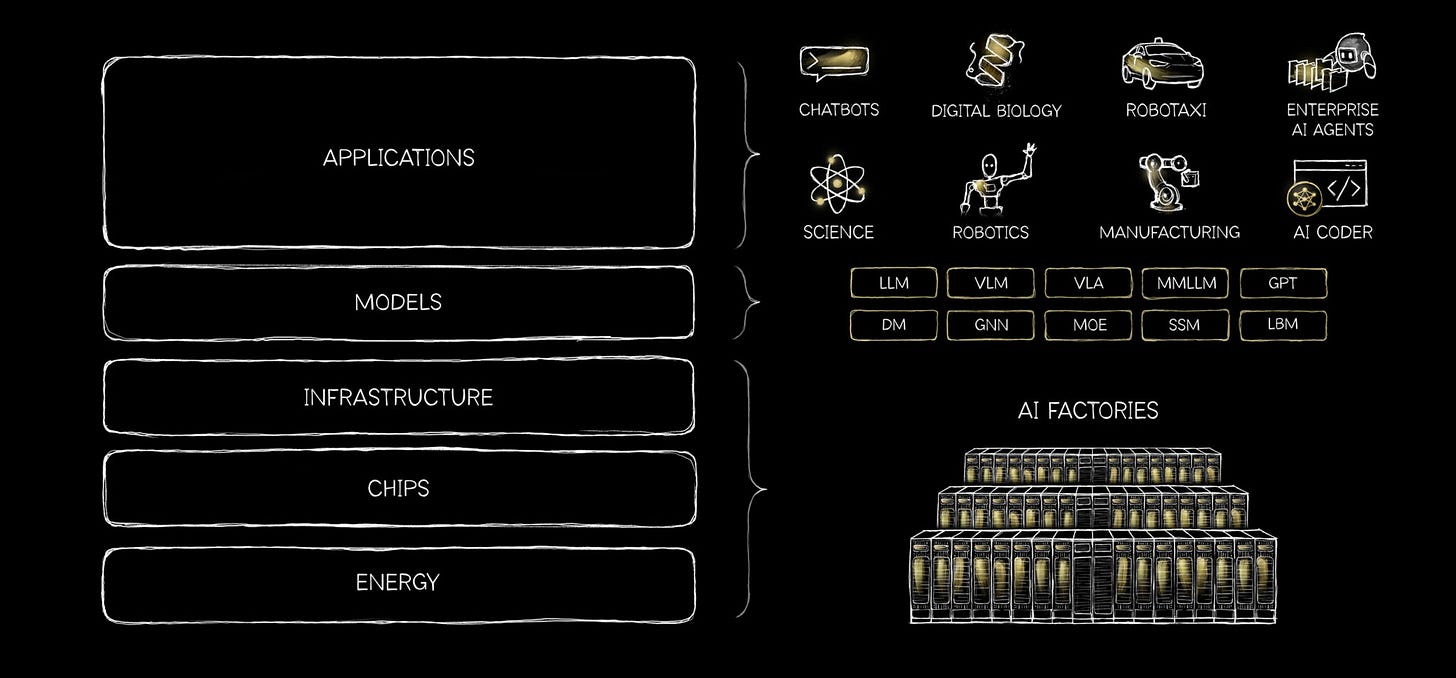

What they’re referring to above is how Nvidia constantly discusses its vertical stack integration, and how it executes up and down its ‘5 layer AI Cake’ stack.

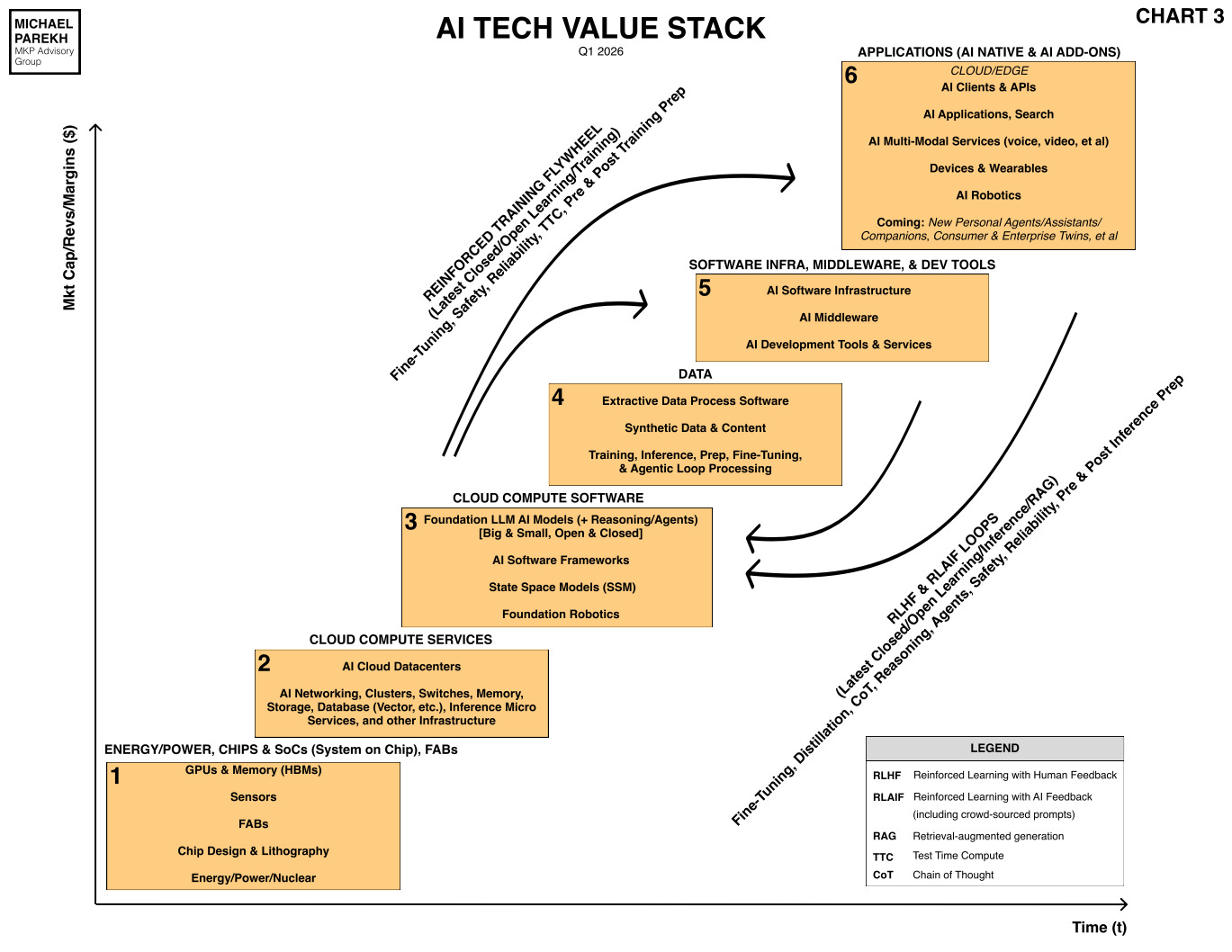

I like to extend it with my ‘6 layer AI Tech Stack staircase’, which includes the Data Box at layer 4, and the reinforcement training and inference learning loops that produce the inference tokens. It plots those layers across time on the x-axis and financial performance on the y-axis.

Nvidia’s product sets, both hardware and open source software libraries like CUDA and others, are increasingly at the heart of all AI deployments globally, both digital and physical.

And the company is providing tools at scale that then let its customers build incremental value on top of it all, at a breathtaking pace, up and down the supply chain. Meanwhile, Nvidia uses its supply chain leverage up and down the tech stacks as well, all the way to the chip foundries and memory makers like TSMC, SK Hynix, Samsung and others.

These new Nvidia product sets are set to turbo-charge new AI chatbot, reasoning and agentic offerings, plus a whole host of heretofore impossible AI applications and services for end users.

The hardware pictured above, and Nvidia’s ever-expanding open source AI software moats, are what give Jensen the conviction to cite a trillion dollars in AI demand for his products through 2027 at least.

That’s what makes the dense Nvidia announcements at the 2026 GTC so key to how this AI Tech Wave rolls on at least through the end of this decade. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)