AI: Nvidia's Reign accelerates in 2026 and beyond. RTZ #1009

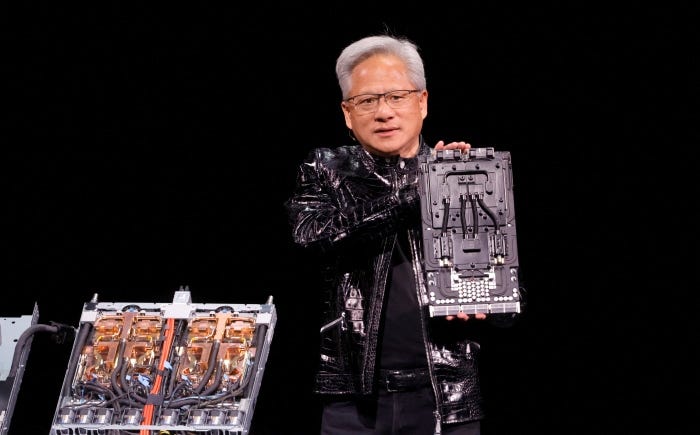

Once again, Nvidia reported better-than-expected Q4 and full fiscal year 2026 results. CEO Jensen Huang walked through how the company delivered a strong quarter and year-end across key financial and operational metrics, including revenues, EPS, free cash flow, and gross margins.

One quote that resonated: “Computing demand is growing exponentially — the agentic AI inflection point has arrived”.

And overall, he added “Blackwell sales are off the charts, and cloud GPUs are sold out”.

I summarized my take on the high level numbers on Stocktwits as follows:

Also encouraging for the markets were gross margins coming in in the mid-70% range, with guidance on that metric and on revenues remaining ahead of analyst expectations. All of that buoyed the stock a few percentage points.

As the WSJ summarized it in “Nvidia Beats Back Bubble Fears With Record $68 Billion in Sales in Fourth Quarter”:

“‘Computing has changed,’ CEO Jensen Huang said, citing agentic AI as driver of 94% profit surge.”

It’s useful to hear it in Jensen’s words from the piece:

““The simple way to think about it is, computing has changed,” Nvidia Chief Executive Jensen Huang said on Wednesday’s earnings call with investors. “In this new world of AI, compute equals revenues…I am certain at this point that we’ve reached the inflection point” where agentic AI is upending how business is done across the world, and selling AI tools is starting to generate real profits.”

“With each passing quarter, the pressure grows on Nvidia—which at a market value of nearly $5 trillion is the world’s largest publicly traded company—to beat Wall Street’s expectations.”

“It’s no longer enough for Nvidia to produce good quarterly results,” said Daniel Newman, chief executive of tech research and advisory firm Futurum Group. “They have to produce perfect quarterly results.”

It’s why I call it the AI “God-Stock”.

But it doesn’t mean it all comes easy.

The company had to execute through a formidable set of issues. Among them: half of its business comes from core ‘hyperscaler’ companies that are ‘Frenemies’ at best, working hard at building their own AI chips even as they use Nvidia’s for now:

“In recent months, investors have sent tech stocks on a wild ride as worries about the AI trade have risen, then subsided, only to resurface. Nvidia’s share price fell as low as $170.94 in mid-December but has recovered to over $196.”

“The biggest buyers of Nvidia’s chips include ChatGPT-maker OpenAI, Oracle, Microsoft, Meta Platforms, Google parent Alphabet and Amazon.com. In recent months, investors have grown concerned over OpenAI’s fundraising abilities and rising competition from other chip designers, including Google and makers of custom chips.”

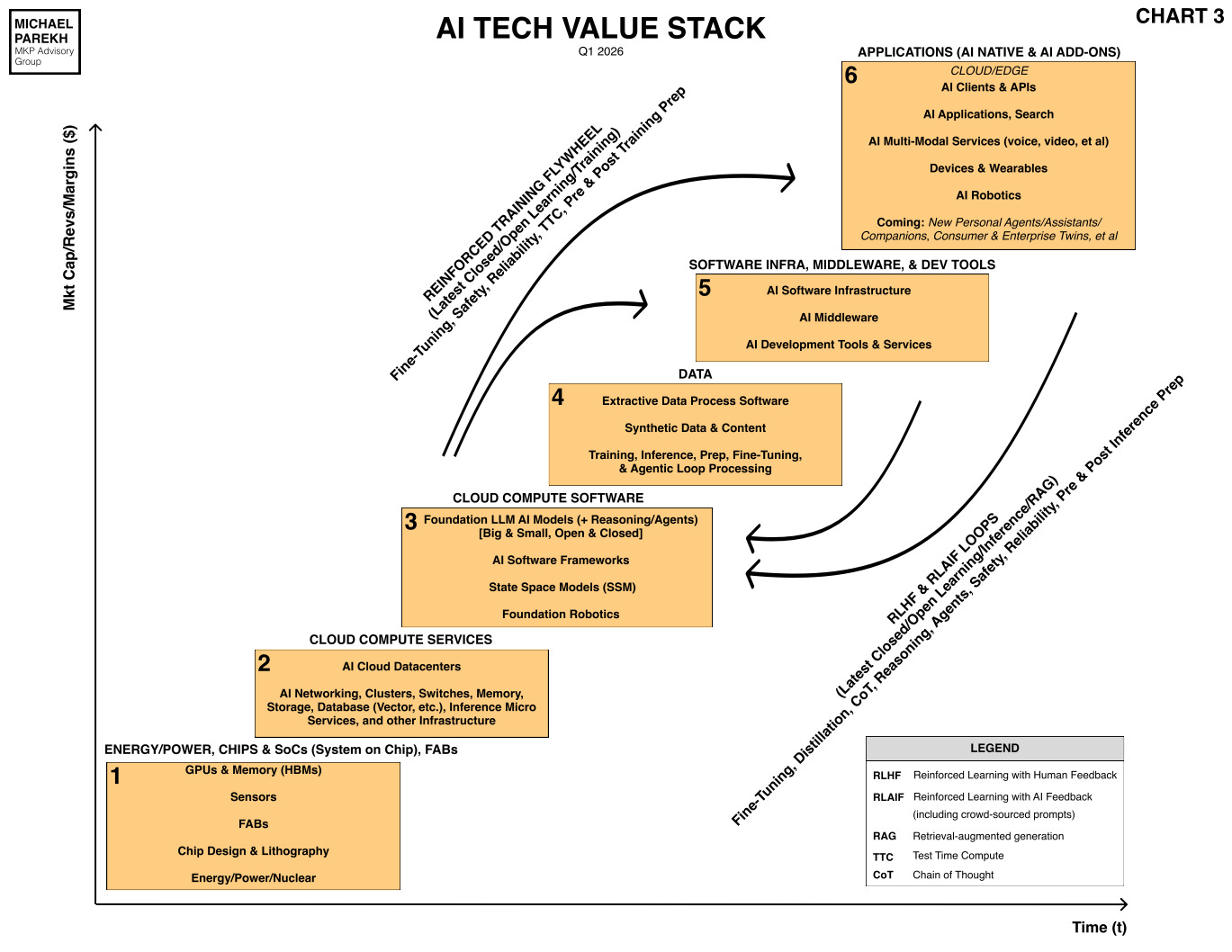

Then there’s the question of AI workloads shifting from training to inference. The top loops in my AI Tech Stack chart below need orders of magnitude more AI compute for the bottom inference computing loads:

“A factor that will likely shape Nvidia’s fortunes will be the transition from AI-model training to inference, the process by which AI tools respond to queries. Training and inference require different types of computing, and as a result, different hardware.”

“Nvidia has for years dominated the training market with its graphics processors, known as GPUs—powerful chips capable of performing billions of simple tasks simultaneously. As more tech companies deploy AI tools in the real world, demand is expected to shift from training to inference, which relies more heavily on central processing units, or CPUs, a simpler type of data center chip that more companies are capable of designing.”

“Last week, Nvidia announced a partnership with Meta that included its first major deployment of CPUs that aren’t connected in servers to GPUs, a sign that customers such as Meta need more inference-computing infrastructure to run their AI tools and other applications.”

And much is riding on this in investors’ minds, especially as they digest the $700+ billion in AI Data Center and Power spending by the ‘Mag 7s’ and the OpenAIs and Anthropics of the world this year alone. This question of course came up in the Q&A, and it’s useful to take a closer look at Nvidia’s response.

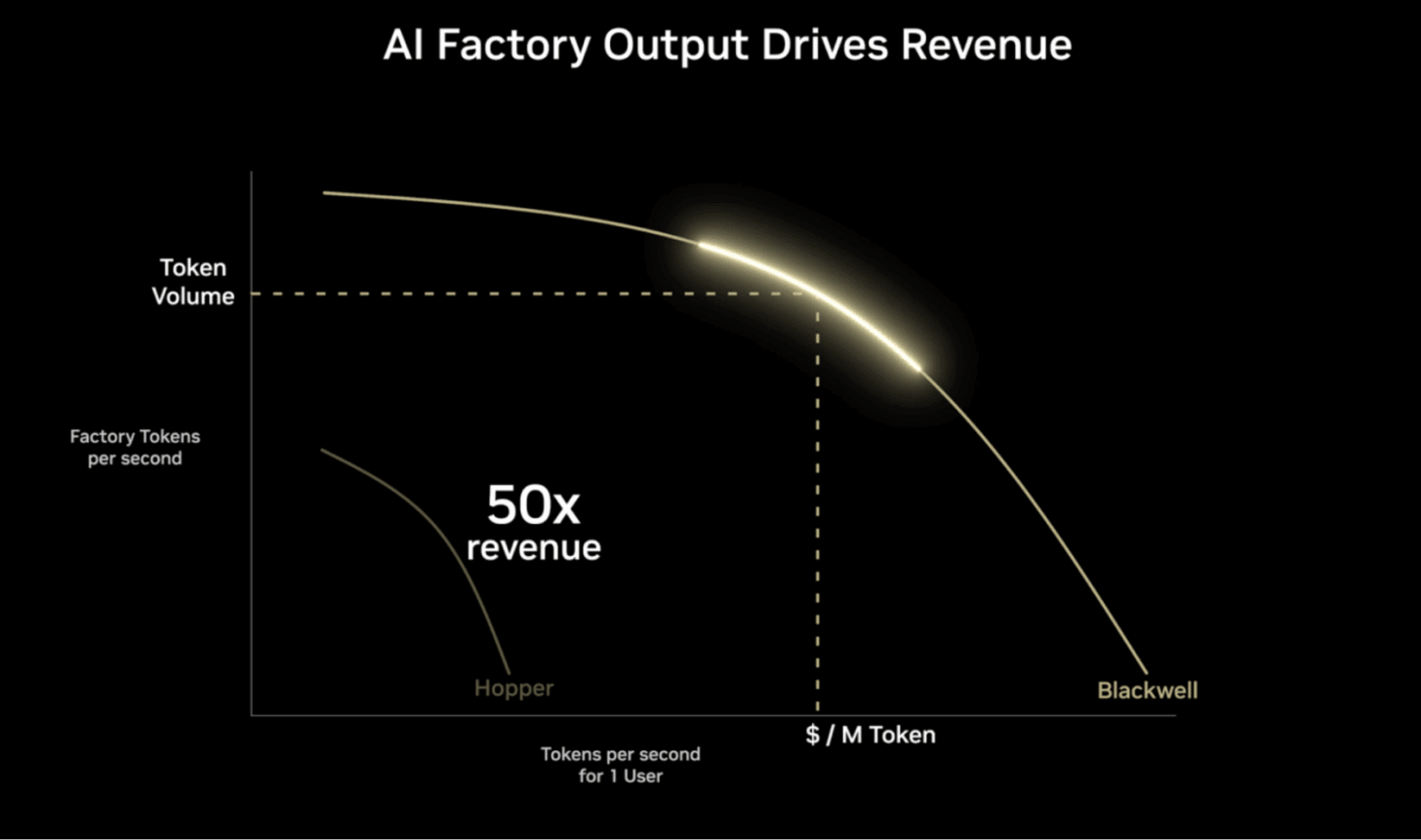

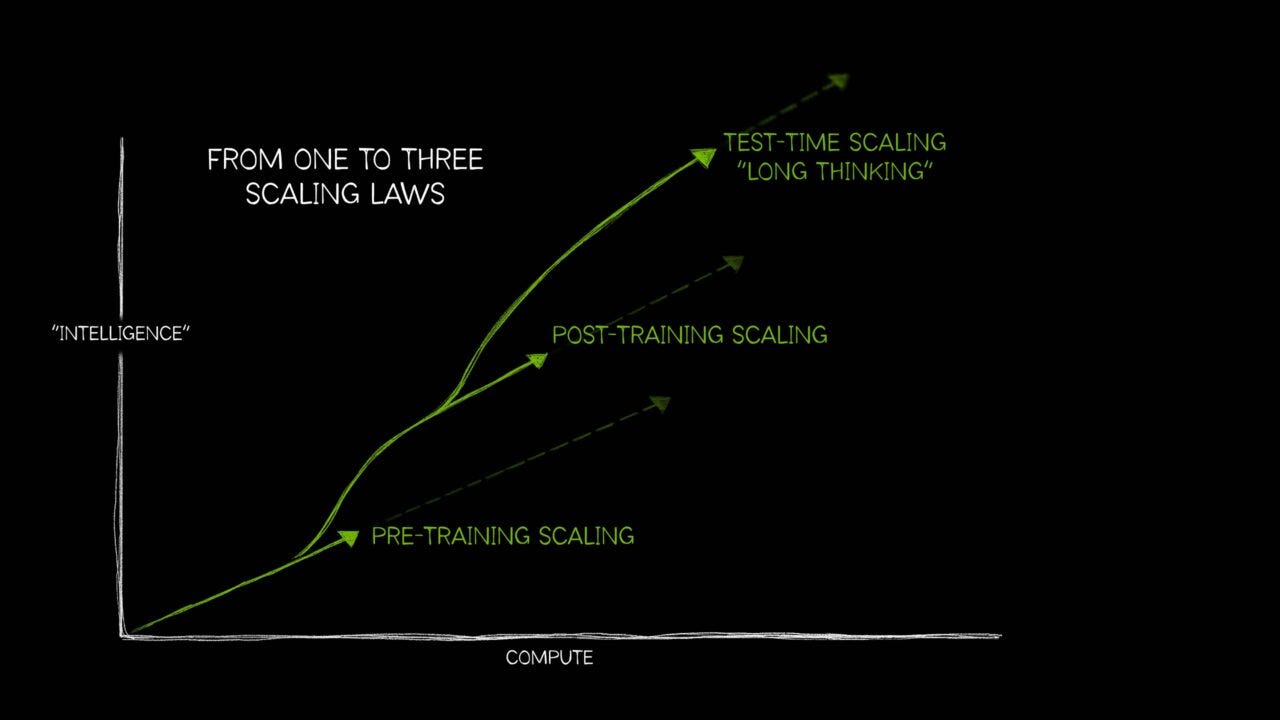

It highlighted a key variable Jensen cited repeatedly on the call: AI intelligence “tokens per unit of energy” of AI Compute.

This matters because Power is one of the critical inputs in short supply through 2030, alongside AI GPUs, memory chips, AI Talent and others I’ve discussed at length in these pages.
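To make the power constraint concrete, here is a minimal Python sketch of why tokens per unit of energy matters when power is the limiting input. The function name, the 100 MW site, and the baseline efficiency are hypothetical values chosen for illustration. Only the 50x multiplier echoes the generational gain Jensen cites on the call.

```python
def tokens_per_second(power_watts: float, tokens_per_joule: float) -> float:
    """Token throughput of a site whose only limit is its power budget.

    Watts are joules per second, so watts * tokens/joule = tokens/second.
    """
    return power_watts * tokens_per_joule


# Hypothetical inputs, for illustration only.
POWER_BUDGET_WATTS = 100e6        # assumed 100 MW site
BASELINE_TOKENS_PER_JOULE = 1.0   # assumed previous-generation efficiency
GENERATIONAL_GAIN = 50            # the "50 times" figure cited on the call

previous_gen = tokens_per_second(POWER_BUDGET_WATTS, BASELINE_TOKENS_PER_JOULE)
current_gen = tokens_per_second(
    POWER_BUDGET_WATTS, BASELINE_TOKENS_PER_JOULE * GENERATIONAL_GAIN
)

print(f"Previous generation: {previous_gen:,.0f} tokens/s")
print(f"Current generation:  {current_gen:,.0f} tokens/s")
print(f"Same power, {current_gen / previous_gen:.0f}x the tokens")
```

With the power budget held fixed, the only way to produce more tokens is to raise tokens per joule, which is why the metric doubles as a revenue lever.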

Here’s how Jensen describes the importance of that metric from the transcript of the earnings call, in the context of the broader AI Capex spend. All in response to a timely question from the Goldman Sachs Analyst:

“Jim Schneider: Jensen, you have previously outlined the potential to get to $3 to $4 trillion of data center CapEx by 2030, which implies a potential acceleration in growth rates, which you have guided to at least this next quarter. What are some of the key application areas most likely to drive that inflection? Is that physical AI, agentic, or something else? And do you still feel good about that $3 to $4 trillion envelope?”

“Jensen Huang: Let us back that up and just reason through it from a few different ways. So the first way is on first principles. The way that software is done in the future using AI is token-driven. And I think everybody talks about tokenomics and talks about data centers generating tokens, and inference is about generating tokens, and we generate tokens. You know, we are just talking about tokens. How NVIDIA Corporation’s NVLink 72 enabled us to generate tokens at 50 times better performance per unit energy than the previous generation. And so token generation is at the center of almost everything that relates to software in the future and relates to computing.”

Then he goes into the critical difference between software as it’s been done for decades, vs how it is going to be done going forward, constantly using AI tokens. A major shift for the first time since the transistor started to do its computing thing in 1947:

“If you look at the way we use computing in the past, however, the amount of computation demand for software in the past is a tiny fraction of what is necessary in the future. And AI is here. AI is not going to go back. AI is only going to get better from here. And so if you think about it, you said, okay, well, the world was investing about $300,000,000,000 to $400,000,000,000 a year in classical computing. And now AI is here. And the amount of computation necessary is a thousand times higher than the way we used to do computing. The computing demand is just a lot higher.”

“And so if we continue to believe there is value in it, and we will talk about that in a second, then the world will invest to produce that token. And so the amount of token generation capability that the world needs is a lot more than $700,000,000,000. And I am fairly confident that we are going to continue to generate tokens. We are going to continue to invest in compute capacity from this point out. And fundamentally because every single company depends on software, every software will depend on AI. And so every company will produce tokens. And that is the reason why I call them AI factories.”
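As a rough sanity check on those figures, the sketch below computes the compound annual growth rate implied by going from roughly $700 billion of spend this year to the $3 to $4 trillion envelope by 2030. Treating the two figures as like-for-like and using a four-year span from 2026 are simplifying assumptions, and `implied_cagr` is a hypothetical helper, not anything from the call.

```python
def implied_cagr(start: float, end: float, years: int) -> float:
    """Compound annual growth rate needed to grow `start` into `end` over `years`."""
    if start <= 0 or end <= 0 or years <= 0:
        raise ValueError("start, end and years must all be positive")
    return (end / start) ** (1 / years) - 1


START_SPEND_B = 700          # ~$700B of AI spend this year (figure from the post)
TARGETS_B = (3_000, 4_000)   # the $3 to $4 trillion envelope by 2030
YEARS = 2030 - 2026          # assumed four-year span

for target in TARGETS_B:
    rate = implied_cagr(START_SPEND_B, target, YEARS)
    print(f"${target:,}B by 2030 implies about {rate:.0%} growth per year")
```

Under those assumptions, the envelope implies annual growth somewhere in the 40s to 50s in percentage terms, sustained for four years.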

He then expands on ‘AI factories’, a favorite term he’s used for some time now:

“And whether you are a company in the cloud data centers, you have AI factories to generate tokens for your revenues. If you are an enterprise software company, you are going to generate tokens for the systems that are on top of your tools. If you are a robotics factory—and self-driving cars, first indication of that—you have huge supercomputers, which are basically AI factories, to generate tokens that goes into your cars, that becomes its AI. And then you also have to put computers inside the cars to continuously generate tokens. And so we are fairly sure now that this is the future of computing.”

I would add AI Devices, AI computing on billions of smartphones and local computers/laptops as well into that ‘token demand’ mix. He continues:

“Now why is it so certain that this is the future of computing? And the reason for that is because the way we used to do software was pre-recorded. Everything was captured a priori. We pre-compiled the software. We pre-write the content. We pre-record the videos. But now everything is generative in real time. And when it is generated in real time, it can take into context of the person, the situation, the query, and the intentions could all be taken into consideration to generate the outcome of this new software called—we call AI—agentic AI. And so the amount of computation necessary is far, far greater than pre-recorded.”

“You know, just as a computer has a lot more computation capability than a DVD player that was pre-recorded, artificial intelligence needs a lot more computing capability than the way we used to do software in the past.”

This is where it gets into the transition from AI chatbots to CLI-driven AI Agents, like OpenClaw (OpenAI), Claude Code and Cowork (Anthropic), Manus (Meta) and countless more AI Agent systems to come:

“Now, the question about computation, about sustainability at the first level, is just at the computer science level. This is the way computing is going to be done now. From an industrial level, because as all of our companies, in the final analysis, are powered by software and the cloud companies are powered by software. And if the new software requires tokens to be generated and the tokens are monetized, then it stands to reason that their data center build-out directly drives their revenues. And so compute drives revenues. And I think they all understand that. I think people are increasingly starting to understand that as well.”

“And then lastly, you know, the benefits that AI produces for the world ultimately has to generate revenues. And we are seeing—right in front—being developed as we stand here, agentic AI has turned an inflection point. And it literally happened in the last couple, two, three months. Of course, inside the industry, we have been seeing it for a while—you know, probably six months or so. But the world is now awakened to the agentic AI inflection. The agents are super smart. They are solving real problems.”

The starkest near-term evidence is the runaway success of AI Coding software I’ve discussed for a while here:

“Coding is obviously supported by agentic systems now, and all of our coders here at NVIDIA Corporation are using systems—either Claude Code or OpenAI Codex—enormously, and oftentimes both, and Cursor, oftentimes all three, depends on the use case. But they have agents and co-designed partners, engineering partners, to help them solve problems. And you could see their revenue skyrocketing. You know, these companies, in the case of Anthropic, I think their revenues ten in a year. And they are severely capacity constrained because demand is just incredible. And the token demand is incredible. The token generation rate is growing exponentially. And the same thing with, of course, OpenAI. Their demand is incredible.”

“And so the more compute that they can stand online, bring online, the faster their revenues will grow. And that goes back to the comment that I was saying, that inference is revenues, that compute equals revenues. Now, in this new world. And in a lot of ways, that is the reason why we say it is a new industrial revolution. There are new factories, new infrastructure being built, and this new way of doing computing is not going to go back.”

The AI toothpaste is thus truly out of the tube as I’ve been saying. Or as Jensen puts it, “computing is not going to go back”.

And AI Agents will soon extend into what I’ve been discussing as ‘Physical AI’ and ‘World AI Models’ as AI scales:

“And so to the extent that we believe that producing tokens is going to be the future of computing, which I believe, and I think largely the industry believes, then we are going to be building out this capacity from this point forward and continue to expand from here.”

“Now, the thing that is the wave that we are seeing now is the agentic AI inflection, and the next inflection beyond that is physical AI, where we take AI and these agentic systems into the physical applications such as manufacturing, such as robotics. And so that is a giant opportunity ahead.”

The whole discussion is worth a closer read. But the above section is critical to truly internalizing the next phase of this AI Tech Wave ahead.

We’ll hear more on all this from Jensen soon, at his keynote at Nvidia’s annual GTC conference on March 16-19, 2026.

For now, Nvidia continues its uninterrupted reign in AI Compute with gobs of AI Tokens at ever-improving cost per watt. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)