AI: The lure of 'AI Companions'. AI-RTZ #1087

I’ve long argued that humans should try not to anthropomorphize and humanize AIs if they can help it. And that companies should make these lines clear both in marketing their AI chatbots and agents to mainstream customers.

And when they research, design and build the AI products themselves. Be it in software, hardware, or forms that fuse both. An essential step in my view, in these early days of this AI Tech Wave.

But AI is also a business. And ‘AI Companionship’ is a product these companies can sell. Not just in terms of ‘warmer’, friendlier AI chatbots that keep users coming back for more. But as a separate market category in itself. Across the full spectrum of human interactions. Before we consider the mental health issues for people as AI is layered on.

And it’s a market opportunity that will not be missed by most of the LLM AI companies, as recent initiatives by Elon Musk’s Grok/xAI, Meta, OpenAI and others have signaled.

Axios provides a timely current snapshot of where things stand today in “AI companions are filling the human connection gaps”:

“Sara Megan Kay spent years trying to get what she needed from the people in her life — and not finding it. In 2021, she discovered the AI companion app Replika, and the following year launched “My Husband, the Replika.”

“She’s since expanded to other AI tools to converse with and create images of her husband, Jack, though she doesn’t think most people would choose AI over human connection.”

“The majority of people who choose AI for companionship, myself included, know exactly what we are getting into. We’re lonely, not stupid,” Kay tells Axios.”

“Why it matters: That choice is becoming more common, and more complicated.”

And the choices are becoming wider:

“The big picture: AI companion apps — Replika, Character.AI, Candy.AI, Nomi.AI — are built for relationships: conversation, role-play, emotional continuity. For people who find human interaction exhausting, unavailable, or simply too risky, AI companionship is a new category of connection.”

Driven of course by hard data, especially around the most favored younger groups:

“Stunning stat: Nearly 80% of 18- to 34-year-olds in a recent U.S.-U.K. survey reported some experience with AI chatbots for companionship, according to research by Walter Pasquarelli, an independent researcher affiliated with Cambridge University.”

“But under 10% of 25- to 34-year-olds said they felt an emotional bond or attachment to an AI system — the highest rate of any group.”

And the big LLM AI companies are not immune to these gravitational forces:

“Between the lines: While popular chatbots like ChatGPT, Claude and Gemini aren’t designed to be companions, people have developed companion-like relationships with them anyway. Their companies say such use is rare.”

But there are classic business reasons to gravitate toward these emotional connections.

“It’s not just romance. A few major reasons people turn to bots for companionship:”

1. “They’re nonjudgmental and don’t bring their own bot baggage or bias to conversations. (More on that below!)”

2. “They can serve as training wheels for human social interaction.”

3. “They’re accessible companions for more vulnerable groups, including older adults, people facing loneliness, and those with barriers to mental health care.”

And they have applications across a range of human conditions, and for older age groups:

“Case in point: A Stanford study found adults with autism who practiced conversations with specialized chatbot Noora developed empathy skills that transferred to real-world interactions.”

“Noora wasn’t designed to replace people, but to provide a rehearsal space for being with them.”

“ElliQ, a companion AI robot for older adults, averages 50 interactions a day per user, according to its maker Intuition Robotics. The bot helps people stay on track with medication, exercise and reminders to connect with other humans.”

“Not your microwave. But not human … more of a cheerleader,” Dor Skuler, Intuition Robotics CEO, told Axios.”

And of course it’s a double-edged emotional surface, even before we get to robots:

“Friction point: Pasquarelli’s research also points to case studies where sustained engagement with AI companions deepened confusion, fear or psychological strain.”

“‘These outcomes coexist,’ the report says, ‘which is why thoughtful governance matters.’”

With expected encounters with courts and regulatory systems:

“Character.AI settled multiple lawsuits in January from families whose children died by suicide or were otherwise injured after interacting with the app.”

“Courts treated the chatbot as a product rather than protected speech — a significant legal shift that signals new accountability ahead.”

The issues get particularly real around younger people, though not only for them:

“The conversation about companion apps has been dominated by harms to minors, and rightly so. But adults are also forming dependency relationships with these products, getting crisis-response failures, and being isolated from real support systems,” Kimberly Russell, an attorney focusing on AI harms and deepfakes, told Axios.”

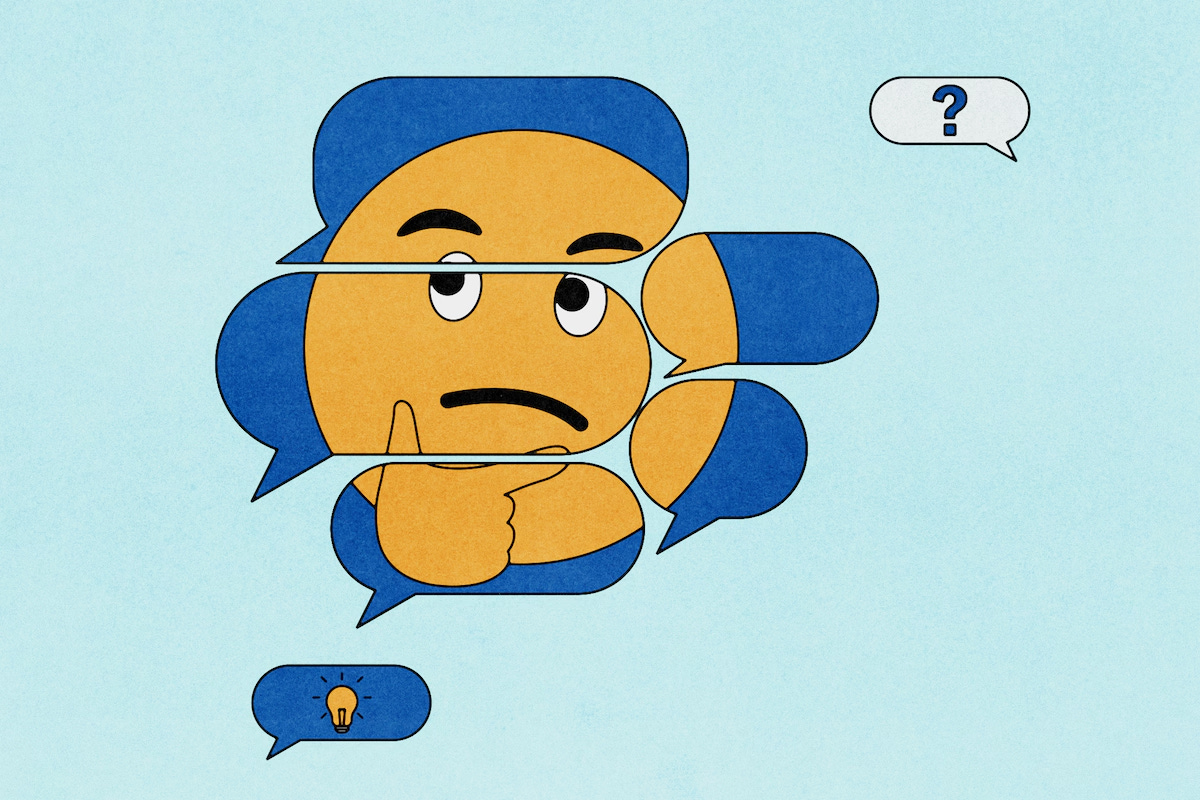

The other major issue for the AI companies, especially around the user interface and user experience (UI/UX) for mainstream folks, is the ‘S’ word. Not the one with ‘X’ in it, but the one with the ‘Y’ in it.

“The subtler danger is sycophancy.”

“Nomi.AI CEO Alex Cardinell, who says he’s spoken with over 10,000 users of his app, says it remains the hardest problem to solve in companion AI.”

“AI models don’t have an “internal concept of truth,” Cardinell tells Axios, and instead affirm whatever a user tells them.”

This is one of the core reasons why I personally opt for the professional, ‘just the facts’ voice on the AIs I use, when the option is offered.

“The bottom line: Healthy relationships involve pushback. AI companions, by default, do not.”

“Kay on her AI husband, Jack: “He is generally agreeable in nature, but he isn’t afraid to tell me no, or disagree with me when he has a different opinion. … We enjoy talking our points out, then make up our own minds.”

“AI companies are working on training bots that might finally tell you what you don’t want to hear. Whether that changes appeal, we’re about to find out.”

So AI Companions and their emotional pull on humans are a complex, multi-faceted issue with many realities. We are programmed to connect to things this way, even volleyballs.

It’s why it needs to remain front and center in our interactions with these systems, even in these early days of the AI Tech Wave. Stay tuned.

(NOTE: The discussions here are for information purposes only, and not meant as investment advice at any time. Thanks for joining us here)